What Are AI Agents? A Plain-English Guide for 2026

AI agents don't just answer questions -- they take action. Learn how they work, what they can reliably do in 2026, and how to start using them.


TL;DR: AI agents are AI systems that take actions, not just answer questions. They perceive their environment, reason about a goal, use tools to act, check the result, and adjust. The agentic AI market hit roughly $9-10 billion in 2026, and 79% of enterprises have adopted agents in some form. Coding and research agents are the most reliable today. Fully autonomous computer-use agents exist but still need human oversight for anything consequential.

AI chatbots answer questions. AI agents do things.

That's the core difference, and it matters a lot when you're figuring out which tools to use and what they can actually handle.

This guide explains what AI agents are, how they work, where they're useful right now (and where they still fall short), and how you can start using them without getting burned.

What Makes Something an "AI Agent"

A regular AI assistant responds to your input. You type, it replies. That's the whole loop.

An AI agent has a goal. It decides what steps to take, uses tools to take those steps, checks whether each step worked, and tries again if it didn't. You assign a task and the agent figures out how to finish it.

Think of it this way: a chatbot is a very smart calculator. An agent is more like a junior employee you can hand off work to.

That's not hype. It's also not magic. Agents are software systems that combine a language model with tools (web search, code execution, file access, APIs) and a feedback loop that keeps running until the job is done.

How AI Agents Actually Work

Most AI agents follow some version of this cycle:

1. Perceive: The agent takes in information: your prompt, files, web pages, database results, whatever inputs it has access to.

2. Reason: The underlying model thinks through the situation. What needs to happen? What's missing? What's the right plan?

3. Act: The agent uses a tool. It might search the web, run code, read a file, call an API, or spin up a subagent to handle part of the job.

4. Observe: It checks the result. Did the action work? Did it return an error? Was the output useful?

5. Adjust and repeat: Based on what it observes, the agent updates its plan and keeps going. This loop runs until the task is done or the agent gets stuck.

The "try, fail, read error, fix, retry" pattern is what separates agents from one-shot tools. A chatbot gives you an answer. An agent tries to get the job done.
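The cycle above can be sketched in a few lines of Python. This is a toy illustration, not how any particular product is built: `fake_model` stands in for the language model, and `calculator`, `TOOLS`, `run_agent`, and `max_steps` are hypothetical names invented for this example.

```python
from typing import Callable

def calculator(expression: str) -> str:
    """Toy tool: evaluates simple 'a + b' expressions, errors on anything else."""
    parts = expression.split("+")
    if len(parts) != 2:
        raise ValueError(f"unsupported expression: {expression!r}")
    return str(int(parts[0]) + int(parts[1]))

# Hypothetical tool registry: tool name -> function taking and returning text.
TOOLS: dict[str, Callable[[str], str]] = {"calculator": calculator}

def fake_model(goal: str, history: list[str]) -> tuple[str, str]:
    """Stand-in for the language model (the Reason step).

    Returns (tool_name, tool_input), or ("finish", answer) when done.
    A real agent would call an LLM here; this stub follows fixed rules.
    """
    if not history:
        return ("calculator", "2 plus 3")       # first attempt is malformed
    last = history[-1]
    if last.startswith("error"):
        return ("calculator", "2 + 3")          # read the error, fix, retry
    return ("finish", last[len("ok: "):])

def run_agent(goal: str, max_steps: int = 5) -> str:
    history: list[str] = []                     # Perceive: goal plus past observations
    for _ in range(max_steps):
        tool, arg = fake_model(goal, history)   # Reason
        if tool == "finish":
            return arg
        try:
            result = TOOLS[tool](arg)           # Act
            history.append(f"ok: {result}")     # Observe a success
        except Exception as exc:
            history.append(f"error: {exc}")     # Observe a failure; next pass adjusts
    return "stuck: step limit reached"

print(run_agent("add 2 and 3"))  # → 5
```

The first tool call fails, the error lands in `history`, and the next pass corrects the input. The `max_steps` cap is the "agent gets stuck" exit: without it, a loop that never succeeds would run forever.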

Multi-Agent Systems

Some tasks are too complex for one agent, so many systems split the work across several agents that cooperate.

An orchestrator agent takes your goal, breaks it into subtasks, and assigns those subtasks to subagents. One subagent might search for information while another writes code while a third formats everything into a deliverable. They run in parallel, which speeds things up and keeps each agent focused on a narrow job.

This is how enterprise teams handle large workflows: customer onboarding, DevOps monitoring, research synthesis, and so on.
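A rough sketch of the orchestrator pattern, using Python's standard thread pool. The three subagents here are hypothetical placeholder functions; in a real system each would wrap its own model and tools.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical subagents, reduced to plain functions for illustration.
def search_agent(topic: str) -> str:
    return f"notes on {topic}"

def code_agent(topic: str) -> str:
    return f"script for {topic}"

def format_agent(parts: list[str]) -> str:
    return " | ".join(parts)

def orchestrate(goal: str) -> str:
    """Split the goal into subtasks, run the independent ones in parallel, then merge."""
    subtasks = [(search_agent, goal), (code_agent, goal)]
    with ThreadPoolExecutor(max_workers=len(subtasks)) as pool:
        futures = [pool.submit(agent, arg) for agent, arg in subtasks]
        results = [f.result() for f in futures]  # collected in submission order
    return format_agent(results)                 # needs the other outputs, so it runs last

print(orchestrate("customer onboarding"))
# → notes on customer onboarding | script for customer onboarding
```

In this sketch only the search and coding subtasks run side by side. The formatting step waits for both, because it depends on their output; deciding which subtasks are truly independent is a large part of the orchestrator's job.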

What AI Agents Can Do Right Now

Coding agents (the most reliable category)

Coding agents are the most mature AI agents available today. About 50% of all agent tool calls in 2026 happen in software engineering contexts, and the reason is simple: code has objective pass/fail feedback. The agent runs the code, reads the error, fixes it, and tries again. The loop works.

Tools like Claude Code, Cursor, and GitHub Copilot Agent can write code, run it, read errors, fix them, and keep going until the code works. They handle multi-file codebases, run tests, and debug across long sessions with minimal hand-holding.

If you code, this is the category worth trying first. Our guide to the best AI coding assistants in 2026 covers the top options in detail.

Research agents

Research agents take a complex question, autonomously search the web, read multiple sources, synthesize the findings, and return a structured report with citations. Tasks that used to take two hours of manual reading now take a few minutes.

Claude's extended research mode, Perplexity, and ChatGPT deep research are the main options. They're the second most mature agent category after coding, mostly because web search is a reliable, well-scoped tool.

Computer use agents

In March 2026, Anthropic launched Claude Computer Use Agent in research preview. It can see your screen, click buttons, open apps, fill in spreadsheets, and complete multi-step workflows on your desktop.

One example Anthropic demonstrated: a user running late tells Claude to export a pitch deck as a PDF and attach it to a calendar invite. Claude handles both steps without any further input.

This category is real and impressive. In production it still fails on complex or unpredictable interfaces. Use it for structured, repeatable tasks. Don't turn it loose on anything dynamic or consequential without a human review step.

Workflow automation

Agents can monitor systems, respond to triggers, and take action automatically. DevOps teams use them to watch for alerts, pull logs, run diagnostics, and post summaries before engineers even know something went wrong.

For practical ideas on what's possible right now, see our guide on using AI to automate daily tasks.

The Numbers Behind AI Agents in 2026

- The global agentic AI market sits at roughly **$9-10 billion in 2026**, up from $7.3 billion in 2025. It's projected to hit $93-139 billion by the early 2030s at a CAGR of 40-45%.

- 79% of enterprises have adopted AI agents in some form. Only 11% run them in production.

- Enterprise deployments that do run agents in production report an average 171% ROI, according to Deloitte's 2026 State of AI in the Enterprise report.

- Gartner forecasts 40% of enterprise applications will embed task-specific AI agents by 2026, up from under 5% in 2025.
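For readers who want to see what a 40-45% compound annual growth rate implies, here is a small back-of-the-envelope calculation. The starting values and rates are the ends of the ranges quoted in the list above; `project` is a hypothetical helper, and the output is simple compounding, not a forecast.

```python
def project(start: float, cagr: float, years: int) -> float:
    """Compound a starting value forward at a fixed annual growth rate."""
    return start * (1 + cagr) ** years

# Low and high ends of the quoted 2026 market size (USD billions) and CAGR.
for start, rate in ((9.0, 0.40), (10.0, 0.45)):
    for year in (2030, 2032, 2034):
        value = project(start, rate, year - 2026)
        print(f"start={start} rate={rate:.0%} {year}: {value:.0f}")
```

Under these assumptions the market crosses into the $93-139 billion band somewhere around 2032 to 2034, which is broadly in line with the "early 2030s" projection.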

The gap between "we're exploring it" and "it's running in production" is the defining challenge right now. Most organizations are experimenting. Few have figured out where agents actually earn their keep.

What AI Agents Still Can't Do Well

Being honest about the limits matters.

Complex, dynamic interfaces trip agents up. Computer use agents work well on structured, predictable screens. They break on sites with unusual layouts, CAPTCHAs, or unpredictable interactions.

High-stakes autonomous actions are risky. Don't let an agent send emails to real people, make purchases, or take any irreversible action without a human review step. The failure modes are unpredictable and the consequences are real.
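One common way to add that review step is an approval gate in front of irreversible actions. The sketch below is a minimal illustration under assumed names (`Action`, `execute`, and the `approve` callback are all hypothetical); a real deployment would route the approval to a person through a UI or chat message.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Action:
    name: str
    irreversible: bool
    run: Callable[[], str]

def execute(action: Action, approve: Callable[[Action], bool]) -> str:
    """Run reversible actions directly; ask a human before irreversible ones."""
    if action.irreversible and not approve(action):
        return f"blocked: {action.name}"
    return action.run()

draft = Action("draft_email", irreversible=False, run=lambda: "draft saved")
send = Action("send_email", irreversible=True, run=lambda: "email sent")

def deny_all(action: Action) -> bool:
    """Stand-in for a reviewer who rejects every request."""
    return False

print(execute(draft, deny_all))  # → draft saved
print(execute(send, deny_all))   # → blocked: send_email
```

The agent can still draft freely, and only the step that cannot be undone waits for a person.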

Long chains of dependent steps still have reliability issues. Each step introduces a chance of error, and errors compound. The more autonomous you make an agent, the more robust your error handling needs to be.
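To see how quickly errors compound, assume (purely for illustration) that each step succeeds 95% of the time and that steps fail independently. The chance that the whole chain succeeds is then the per-step rate raised to the number of steps. `chain_success` is a hypothetical helper, and the 95% figure is an assumption, not a measured value.

```python
def chain_success(per_step: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return per_step ** steps

for n in (1, 5, 10, 20):
    print(n, round(chain_success(0.95, n), 2))
```

With these numbers a 10-step task finishes cleanly about 60% of the time, and a 20-step task about 36% of the time. That is why retries, checkpoints, and validation between steps matter more as chains get longer.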

Most industries outside software are barely using agents yet. Healthcare, legal, and finance each account for under 5% of total agent tool calls as of 2026. That's not a sign of low value -- it's a sign tooling and trust haven't caught up.

AI Agents vs. Regular Chatbots: The Practical Difference

| | Chatbot | AI Agent |
|---|---|---|
| What it does | Answers questions | Completes tasks |
| Tools access | Usually none | Search, code, APIs, files |
| Loop | Single turn | Multi-step until done |
| Human input needed | Every turn | Set it, check the result |
| Best for | Q&A, drafting | Research, coding, automation |

The right tool depends on the job. For a quick question, a chatbot is faster. For a job with multiple steps and external lookups, an agent is the right choice.

How to Start Using AI Agents Today

You don't need to build anything. Several agent-capable tools are available right now:

- Zemith -- AI chat, coding assistance, and agents built for knowledge workers

- Claude (Anthropic) -- Agent mode, computer use, multi-step research

- Cursor / Claude Code -- Coding agents for developers

- Perplexity -- Research agent with real-time web access

- OpenAI Operator -- Task-focused web agent

If you're new to working with AI systems, start by learning how to write clearer prompts. Agents respond well to clear goals, specific constraints, and defined stopping conditions. Vague instructions produce vague results.
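One way to make those three ingredients hard to forget is to write the task down as a small structure before handing it to an agent. This is only a sketch: `TaskBrief` and its fields are hypothetical names, and the resulting text would be pasted into whichever tool you use.

```python
from dataclasses import dataclass, field

@dataclass
class TaskBrief:
    goal: str
    constraints: list[str] = field(default_factory=list)
    stop_when: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the brief as plain text, one item per line."""
        lines = [f"Goal: {self.goal}"]
        lines += [f"Constraint: {c}" for c in self.constraints]
        lines += [f"Stop when: {s}" for s in self.stop_when]
        return "\n".join(lines)

brief = TaskBrief(
    goal="Summarize the three most-cited reviews of standing desks",
    constraints=["Use sources from the last two years", "Cite every claim"],
    stop_when=["Three sources are summarized", "No new sources after two searches"],
)
print(brief.to_prompt())
```

An empty `stop_when` list is a useful warning sign: it usually means the task has no defined finish line yet.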

For your first real use case, pick something repeatable and low-risk. A research task. A coding problem. A document summary. Get a feel for where the agent succeeds before trusting it with anything that can't be undone.

Frequently Asked Questions

What's the difference between an AI agent and a chatbot? A chatbot answers questions in a single turn. An agent takes multi-step actions using tools and keeps working until a task is done, or it hits a wall.

Are AI agents safe to use? For low-risk, reversible tasks -- yes. For anything involving real money, real emails to real people, or irreversible system changes -- keep a human in the review loop.

Do I need to code to use AI agents? No. Tools like Claude, Zemith, and Perplexity let you use agents through a chat interface. You describe the task; the agent handles the mechanics.

What's a multi-agent system? A setup where one orchestrator agent manages multiple subagents that each handle part of a larger task. It's faster for complex work and keeps individual agents focused on a single job.

How are AI agents different from RPA? Robotic Process Automation follows fixed scripts. If a button moves, the script breaks. AI agents reason about what they see and adapt. They're slower and more expensive than RPA for stable, structured processes -- but far more flexible for anything dynamic.

Will AI agents replace jobs? They'll replace specific tasks before they replace whole jobs. High-volume, rule-based work -- data entry, basic research, code review, customer triage -- is the near-term target. Roles that require judgment, relationships, and creative decisions are less immediately affected.

The Bottom Line

AI agents are the step beyond chatbots. They're real, they're useful, and the most reliable versions -- coding agents and research agents -- are worth trying now.

The bigger promise of fully autonomous agents that handle complex work without supervision is real but not fully there yet. The reliability gap between impressive demos and production deployments is the honest story of 2026.

Start small. Pick low-risk tasks. Keep humans in the loop for anything consequential. Build from there. That's how you get real value from agents today without the painful failures that come from trusting them too much, too fast.

استكشف ميزات زميث

كل الذكاء الاصطناعي. اشتراك واحد.

ChatGPT، Claude، Gemini، DeepSeek، Grok و25+ نموذج

ذكاء اصطناعي فوري ومباشر.

صوت + مشاركة شاشة · إجابات فورية

ما أفضل طريقة لتعلم لغة جديدة؟

الانغماس والتكرار المتباعد هما الأفضل. حاول استهلاك وسائط بلغتك المستهدفة يومياً.

صوت + مشاركة شاشة · الذكاء الاصطناعي يجيب في الوقت الفعلي

توليد الصور

Flux، Nano Banana، Ideogram، Recraft + المزيد

اكتب بسرعة الفكر.

إكمال تلقائي، إعادة كتابة وتوسيع بأمر

أي مستند. أي صيغة.

PDF أو رابط أو YouTube → دردشة، اختبار، بودكاست والمزيد

إنشاء الفيديو

Veo، Kling، MiniMax، Sora + المزيد

تحويل النص إلى كلام

أصوات ذكاء اصطناعي طبيعية، 30+ لغة

توليد الأكواد

كتابة، تصحيح وشرح الأكواد

الدردشة مع المستندات

رفع ملفات PDF، تحليل المحتوى

ذكاؤك الاصطناعي في جيبك.

وصول كامل على iOS وAndroid · مزامنة في كل مكان

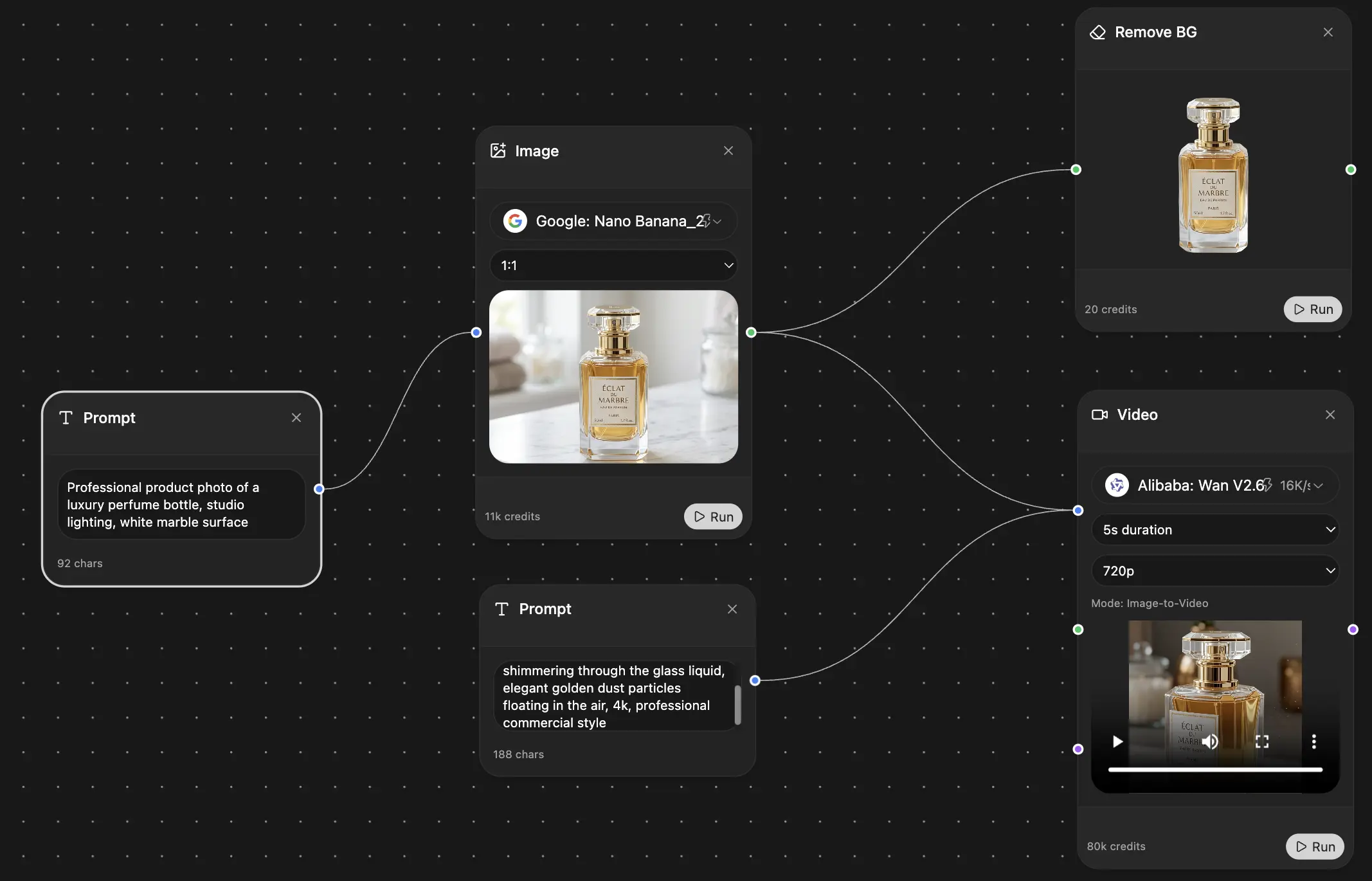

لوحتك اللامتناهية للذكاء الاصطناعي.

دردشة، صور، فيديو وأدوات حركة — جنبًا إلى جنب

وفر ساعات من العمل والبحث

تسعير بسيط وبأسعار معقولة

موثوق من قبل فرق في

مجاني

لا يتطلب بطاقة ائتمان

- 100 رصيد يومياً

- 3 نماذج ذكاء اصطناعي للتجربة

- دردشة ذكاء اصطناعي أساسية

بلس

- 1,000,000 رصيد/شهر

- أكثر من 25 نموذج ذكاء اصطناعي — GPT-5.2، Claude، Gemini، Grok والمزيد

- Agent Mode مع بحث الويب وأدوات الكمبيوتر والمزيد

- Creative Studio: توليد الصور وتوليد الفيديو

- Project Library: الدردشة مع المستندات والمواقع ويوتيوب، إنشاء البودكاست، البطاقات التعليمية، التقارير والمزيد

- Workflow Studio و FocusOS

احترافي

- كل شيء في بلس، و:

- 2,100,000 رصيد/شهر

- نماذج حصرية للمحترفين (Claude Opus، Grok 4، Sonar Pro)

- Motion Tools و Max Mode

- أول وصول إلى أحدث الميزات

- الوصول إلى عروض إضافية