Supercharge Your Workflow with a Claude Code Review

Level up your development with our guide to Claude code review. Learn actionable prompts, workflows, and tips to write better code faster.

So, what exactly is a Claude code review? It’s pretty simple: you get Anthropic's AI, Claude, to take a first pass at your source code. It scans for everything from pesky bugs and style issues to glaring security holes and performance bottlenecks. Think of it as an on-demand peer reviewer that's always ready to go and has never had a bad day.

Why Your Next Code Review Should Be with an AI

Let's be real—manual code reviews can be a total slog. You’re painstakingly combing through hundreds of lines, hunting for that one logic flaw or typo, while your own interesting work sits on the back burner. It’s a chore we all have to do, but it doesn't have to be so painful.

Using an AI like Claude for that first-pass review isn’t just some sci-fi fantasy; it’s a massive productivity booster. I’ve seen developers go from total skeptics to making a Claude code review a standard part of their daily routine. The "aha!" moment usually comes when the AI flags a subtle bug that two senior engineers already skimmed right over. Ever had that happen? It's both humbling and awesome.

Beyond Simple Syntax Checking

Forget what you think you know about automated checkers. This isn't your old-school linter that just yells about semicolons. Modern AI gets the context. It can spot complex logical errors, suggest clever performance tweaks you hadn't thought of, and even explain why a change is needed. It’s like having a senior dev on standby 24/7, ready to give your PR a thorough review the second you create it.

This changes the game for development teams in some huge ways:

- Ship Faster: AI reviews can dramatically cut down the time a pull request sits waiting for human review. That means your whole development cycle gets a speed boost.

- Fewer Bugs in Production: Catching more issues before they merge means cleaner, more stable code for your users. It’s that simple.

- Help Junior Devs Level Up: Junior engineers get instant, private feedback. This helps them learn best practices without the classic "review anxiety" of waiting for a senior dev to tear their code apart.

- Free Up Your Seniors: When the AI handles the routine checks, your senior engineers can stop nitpicking and focus their brainpower on system architecture and tough, high-impact problems.

This isn't about replacing human reviewers. It's about making them more effective. The AI handles the first pass and catches the bulk of the common stuff, so your human experts can focus on the critical remainder that requires deep architectural knowledge and business context.

Making AI a Real Part of Your Workflow

The real magic happens when you stop copy-pasting code into a separate chat window and start integrating AI directly into your tools. Imagine an environment where your AI assistant is just there, right inside your editor.

This is exactly the thinking behind platforms like Zemith. By building the AI right into the workflow, you create a powerful and efficient loop, and the Claude code review becomes a seamless part of how you write and ship software, not just another task to check off a list.

Crafting Prompts That Get You Great Code Reviews

Just tossing a chunk of code at an AI and asking it to "review this" is a recipe for disappointment. You'll get something back, sure, but it will likely be generic and not very helpful. The real magic behind a top-tier code review comes from how you ask.

Think of it as giving your AI assistant a detailed "code review rubric" instead of a vague command. A fuzzy prompt leads to fuzzy feedback. A sharp, detailed prompt, on the other hand, delivers actionable insights that actually help you catch bugs and save time.

This isn't some dark art you need a degree for. It's just about being clear, providing the right context, and knowing what you want.

Give Claude a Persona and a Goal

I've found one of the most powerful tricks is to give Claude a role to play. Don't just let it be a generic AI—tell it who to be. This little bit of role-playing completely changes the quality of its feedback.

- For security: "Act as a senior cybersecurity expert. Go through this Python code and hunt for potential vulnerabilities, specifically SQL injection, cross-site scripting (XSS), and insecure direct object references. Keep it concise and give me code examples for any fixes."

- For performance: "You're a lead performance engineer. Analyze this JavaScript function and point out any performance bottlenecks. I need suggestions for optimizing its speed and memory usage, and please explain the trade-offs for each idea."

When you assign a persona, you're essentially telling the AI to apply a specific filter to its analysis. It's so much more effective than a generic "find bugs" request because it focuses Claude's massive knowledge base on the exact problem you're trying to crack.

Key Takeaway: A solid prompt should always cover three things: a role (who the AI is), a task (what it needs to do), and constraints (how you want the feedback delivered).
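If you write these prompts often, it can help to keep the three parts as a small template so every review has the same shape. The sketch below is a hypothetical helper (ReviewPrompt and its fields are our own names, not part of any Claude or Zemith API); it only assembles the prompt text, reusing the security persona from above, and you would then paste or send the result to Claude.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewPrompt:
    """Hypothetical template holding the role / task / constraints split."""
    role: str                                              # who the AI is
    task: str                                              # what it needs to do
    constraints: list[str] = field(default_factory=list)  # how feedback is delivered

    def render(self, code: str) -> str:
        # Assemble the parts in a fixed order so every review uses the same shape.
        lines = [f"Act as {self.role}.", self.task]
        if self.constraints:
            lines.append("Constraints:")
            lines.extend(f"- {c}" for c in self.constraints)
        lines.append("Code to review:")
        lines.append(code)
        return "\n".join(lines)


# The security persona from the examples above, expressed as a template.
SECURITY_REVIEW = ReviewPrompt(
    role="a senior cybersecurity expert",
    task=(
        "Go through this Python code and hunt for potential vulnerabilities, "
        "specifically SQL injection, cross-site scripting (XSS), and insecure "
        "direct object references."
    ),
    constraints=["Keep it concise.", "Give code examples for any fixes."],
)
```

Calling SECURITY_REVIEW.render(source) gives you the full prompt; swapping in a performance persona is just another ReviewPrompt instance.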

This focused approach really pays off. According to one industry analysis, 34% of interactions with Claude involve computer science, mostly fixing code, and the same analysis reports processing speeds of 0.2 seconds per 1,000 tokens with 99.1% accuracy. Whatever the exact numbers on your own codebase, Claude is a fast partner, and it becomes a reliable one when you guide it properly.

Provide Context and Standards

Claude doesn't know your team's coding style or your project's architecture unless you tell it. If you have specific standards, style guides, or patterns you follow, you need to spell them out in the prompt.

For instance, don't just ask it to check for "good style." Get specific:

"Review this React component and make sure it follows our team's standards:

- State management must use Zustand; no useState for complex objects.

- All components need to use TypeScript and be strongly typed.

- Follow the BEM naming convention for CSS classes.

- Keep the component file size under 200 lines."

This level of detail turns a generic Claude code review into a custom audit of your team's specific practices. It's like having a senior dev who has memorized your entire playbook.
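One way to make a rubric like this easier to act on is to number the rules and ask for one verdict line per rule, so the reply can be scanned (or parsed) instead of read as an essay. This is a minimal sketch under that assumption; the RULE n: PASS / FAIL reply format is our own convention that the prompt asks for, not something Claude emits by default, and the function names are hypothetical.

```python
import re

# The team standards from the prompt above, kept as data so they can be reused.
TEAM_STANDARDS = [
    "State management must use Zustand; no useState for complex objects.",
    "All components need to use TypeScript and be strongly typed.",
    "Follow the BEM naming convention for CSS classes.",
    "Keep the component file size under 200 lines.",
]

# Matches lines such as "RULE 2: FAIL - props are typed as any".
VERDICT_LINE = re.compile(r"^RULE (\d+): (PASS|FAIL)\b(?:\s*-\s*(.*))?$")


def build_standards_prompt(component_source: str, standards: list[str]) -> str:
    """Number each rule and request a one-line verdict per rule."""
    rules = "\n".join(f"{i}. {rule}" for i, rule in enumerate(standards, start=1))
    return (
        "Review this React component and make sure it follows our team's standards:\n"
        f"{rules}\n\n"
        "For each rule, answer on its own line as "
        "'RULE <n>: PASS' or 'RULE <n>: FAIL - <reason>'.\n\n"
        f"{component_source}"
    )


def parse_verdicts(reply: str) -> dict[int, tuple[str, str]]:
    """Collect verdict lines from the reply; any other commentary is ignored."""
    verdicts = {}
    for line in reply.splitlines():
        match = VERDICT_LINE.match(line.strip())
        if match:
            number, status, reason = match.groups()
            verdicts[int(number)] = (status, reason or "")
    return verdicts
```

Models don't always follow output formats perfectly, so treat a missing verdict as "needs a human look" rather than as a pass.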

And here's a pro-tip: with an integrated platform, you can save these detailed rubrics in a shared Prompt Gallery. This turns a complex, multi-point review into a simple, one-click action that your whole team can use consistently. No more copy-pasting the same instructions every time!

Automating Reviews with an Integrated Workflow

Let’s be honest, bouncing between your IDE, GitHub, and a separate AI chat window is a real drag on productivity. Every time you alt-tab, you lose your train of thought. That constant context switching is where a quick code check turns into a half-hour ordeal.

This is exactly why integrated platforms are becoming so popular. The goal is simple: stop juggling tabs and bring all your tools under one roof. Imagine getting a full Claude code review done without ever leaving your main workspace.

A Single Pane of Glass for Code Quality

With something like Zemith’s Coding Assistant, which runs on powerful models like Claude 3 Sonnet, the entire review process happens right where you code. You're not just getting a list of issues. You can get instant explanations, ask the AI to generate a bug fix, and even see a live preview of your changes if you're working on React or HTML. For front-end devs, that immediate visual feedback is incredibly valuable.

That flow is what makes the whole thing work so smoothly. It's a direct path: you feed it your code and your prompt, and a solid review comes back out, all inside the same tool.

From Messy Code to Merged PR, Faster

So, what does this look like in practice? Let's say you just finished a new JavaScript function. It works, but it’s a bit messy, and you want to polish it before opening that pull request.

Instead of navigating to a new tab for Claude, you just paste the code directly into the Zemith Coding Assistant. Then, you can pull up a custom prompt you’ve already saved—something like, "Act as a senior JavaScript engineer and review this code for performance, readability, and potential bugs."

Claude gets to work and gives you a list of suggestions. But here’s the magic. You don’t have to manually apply those changes. You can just tell the AI to do it for you. A quick follow-up like, "Okay, apply those refactoring suggestions and convert the for loop to a map function," and it's done.

The Big Picture: This tight feedback loop—review, suggest, implement—is what really speeds things up. You're not just spotting problems; you're fixing them in seconds with a little help from the AI.
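Outside an integrated tool, the same review, suggest, implement loop is simply a multi-turn conversation: the follow-up works only because Claude sees the original code and its own suggestions again. A minimal sketch, assuming you talk to Claude through Anthropic's Messages API, which takes alternating user and assistant turns; build_followup_messages is a hypothetical helper, and the actual API call is left as a comment so the example runs offline.

```python
def build_followup_messages(
    code: str, review_prompt: str, review_reply: str, instruction: str
) -> list[dict[str, str]]:
    """Conversation history for an 'okay, now apply it' follow-up (hypothetical helper).

    Each turn uses the role/content shape of Anthropic's Messages API.
    """
    return [
        {"role": "user", "content": f"{review_prompt}\n\n{code}"},  # first pass: the review request
        {"role": "assistant", "content": review_reply},              # Claude's suggestions, replayed verbatim
        {"role": "user", "content": instruction},                    # e.g. "apply those refactoring suggestions"
    ]


# Sending it with the official Python SDK would look roughly like:
#   client = anthropic.Anthropic()
#   client.messages.create(model=MODEL_ID, max_tokens=2048, messages=messages)
# where MODEL_ID is whichever Claude model you are using.
```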

This integrated approach is already making an impact. By one estimate, Claude, which has over 300,000 business customers, automates 45% of manual review tasks and helps developers with another 52% of them. That's a strong signal that consolidating tools is where developer productivity is headed.

By keeping everything in one place, you cut out the friction and stay in the zone. It's less about the AI itself and more about weaving it smartly into your day-to-day workflow.

Going Beyond a Single Model for Unbeatable Reviews

Getting Claude to review your code is a fantastic first step. But what if you could get a second, third, or even fourth expert opinion in just a few seconds? Relying on a single AI model is like asking only one person for directions—they might know a great route, but you could be missing out on a shortcut.

This is where a multi-model review strategy comes in. You’re essentially creating your own panel of AI experts. By running your code through a gauntlet of different models, you build a review process that’s far more robust and catches things you’d otherwise miss.
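Once several models have reviewed the same code, you need a way to combine their feedback. A simple heuristic is to rank findings by how many models reported them. The sketch below assumes you have already collected each model's findings as short text lines; merge_findings is a hypothetical helper and the model names in the example are placeholders.

```python
from collections import defaultdict


def merge_findings(findings_by_model: dict[str, list[str]]) -> list[tuple[str, list[str]]]:
    """Group identical findings and rank them by how many models reported each one."""
    reporters: dict[str, list[str]] = defaultdict(list)
    for model, findings in findings_by_model.items():
        for finding in findings:
            key = finding.strip().lower()  # naive normalization: exact text match only
            if model not in reporters[key]:
                reporters[key].append(model)
    # Findings flagged by more models come first; ties are ordered alphabetically.
    return sorted(reporters.items(), key=lambda item: (-len(item[1]), item[0]))


merged = merge_findings({
    "claude": ["SQL injection in get_user", "Unused import os"],
    "model-b": ["sql injection in get_user"],
    "model-c": ["Missing timeout on HTTP call"],
})
```

Exact-text matching is the weak spot: different models rarely phrase the same issue identically, so in practice you would match on file and line, or ask one model to deduplicate the combined list. Findings only one model raised still deserve a look; that is often where the "paranoid security guard" earns its keep.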

Assembling Your AI Review Team

The goal here isn't to find the one "best" model, but to use them together for their unique strengths. Think of it as putting together an AI "dream team" for your code. Claude might be a genius at spotting flaws in your logic, while another model is better at sniffing out obscure security vulnerabilities.

A platform that gives you access to a whole suite of models in one spot makes this easy. You can quickly switch between them to get diverse feedback without juggling a bunch of different accounts and APIs.

It's no secret that AI has taken the development world by storm. One survey found that 95% of software engineers now use AI tools weekly, and benchmark scores have climbed fast, with coding accuracy reaching 84.9% and math problem-solving hitting 95%.

This rapid evolution shows just how powerful specialized AI has become, and a multi-model approach is the next logical step in your workflow.

By pitting different AI models against each other, you're not just reviewing code; you're stress-testing it from multiple angles. One model might be the meticulous stickler for style, while another is the paranoid security guard—you want both on your team.

So, how do you actually do this? You can build a strategy around the different models available right inside Zemith. Here's a quick look at how their specializations can give you a more complete review.

Multi-Model Code Review Strengths

This isn't an exhaustive list, and the models are always getting better.

The key takeaway is to experiment. See which combination gives your code the most thorough workout. It’s the ultimate Claude code review—plus a whole lot more.

Keeping the Human in the Loop for Better Code

AI code reviews are an incredible leap forward, no doubt. But before you start planning a retirement party for your senior devs, we need to talk. The "human in the loop" isn't just a buzzword; it's the absolute key to shipping software that actually works.

Think of Claude as a brilliant junior developer who runs on rocket fuel. It’s a workhorse that can spot syntax errors from a mile away and flag common issues with lightning speed. But it's missing the one thing you have in spades: context.

The Limits of an AI Reviewer

An AI wasn't in the room when your team decided to take on some tech debt to hit a critical launch date. It doesn't know the five-year architectural plan or the subtle business logic that makes a chunk of code look weird but totally necessary.

Blindly accepting AI suggestions is a fast track to chaos. I’ve seen some genuinely scary examples where an AI suggested a "fix" that, while elegant, would have completely torpedoed a core feature because it didn't understand the business requirements behind it. The code looked cleaner, sure, but it would have failed spectacularly in production.

Your job is to be the final gatekeeper. Use the AI for its incredible breadth to catch the low-hanging fruit, but always apply your project-specific depth to make the final call. It's a collaboration, not a hand-off.

A Checklist for Validating AI Suggestions

You wouldn't merge a pull request from a new hire without a thorough review, would you? The same exact principle applies to your Claude code review. Every single suggestion needs a human sanity check.

Here’s a quick checklist I run through before accepting any AI-generated change:

- Business Logic Check: Does this change actually support the feature's goal, or is it just "technically" better code?

- Architectural Fit: Does this "improvement" align with our long-term roadmap, or is it a clever but problematic detour we'll regret later?

- Team Convention Alignment: Does the suggestion follow our specific coding standards and patterns, not just generic best practices?

- Test Impact: What does this change do to our test suite? Will it break existing tests or require writing new ones?
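Of these four checks, only test impact can be partly automated. A minimal sketch, assuming your suite runs from a single command (pytest is used here purely as an example default); suite_passes is a hypothetical helper, and the other three checks stay firmly with a human.

```python
import subprocess
import sys
from typing import Sequence

# Example default only; replace with whatever command runs your project's tests.
DEFAULT_TEST_COMMAND = (sys.executable, "-m", "pytest", "-q")


def suite_passes(test_command: Sequence[str] = DEFAULT_TEST_COMMAND, timeout_s: int = 600) -> bool:
    """Run the test command after applying an AI suggestion; True only on exit status 0."""
    try:
        result = subprocess.run(list(test_command), capture_output=True, text=True, timeout=timeout_s)
    except subprocess.TimeoutExpired:
        return False  # treat a hung suite as a failure, never as a pass
    return result.returncode == 0
```

A green suite only tells you the change didn't break what you already test; whether it needs new tests is still your call.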

Making this validation step a non-negotiable part of your workflow is crucial.

Even with an AI assistant, the fundamentals still matter. A solid grasp of code review best practices will make you a much better partner to your AI counterpart.

Ultimately, a Claude code review is here to augment your skills, not replace them. A platform like Zemith makes this partnership feel seamless, letting you get instant AI feedback and then use your own expertise to validate and implement the changes that truly matter—all in one fluid workflow.

Common Questions About Claude Code Reviews

Whenever I talk to teams about using AI for code reviews, the same questions always pop up. It's completely normal to have a few hesitations before you dive in, so let's walk through some of the big ones I hear all the time.

Chances are, if you're wondering about something, someone else is too.

Can Claude Review for Compliance Standards?

Yes, but you have to be really specific with how you ask. Just throwing a file at Claude and asking, "Is this code HIPAA compliant?" isn't going to get you very far. It'll probably give you a vague, lawyer-y response that helps nobody.

You have to feed it the exact rule you're checking against. Think of it less like a general question and more like a targeted audit.

For instance, you'd want to prompt it like this: "Review this C# code handling patient data. HIPAA's transmission security standard, 45 CFR 164.312(e)(1), requires guarding patient data in transit against unauthorized access, and our policy is to encrypt it. Verify that all data transmission here uses TLS 1.2 or higher."

By giving it the direct rule, you get a much more reliable check. This is where a platform like comes in handy—you can save these detailed compliance prompts so anyone on your team can run a consistent check with just a click.
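It also helps to know what a passing answer looks like. The article's example is C#; the sketch below shows the equivalent pattern in Python's standard library: a certificate-verifying client context that refuses anything older than TLS 1.2, plus a hypothetical send function that rejects plain HTTP. The function names and the endpoint are illustrative assumptions, and the TLS 1.2 floor mirrors the prompt above rather than any wording in the regulation.

```python
import ssl
import urllib.request


def make_tls12_context() -> ssl.SSLContext:
    """Client context that verifies certificates and refuses protocols older than TLS 1.2."""
    context = ssl.create_default_context()            # certificate and hostname checks on
    context.minimum_version = ssl.TLSVersion.TLSv1_2  # reject SSL, TLS 1.0 and TLS 1.1
    return context


def post_patient_record(url: str, payload: bytes) -> int:
    """Send data only over HTTPS using the TLS 1.2+ context (hypothetical endpoint)."""
    if not url.startswith("https://"):
        raise ValueError("patient data must not be sent over plain HTTP")
    request = urllib.request.Request(url, data=payload, method="POST")
    with urllib.request.urlopen(request, context=make_tls12_context(), timeout=10) as response:
        return response.status
```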

How Does This Compare to Static Analysis Tools?

They're partners, not competitors. Think of a static analysis tool as your by-the-book security guard. It's incredible at enforcing a strict set of predefined rules and will catch common mistakes and code smells with brutal efficiency.

A Claude code review, on the other hand, is more like getting feedback from a creative senior developer. It’s great at understanding the intent behind the code. It can spot tricky logic bugs, suggest better architectural patterns, and point out things that, while not technically "wrong," are a mess waiting to happen.

The best workflow I've seen uses both. Run your static analysis scan first to get rid of the obvious stuff. Then, use Claude to do a deeper, more thoughtful review of the pull request. It's a two-layer approach that gives you fantastic coverage.
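Here is a minimal sketch of that two-layer idea for Python sources. The "static analysis" is a deliberately tiny check built on the standard ast module (it flags bare except: clauses), standing in for a real analyzer; its findings are handed to Claude so the second pass skips them and spends its attention on intent and logic. Both function names are hypothetical.

```python
import ast


def find_bare_excepts(source: str) -> list[int]:
    """Toy rule-based pass: line numbers of bare 'except:' clauses."""
    tree = ast.parse(source)
    return sorted(
        node.lineno
        for node in ast.walk(tree)
        if isinstance(node, ast.ExceptHandler) and node.type is None
    )


def build_second_pass_prompt(source: str) -> str:
    """Tell Claude what the rule-based pass already caught so it can focus elsewhere."""
    flagged = find_bare_excepts(source)
    already = ", ".join(str(n) for n in flagged) or "none"
    return (
        "Static analysis already flagged bare except clauses on lines: "
        f"{already}. Don't repeat those. Focus on logic bugs, unclear intent, "
        "and design problems a rule-based tool would miss.\n\n"
        f"{source}"
    )
```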

What Is the Best Way to Integrate This into CI/CD?

Full CI/CD automation is the end goal for many, but getting there with direct API integrations can be a heavy lift. There's a much more practical way to start.

What I've seen work really well is a "pre-review" step. Using an integrated coding assistant, a developer can just copy the diff from their pull request, drop it in with a saved "PR Review" prompt, and get feedback in seconds.

This lets the developer find and fix issues before they even ask a teammate for a review. It takes a huge load off your senior engineers and keeps things moving quickly. It also answers the question "how can I improve code review comments?": catch the problems before the comments are even needed.
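If you later want the same pre-review from a terminal or a simple CI job, the moving parts are small: grab the branch diff, wrap it in your saved prompt, and send it. A minimal sketch, assuming git is available, your base branch is called main, and you use Anthropic's official Python SDK (pip install anthropic, API key in ANTHROPIC_API_KEY). The function names and the character cap are our own assumptions, and the model ID is left for you to supply.

```python
import subprocess

MAX_DIFF_CHARS = 60_000  # arbitrary cap so a huge diff doesn't overflow the context window


def branch_diff(base: str = "main") -> str:
    """Changes on the current branch since it diverged from `base` (requires git)."""
    result = subprocess.run(
        ["git", "diff", f"{base}...HEAD"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def build_pr_review_prompt(diff: str, max_chars: int = MAX_DIFF_CHARS) -> str:
    """A saved 'PR Review' prompt with the diff appended, truncated if it is too long."""
    note = "\n[diff truncated; review only what is shown]" if len(diff) > max_chars else ""
    return (
        "Act as a senior engineer reviewing a pull request. Review this diff for "
        "bugs, readability, and performance. Reference file names and hunks in "
        "your comments.\n\n" + diff[:max_chars] + note
    )


def request_review(prompt: str, model: str) -> str:
    """Send the prompt through Anthropic's Messages API and return the reply text."""
    import anthropic  # imported lazily so the prompt helpers work without the SDK

    client = anthropic.Anthropic()
    message = client.messages.create(
        model=model,
        max_tokens=2048,
        messages=[{"role": "user", "content": prompt}],
    )
    return message.content[0].text
```

Usage would be along the lines of print(request_review(build_pr_review_prompt(branch_diff()), model=YOUR_MODEL_ID)). Keeping the result as advisory output, rather than a blocking CI check, fits the human-in-the-loop approach described earlier.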

Ready to stop juggling tabs and bring your entire AI-powered workflow into one place? Zemith pulls everything together, from multi-model AI access and a powerful coding assistant to deep research tools, all in a single platform.

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

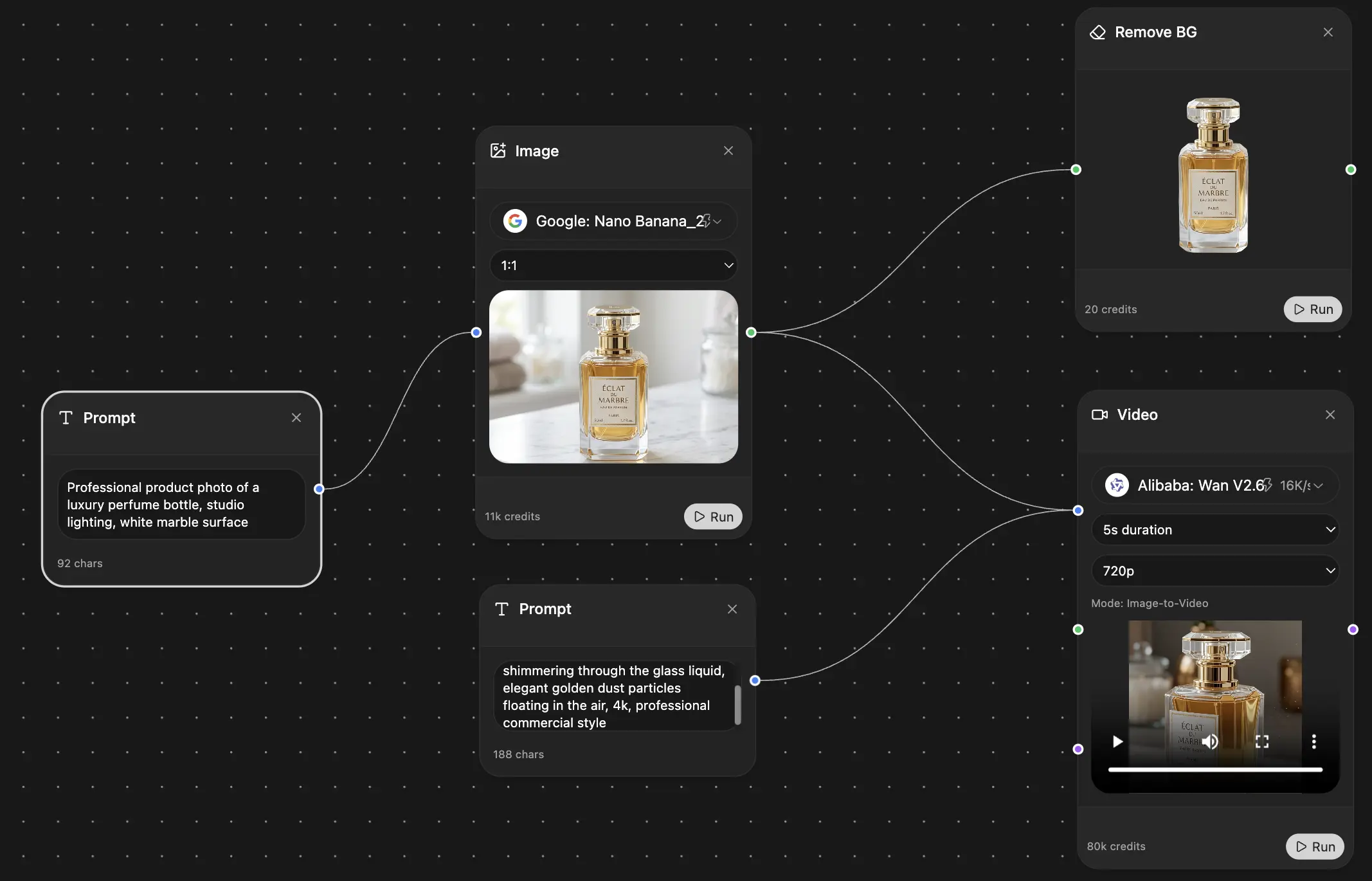

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers

What Our Users Say

Great Tool after 2 months usage

"I love the way multiple tools they integrated in one platform. Going in the right direction."

— simplyzubair

Best in Kind!

"The quality of data and sheer speed of responses is outstanding. I use this app every day."

— barefootmedicine

Simply awesome

"The credit system is fair, models are perfect, and the discord is very responsive. Quite awesome."

— MarianZ

Great for Document Analysis

"Just works. Simple to use and great for working with documents. Money well spent."

— yerch82

Great AI site with accessible LLMs

"The organization of features is better than all the other sites — even better than ChatGPT."

— sumore

Excellent Tool

"It lives up to the all-in-one claim. All the necessary functions with a well-designed, easy UI."

— AlphaLeaf

Well-rounded platform with solid LLMs

"The team clearly puts their heart and soul into this platform. Really solid extra functionality."

— SlothMachine

Best AI tool I've ever used

"Updates made almost daily, feedback is incredibly fast. Just look at the changelogs — consistency."

— reu0691