English to Albanian Translation: The AI Workflow That Works

Stop getting bad English to Albanian translation results. Learn a complete, AI-assisted workflow using multiple models for accuracy and a human touch.

You wrote the landing page, the product copy, or the onboarding emails. The English version sounds sharp. Then the Albanian version goes live, and something feels off almost immediately. Replies come in. A prospect sounds confused. A teammate says the wording feels stiff. Someone politely asks what you intended.

That's the real challenge with English to Albanian translation. The failure usually isn’t dramatic. It’s quieter than that. The sentence is technically understandable, but the tone misses, the grammar bends the wrong way, or the formality level lands in the uncanny valley between “professional” and “did a chatbot write this at 2 a.m.?”

I’ve seen teams make the same mistake over and over. They assume Albanian is close enough for a single-pass AI translation. It isn’t. Not if the content matters. Not if the text includes legal language, product messaging, reports, subtitles, UI strings, or anything with actual consequences.

The good news is that there’s a workflow that fixes most of this. Not an “AI does everything” fantasy, and not a painfully slow all-manual process either. The approach that works is hybrid. You use AI for speed, comparison, and draft generation. Then you use human judgment for grammar, register, terminology, and cultural fit.

That Awkward Moment Your Translation Fails

A lot of translation mistakes start with good intentions and bad shortcuts.

A founder wants to launch in Albania quickly. A marketer pastes campaign copy into a free translator. A project manager glances at the output, sees familiar words, and approves it because the deadline is already breathing down everyone’s neck. Then the Albanian version reads like it was assembled from spare parts.

The funny version of this story is the one people share in Slack. The painful version is the one customers see.

What usually goes wrong first

The first problem is almost never vocabulary. It’s usually tone, grammar, or context.

English lets you get away with a lot. Short fragments can sound punchy. Nouns can stack cleanly. Brand lines can stay vague and still feel persuasive. Albanian asks for more commitment. You have to decide who you’re addressing, how formal you’re being, and how the sentence should function in context.

That’s why a phrase that sounds polished in English can become oddly cold, overly literal, or unintentionally awkward after a quick machine pass.

Good translation doesn’t just preserve meaning. It preserves intent.

If you’re translating PDFs, reports, or brochures before you even get to the language layer, it also helps to clean the source text first. A practical place to start is this guide on . Messy extraction creates messy translation. Every time.

The cheap shortcut gets expensive fast

Free tools are fine for checking a message, reading a menu, or figuring out whether a forum post is worth your time. They’re risky for launch copy, contracts, analytics reports, or anything public-facing.

The trap is that the output often looks believable enough to pass a non-native review. That’s what makes it dangerous. Nobody sounds the alarm until the Albanian reader does.

And Albanian readers notice. Fast.

Why Albanian Is A Secret Handshake AI Can't Master

Albanian isn’t impossible for AI. It’s just one of those languages where weak shortcuts get exposed quickly.

Some of the difficulty comes from grammar. Some comes from low-resource training conditions. Some comes from the fact that a sentence can be “translated” while still sounding unlike something a native speaker would ever write.

The grammar problem is real

Albanian uses a postpositive definite article and a noun case system (nominative, accusative, genitive, dative, and ablative, plus a limited vocative), which makes sentence construction feel very different from English in practice. That difference is one reason single-model AI often sounds clunky even when the sentence is understandable.

A useful linguistic overview sits inside this explanation of . The short version is simple. If the model doesn’t understand the role a word plays in the sentence, it guesses. Albanian punishes guessing.

One verified benchmark makes the gap hard to ignore. Standard AI translators achieve only 62% accuracy on Albanian case structures, compared with 85% for a language like German, and Albanian appears in only 0.1% of some public datasets according to .

That tracks with what practitioners see. The model often gets close enough to sound plausible, but not close enough to trust.

Where single-model output breaks

The failures show up in predictable places:

- Formality choices: English “you” doesn’t force a choice. Albanian does.

- Word order: A literal carryover from English can feel stiff or unnatural.

- Case endings: A sentence may read as almost right, which is often worse than obviously wrong.

- Verb handling: Tense and agreement can wobble in longer sentences.

- Idioms: These are where AI starts acting overly confident.

A single engine might translate a phrase word-for-word and call it a day. A native editor immediately sees the sentence structure doesn’t breathe right.

Albanian isn't just one neat box

There’s also the issue of usage. Albanian isn’t only about direct word mapping. Register matters. Region matters. Audience matters.

Marketing copy for a startup, a municipal report, and app onboarding text should not sound like they came from the same prompt template. Yet that’s often what happens when teams rely on one output and skip comparison.

If the sentence is grammatically acceptable but socially wrong, the translation still failed.

That’s the part many AI-first tutorials skip. They treat translation like substitution. Albanian demands interpretation.

The Zemith AI-Assisted Translation Workflow

The workflow that holds up under pressure has three parts. Prepare the text. Compare multiple drafts. Then do a human pass that focuses on meaning, tone, and risk.

This is the point where a lot of teams either save hours or create a cleanup nightmare for next week.

Phase one, prepare before you translate

Most bad translations are already doomed before the first prompt.

If your English source is inconsistent, bloated, or full of unexplained internal language, the Albanian output will inherit that confusion. Before generating anything, clean the source and define what must stay stable.

I usually lock down four things first:

- Brand terms that should not be translated: Product names, feature labels, campaign names, and internal frameworks need explicit handling.

- Terms that need one approved Albanian equivalent: This matters for pricing pages, support content, product tours, and recurring UI text.

- Audience and register: Are you speaking to customers, partners, officials, or end users? That changes the voice.

- Content type: A legal clause, a push notification, and a homepage headline should not go through the same prompt untouched.
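One way to enforce the first item mechanically is to mask protected names before the text ever reaches a model, then restore them afterward. The sketch below is a minimal illustration, not a feature of any particular tool: the function names, the `[[TERM0]]` token format, and the sample product names are all assumptions made up for this example.

```python
import re


def protect_terms(text, do_not_translate):
    """Replace protected terms with opaque tokens so a model cannot translate them.

    Returns the masked text and a token -> original term mapping.
    """
    mapping = {}
    # Longest terms first, so "Acme Board Pro" is masked before "Acme Board".
    ordered = sorted(do_not_translate, key=len, reverse=True)
    for index, term in enumerate(ordered):
        token = f"[[TERM{index}]]"
        # Match the term only when it is not glued to other word characters.
        pattern = re.compile(r"(?<!\w)" + re.escape(term) + r"(?!\w)")
        if pattern.search(text):
            text = pattern.sub(token, text)
            mapping[token] = term
    return text, mapping


def restore_terms(text, mapping):
    """Put the original terms back into the translated text."""
    for token, term in mapping.items():
        text = text.replace(token, term)
    return text


# Hypothetical product names, used only for illustration.
source = "Open Acme Board Pro, then sync Acme Board."
masked, mapping = protect_terms(source, ["Acme Board", "Acme Board Pro"])
restored = restore_terms(masked, mapping)
```

A model can still mangle or drop a token, so it is worth confirming that every token in `mapping` appears in the Albanian draft before restoring.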

A note-taking workspace helps here because you can build a mini glossary before touching the translation step. If you’re building repeatable operations around content, this guide to is worth skimming.

Phase two, compare models instead of trusting one

This is the move that changes the quality ceiling.

Instead of asking one AI to produce the “correct” Albanian version, run the same source through multiple models and compare the output side by side. Don’t look for a winner. Look for patterns. One model may handle sentence rhythm better. Another may choose stronger terminology. A third may avoid a case error the others make.

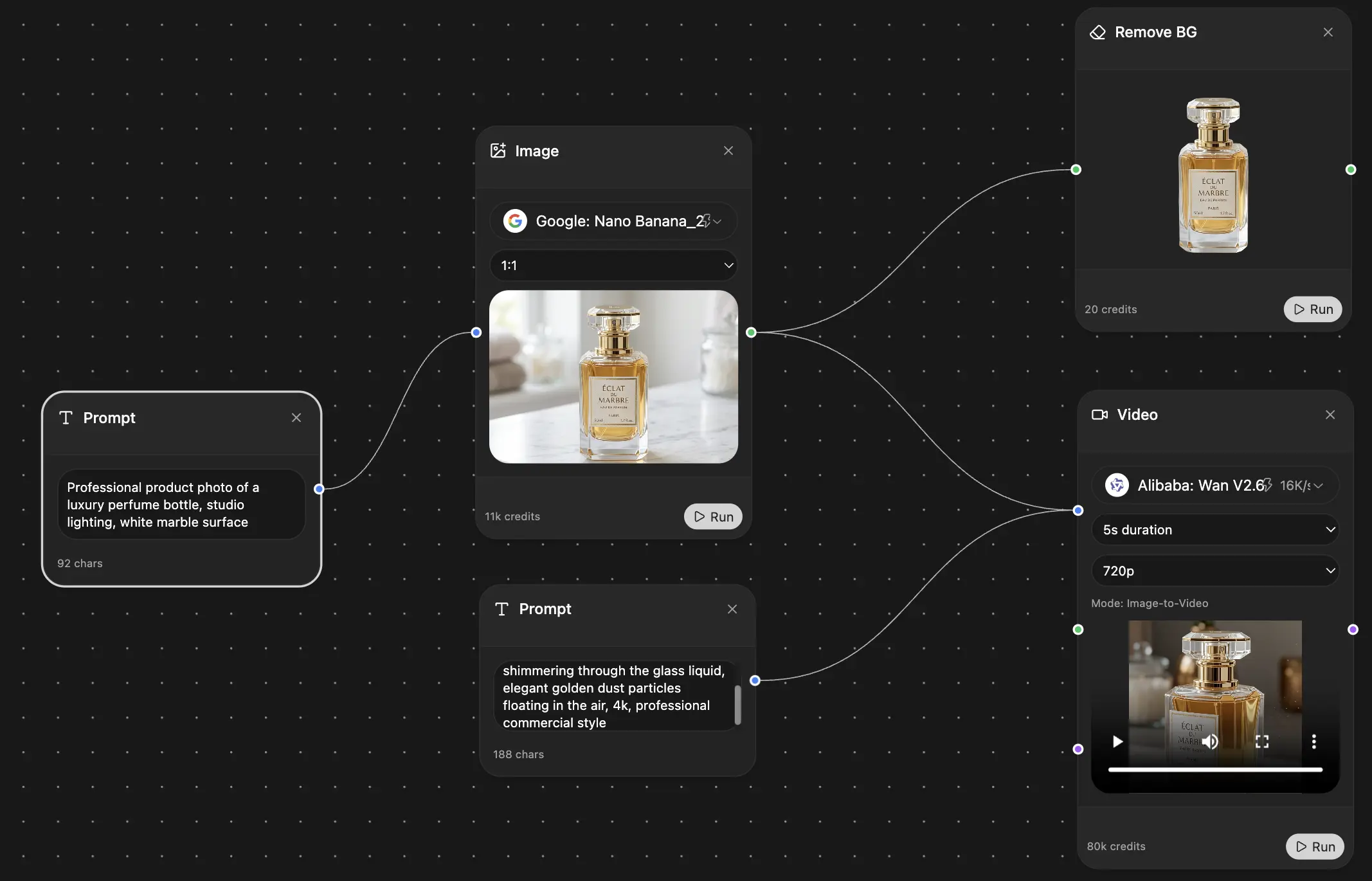

That’s where Zemith is useful in a practical sense. It gives you access to multiple models in one workspace, so you can compare drafts without bouncing across tabs or copying the same text into three different tools.

The value isn’t convenience alone. It’s editorial judgment. You stop asking, “Which model is best?” and start asking, “Which parts of each output are reliable?”

What to compare in each draft

Don’t compare translations like a casual reader. Compare them like an editor.

Use this checklist:

- Sentence function: Is the call to action still a call to action?

- Register: Does it sound appropriately formal for the audience?

- Terminology: Are key terms stable across the piece?

- Grammar pressure points: Cases, agreement, and article behavior.

- Natural flow: Would a native speaker choose this structure?

One useful mental trick is to ignore elegance in the first round. Start by eliminating the clearly risky version. Then blend the strongest parts of the remaining drafts.

Practical rule: If two models agree and the third goes rogue, don’t automatically trust the majority. Check whether the “odd” version is actually the only one that sounds native.
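If you want a rough signal before reading closely, you can measure how much each draft agrees with the others. This is a sketch using Python's standard `difflib`; the function names and the stand-in draft strings are assumptions for illustration, and word-level similarity says nothing about which draft is actually correct.

```python
from difflib import SequenceMatcher
from itertools import combinations


def mean_agreement(drafts):
    """Return each draft's average word-level similarity to the other drafts.

    `drafts` maps a model label to its translated text. Scores run from
    0.0 (nothing shared) to 1.0 (identical word sequences).
    """
    if len(drafts) < 2:
        raise ValueError("need at least two drafts to compare")
    scores = {name: [] for name in drafts}
    for (name_a, text_a), (name_b, text_b) in combinations(drafts.items(), 2):
        ratio = SequenceMatcher(None, text_a.split(), text_b.split()).ratio()
        scores[name_a].append(ratio)
        scores[name_b].append(ratio)
    return {name: sum(values) / len(values) for name, values in scores.items()}


def most_divergent(drafts):
    """Name the draft that agrees least with the rest, for a closer human look."""
    agreement = mean_agreement(drafts)
    return min(agreement, key=agreement.get)


# Stand-in strings, not real Albanian output.
drafts = {
    "model_a": "one two three four",
    "model_b": "one two three five",
    "model_c": "nine eight seven six",
}
review_first = most_divergent(drafts)
```

The divergent draft is the one to read first, not the one to discard. As the rule above says, the odd version is sometimes the only one that sounds native.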

This comparison-first approach also mirrors what serious language learners do when they need to see nuance from multiple angles. If you’ve ever looked at how people by comparing forms, repetition, and contextual examples, the principle is familiar. Multiple exposures reveal where literal output stops and real usage begins.

Phase three, human post-editing is where quality appears

This part is essential for professional content.

Human review isn’t only for correcting obvious machine errors. It’s where the draft becomes publishable. The editor adjusts tone, smooths unnatural constructions, resolves ambiguity, and makes sure the Albanian version sounds like it belongs in its real environment.

Verified research on literary and professional workflows is blunt about this. Human editing and proofreading can reduce implicit cultural loss from 60% in draft translations to under 20%, and ISO 17100-certified workflows for business texts can achieve over 99% accuracy with less than 1% post-editing error rates according to the Sapienza Editorial article on translation methodology.

That doesn’t mean every project needs a full certified process. It does mean the hybrid method is the closest thing to a sane default.

What the human reviewer should actually do

The post-editor shouldn’t waste time redoing the entire translation from scratch. That defeats the point.

Focus on these:

- Fix the social tone: This is where “technically correct” often still sounds wrong.

- Resolve ambiguity: English source text often hides ambiguity that Albanian forces you to resolve.

- Check layouts and expansion: Albanian can expand enough to affect buttons, banners, and print layouts.

- Verify recurring strings: Menus, onboarding steps, policy labels, and support macros should match.

- Read aloud: If it catches in the mouth, it usually catches in the reader’s head too.

A lightweight prompt pattern that works

You don’t need a baroque prompt with seventeen rules and a prayer.

Use something like this in your first pass:

Translate this English text into standard Albanian for a professional business audience. Keep the tone clear and natural, not literal. Preserve product names in English. Use consistent terminology for these terms: [insert glossary]. Flag any phrase where meaning is ambiguous.

Then ask each model one follow-up question after the draft:

- Which terms were difficult to render naturally?

- Where might a native speaker prefer a different structure?

- Which sentences may sound too literal?

That second round often reveals more than the original translation.
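If you run this often, it helps to keep the prompt and the follow-up questions in one place so every model gets identical wording. The sketch below simply wraps the text above in a small Python helper. The function name, the `term = equivalent` glossary format, and the trailing `Text:` label are my assumptions, not a required format for any model.

```python
PROMPT_TEMPLATE = (
    "Translate this English text into standard Albanian for a professional "
    "business audience. Keep the tone clear and natural, not literal. "
    "Preserve product names in English. "
    "Use consistent terminology for these terms: {glossary}. "
    "Flag any phrase where meaning is ambiguous.\n\n"
    "Text:\n{source_text}"
)

FOLLOW_UP_QUESTIONS = [
    "Which terms were difficult to render naturally?",
    "Where might a native speaker prefer a different structure?",
    "Which sentences may sound too literal?",
]


def build_prompt(source_text, glossary):
    """Fill the first-pass prompt with the source text and the approved glossary.

    `glossary` maps an English term to its approved Albanian equivalent.
    """
    entries = "; ".join(f"{english} = {albanian}" for english, albanian in glossary.items())
    return PROMPT_TEMPLATE.format(
        glossary=entries or "none",
        source_text=source_text.strip(),
    )
```

Sending the same `build_prompt` output to each model keeps the comparison fair, because differences in the drafts then come from the models rather than from the wording of the request.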

Avoiding Pitfalls and Nailing Quality Control

“Good enough” is expensive when the content affects trust.

If you’re translating blog posts for SEO experiments, maybe the cost of a rough sentence is modest. If you’re translating contracts, product claims, policy pages, investor updates, or B2B outreach, rough is not harmless. It signals carelessness.

That’s one reason search intent around legal reliability has sharpened. Searches for “English Albanian legal translation accuracy” increased by 300% in 2025, and the same verified source notes that low-resource conditions can cause AI to hallucinate formal terms in business and legal documents, which is why human verification matters for contracts and similar content, as described by .

The fastest quality check that catches a lot

Use a back-translation check, but use it carefully.

Take the Albanian draft and translate it back into English using a different model or tool. You’re not looking for elegant English. You’re looking for drift. If your original line meant “request a quote” and the back-translation comes back closer to “ask for information,” your call to action probably softened.

Then do a second check with an editor’s eye. Read the Albanian as if you’ve never seen the English. Does it sound written for Albanian readers, or translated at them?
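For a long document, a crude overlap score can help decide which back-translated lines deserve a human look first. The sketch below assumes you already have the original English and the back-translated English as pairs; the function names and the 0.5 threshold are arbitrary assumptions, and shared vocabulary is only a proxy for shared meaning.

```python
import re


def word_set(text):
    """Lowercase the text and return its distinct words."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def overlap_score(original, back_translated):
    """Jaccard overlap of the two word sets: 1.0 is identical, 0.0 is disjoint."""
    a, b = word_set(original), word_set(back_translated)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def flag_drift(pairs, threshold=0.5):
    """Return the (original, back_translated) pairs that score below the threshold."""
    return [
        (original, back)
        for original, back in pairs
        if overlap_score(original, back) < threshold
    ]


pairs = [
    ("Request a quote", "Ask for information"),
    ("Start your free trial", "Start your free trial"),
]
needs_review = flag_drift(pairs)
```

A faithful paraphrase can score low and a subtly wrong line can score high, so treat the flagged list as a reading order, not a verdict.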

Common English-to-Albanian Translation Traps

A simple editing checklist helps too. This guide on maps well to translation QA because many failures are editorial before they are linguistic.

The most dangerous translation is the one that looks professional to a non-native reviewer.

My default QC stack

When the content matters, I use this order:

- First pass: Multi-model comparison

- Second pass: Native-language edit for tone and grammar

- Third pass: Back-translation on high-risk lines

- Fourth pass: Layout and context review in the actual asset

That last step gets ignored too often. A sentence that works in a document may fail inside a button, chart label, app screen, or slide title.
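Part of that layout review can be pre-screened before anyone opens the design file. The sketch below flags strings whose Albanian version is longer than a known character limit, or noticeably longer than the English when no limit is known. The field names and the 1.3 expansion ratio are assumptions chosen for illustration, not established thresholds.

```python
def find_overflow(strings, max_expansion=1.3):
    """Return (key, translated_length, allowed_length) for strings that may not fit.

    Each item is a dict with 'key', 'source', 'translation', and optionally
    'max_chars' (a hard limit taken from the design, when one is known).
    """
    problems = []
    for item in strings:
        length = len(item["translation"])
        # Prefer the hard limit from the design; otherwise fall back to a
        # ratio of the English length. The 1.3 default is an assumption.
        allowed = item.get("max_chars")
        if allowed is None:
            allowed = int(len(item["source"]) * max_expansion)
        if length > allowed:
            problems.append((item["key"], length, allowed))
    return problems
```

Character count is only a stand-in for rendered width, so anything this flags still needs to be checked inside the real button, chart label, or slide.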

Translating More Than Just Words

A lot of English to Albanian translation work isn’t plain text pasted into a box. It’s PDFs, screenshots, tables, training decks, product images, transcripts, and reports with ugly formatting choices made by someone who has long since left the company.

That’s where the workflow gets more interesting.

When the file itself is part of the problem

A verified example from Albania makes the point nicely. In 2025, the Observatory for the Rights of Children and Youth in Albania issued a tender for translators to handle a 150-page statistical report from English to Albanian, including tables, charts, footnotes, and annexes, with coordination involving INSTAT and UNICEF Albania. The project shows how serious translation work often involves format complexity, not just language, as outlined in the .

That kind of job is very different from translating a paragraph on a website. Reports force you to preserve terminology, structure, numbering logic, and readability across dense material.

A practical project scenario

Say you’re localizing a campaign pack for an Albanian market launch.

The pack includes:

- a brochure in PDF format

- website hero copy

- three paid ad images

- subtitles from a webinar clip

- a pricing explainer with footnotes

If you handle each item in a separate app, quality drops because context disappears. The brochure uses one term, the subtitle uses another, and your ad image invents a third. Now everyone’s “aligned” in five different directions.
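That kind of drift is easy to detect for standalone recurring strings such as button labels and headings. The sketch below groups segments by their English source and reports any source that ended up with more than one Albanian rendering. The tuple layout, the function name, and the stand-in translations are assumptions for illustration.

```python
from collections import defaultdict


def find_inconsistent(segments):
    """Report English source strings that were translated more than one way.

    `segments` is an iterable of (asset, source, translation) tuples.
    Returns {normalized_source: {translation: [assets, ...]}}.
    """
    seen = defaultdict(lambda: defaultdict(list))
    for asset, source, translation in segments:
        # Normalize the English side so casing and stray spaces do not split groups.
        seen[source.strip().lower()][translation.strip()].append(asset)
    return {
        source: dict(variants)
        for source, variants in seen.items()
        if len(variants) > 1
    }


# Stand-in translations (T1, T2, T3), not real Albanian.
segments = [
    ("brochure", "Start free trial", "T1"),
    ("subtitles", "Start free trial", "T2"),
    ("ad_image", "Learn more", "T3"),
    ("website", "learn more ", "T3"),
]
conflicts = find_inconsistent(segments)
```

This works for strings that repeat verbatim. Terms inside running sentences legitimately change form with Albanian case endings, so those still need an editor rather than an exact-match check.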

That’s why I prefer a single working environment where you can extract, compare, revise, and keep project memory in one place. If part of your source is spoken content, this walkthrough on is useful before translation even starts.

Cultural adaptation isn't optional

The same sentence can be grammatically correct and still underperform because it carries the wrong cultural weight.

This becomes obvious with slogans, educational material, and anything idiomatic. One reason language people obsess over examples is that direct equivalence rarely survives contact with real use. I like how this article on shows the principle. Idioms don’t become usable when you memorize them in isolation. They become usable when you see them inside context. Albanian adaptation works the same way.

A translated phrase can be correct on paper and wrong in the campaign.

For image-heavy assets, the language review also needs to check text inside visuals. A headline embedded in a promo banner often needs a shorter, sharper Albanian rewrite than the website body copy. If you swap the text without adjustments, the design may hold, but the message may sag.

The real win is continuity

The all-in-one workflow matters less because it feels modern and more because it prevents drift.

You can keep a running glossary, compare model output, chat with a long document, extract spoken content, and carry the same terminology through every asset in the project. That’s what clients and internal teams notice. Not that AI was involved, but that the campaign sounds consistent everywhere.

And yes, this is the part where the old “just throw it into a translator” advice completely falls apart.

Your New Superpower Unlocked

The jump from shaky machine output to professional English to Albanian translation isn’t magic. It’s process.

Use AI to move faster. Use comparison to catch weak drafts before they spread. Use human review to handle the things models still miss, especially tone, grammar pressure points, and audience fit.

That’s the new standard. Not human versus AI. Human with AI, but with enough discipline to know where the handoff belongs.

If you’ve been burned by stiff wording, inconsistent terminology, or translations that looked fine until a native speaker winced, the fix is now pretty clear. Prepare the source. Compare multiple outputs. Edit for real-world use.

That’s not just a faster way to translate. It’s a safer way to publish.

Frequently Asked Questions

What’s the best AI model for English to Albanian translation

There usually isn’t one permanent winner.

That’s the wrong question for Albanian anyway. Different models handle different failure points better. One may produce smoother phrasing. Another may preserve terminology more faithfully. Another may be less reckless with formal language.

A more useful fact is this: modern free tools can handle up to 3,000 characters per request, while pro systems may aggregate output from more than 22 models and claim 85% of professional human quality through consensus-based translation, according to . That’s exactly why comparison matters. The power isn’t in a single answer. It’s in seeing alternatives.

Can I use free tools for business documents

For internal gist-reading, maybe. For anything client-facing, legal, contractual, or brand-sensitive, I wouldn’t rely on a raw free-tool output without review.

Business language depends on precision and register. Albanian is not forgiving when those choices are off. A phrase can be understandable and still be wrong for the setting.

How should I handle Gheg and Tosk

Start by deciding who the audience is and where the content will be used.

If you need broadly standard, formal, and publishable Albanian, keep the translation in standard written Albanian unless there’s a clear reason not to. If the content is community-based, regional, or creative, dialect decisions matter more and need a human reviewer who knows the target audience well.

Don’t ask the model to “sound local” unless you can validate the result. That’s how you end up with confident nonsense wearing a regional accent.

Is AI plus human review actually faster than doing it manually

Yes, in most practical content workflows.

The speed gain doesn’t come from skipping review. It comes from skipping the blank page, generating multiple candidate phrasings quickly, and reducing repetitive drafting work. Human effort moves from raw production to judgment, which is a much better use of expert time.

Should I back-translate every page

No. Use it selectively.

Back-translation is most helpful for high-risk lines like legal language, value propositions, onboarding steps, medical instructions, or anything where a subtle drift changes the meaning. It’s not necessary for every routine sentence.

Can this workflow replace a certified translator

No.

If you need a certified translation for legal, immigration, court, medical, or official administrative use, follow the formal requirements for that jurisdiction and hire the appropriate professional. AI can help prepare, compare, or clarify drafts, but it does not replace certified responsibility.

What kinds of content benefit most from the hybrid workflow

The biggest gains usually show up in:

- Marketing copy where tone matters

- Product UX text where space is limited

- Reports and PDFs with repeated terminology

- Support content that must stay consistent

- Business communication where formality matters

Anything repetitive, high-volume, or format-heavy benefits from AI assistance. Anything public or high-stakes benefits from human review.

If you want one workspace for comparing multiple AI models, organizing glossaries, working with documents, and refining translation drafts without tab chaos, try Zemith. It fits the hybrid workflow well because the drafting, comparison, and editing steps can happen in the same place.
