English to Khmer Translation: The Complete 2026 Guide

Master English to Khmer translation with our complete guide. Learn about AI tools like Zemith, common linguistic errors, and a pro quality-assurance workflow.

You've got English copy that needs to land in Khmer. Maybe it's a product page, a customer support article, a travel handout, or a clinic form. You paste it into a translator, get something back fast, and then a native speaker gives you that polite half-smile that usually means, “Well… that's technically words.”

That's the moment you realize English-to-Khmer translation isn't a copy-paste task. It's a workflow problem.

Khmer is one of those language pairs that punishes lazy inputs and rewards careful process. The good news is that modern AI tools are far better than the old “word salad generator” era. The less fun news is that they still need guidance. If you want Khmer output that sounds natural, preserves meaning, and doesn't accidentally turn your brand voice into a lost instruction manual, you need a better system.

Why Your Khmer Translation Might Sound... Funny

A common failure looks like this: the English original is casual, clear, and friendly, but the Khmer version comes out stiff, oddly literal, or slightly off in a way native readers notice immediately. The translation may not be completely wrong. It is wrong enough to feel strange.

That happens because English and Khmer don't line up neatly. Idioms wobble. Tone shifts. Assumptions hidden inside English sentences suddenly become visible once a machine tries to map them word by word.

Bad translations usually fail before the tool does

Most translation mistakes start upstream. Teams feed in sloppy source text, unclear referents, mixed tone, and marketing fluff with three meanings packed into one sentence. Then they blame the model.

Practical rule: If your English is vague, your Khmer will be confidently vague.

I've seen the same pattern across many language pairs. That's why advice written for other language pairs still applies here. Different language, same trap. Students and teams often translate surface words instead of meaning, structure, and intent.

If you want better output, start by understanding how each language represents meaning. That explains why some phrases transfer cleanly while others turn into accidental comedy.

Why this matters now

This isn't a niche issue anymore. Demand is clearly there: multiple English-to-Khmer translator apps on Google Play have reached 10,000+ downloads, which suggests strong adoption for both casual and professional use.

That makes sense. More teams now need Khmer for support content, ecommerce listings, onboarding material, tourism copy, and internal operations. More access is good. More bad translation at scale is not.

Here's the practical split:

- Machines give you speed, consistency, and a fast first draft.

- Humans catch nuance, tone, and cultural appropriateness.

- A real workflow combines both so you don't waste budget fixing preventable mistakes later.

The trick isn't choosing one camp like it's a sports rivalry. It's knowing which job each one should do.

Machine Speed vs Human Touch

Machine translation and human translation solve different problems. Treating them as interchangeable is where budgets disappear and deadlines get weird.

The easiest way to think about it is transportation. Machine translation is the jet. It gets you there fast and handles volume well. Human translation is the luxury sedan. It takes longer, but the ride is smoother, more controlled, and much better for precious cargo.

Where machine translation wins

If you need to understand a document quickly, localize large volumes of repetitive text, or create a draft for later review, machine translation is hard to beat. It's fast, cheap, and available on demand.

That's especially useful for:

- Support content: FAQs, help center drafts, routine responses

- Internal material: notes, summaries, rough comprehension

- Bulk catalog copy: product attributes, structured descriptions

- First-pass localization: getting from zero to editable draft fast

Where human translation still earns its keep

Human translators matter most when the cost of awkward phrasing is high. Brand campaigns, legal language, healthcare communication, investor material, and public-facing messaging all need judgment that machines still don't reliably provide on their own.

Humans are better at:

- Tone control: warm, formal, persuasive, respectful

- Cultural fit: not sounding imported

- Ambiguity resolution: figuring out what the English meant

- Risk management: catching context errors before publication

A machine can translate the sentence. A linguist translates the intention.

The practical middle ground

The strongest setup for many organizations is hybrid. Use AI for draft speed. Then review based on content risk, not ideology.


This is why the smartest localization teams don't ask, “AI or human?” They ask, “Which pieces deserve a human pass, and which pieces should the machine handle first?”
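If you want the "which pieces deserve a human pass" decision written down instead of renegotiated on every project, a lookup table is enough. The sketch below is a minimal Python illustration; the content-type keys and review levels are hypothetical labels drawn from the categories discussed above, not a standard taxonomy, and unknown types deliberately fall back to human review.

```python
# Hypothetical review policy: keys and levels are illustrative labels only.
REVIEW_LEVEL = {
    "internal_notes": "machine_only",
    "support_faq": "machine_plus_spot_check",
    "catalog_copy": "machine_plus_spot_check",
    "brand_campaign": "human_review",
    "legal": "human_review",
    "healthcare": "human_review",
    "investor": "human_review",
}


def review_level(content_type: str) -> str:
    """Return the review level for a content type; unknown types get a human pass."""
    return REVIEW_LEVEL.get(content_type, "human_review")


print(review_level("support_faq"))    # machine_plus_spot_check
print(review_level("press_release"))  # human_review (not in the table)
```

Defaulting to the most cautious option means a forgotten category costs a little review time rather than a published mistake.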

The Hidden Hurdles in Khmer Translation

Khmer doesn't trip up AI because it's obscure or exotic. It trips up AI because its structure asks different questions than English does. If your process assumes English logic carries over neatly, the output gets shaky fast.

Pronouns are a real trouble spot

One of the most important technical problems is pro-drop. Khmer often omits subjects when context already makes them clear. English usually doesn't. So the model tries to “help” by inserting pronouns that were never explicitly stated.

That's not a harmless guess. Research tied to the DoMY and Moses-era academic work notes that English-to-Khmer systems can show a 25-35% higher pronoun hallucination rate than high-resource pairs like English-French.

That means your system may invent a “he,” “she,” or “they” and change the meaning.
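One rough way to catch invented pronouns, assuming you back-translate the Khmer into English with a second tool, is to compare the pronouns in the source with those in the back-translation. This is a minimal Python sketch; the pronoun list and the `invented_pronouns` helper are hypothetical, and a hit is only a review signal, since a back-translation tool can add pronouns on its own.

```python
import re

# Third-person pronouns worth watching (an assumed starting list; extend as needed).
PRONOUNS = {"he", "she", "they", "him", "her", "them", "his", "hers", "their", "theirs"}


def pronouns_in(text: str) -> set[str]:
    """Return the watched pronouns that occur in an English text."""
    words = re.findall(r"[a-z']+", text.lower())
    return {w for w in words if w in PRONOUNS}


def invented_pronouns(source_en: str, back_translation_en: str) -> set[str]:
    """Pronouns present in the back-translation but absent from the source."""
    return pronouns_in(back_translation_en) - pronouns_in(source_en)


source = "Submit the form before Friday."
back = "He must submit the form before Friday."
print(invented_pronouns(source, back))  # {'he'}
```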

Script and segmentation add friction

Khmer also uses an abugida script. That matters in practice because tokenization, segmentation, and spacing behavior aren't as forgiving as English users expect. Small preprocessing problems can snowball into awkward output.

This is one reason low-context translators often struggle with Khmer. They process one sentence at a time with very little surrounding information. Tools built for broader multilingual handling, including systems discussed in articles about other language pairs, point to the same general lesson: language pairs that look manageable on the surface usually need context-aware handling underneath.
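To see why segmentation trips up naive pipelines: Khmer characters live in the Unicode Khmer block (U+1780 to U+17FF), and Khmer text usually doesn't put spaces between words, so whitespace tokenization treats a whole phrase as one token. The minimal Python sketch below shows that, plus a hypothetical `khmer_ratio` helper that can flag output segments which came back mostly in Latin script, often a sign of an untranslated line. It only checks the main Khmer block, not the separate Khmer Symbols block.

```python
# Unicode "Khmer" block: U+1780..U+17FF (letters, vowel signs, digits, punctuation).
KHMER_BLOCK = range(0x1780, 0x1800)


def is_khmer(ch: str) -> bool:
    return ord(ch) in KHMER_BLOCK


def khmer_ratio(text: str) -> float:
    """Share of Khmer-block code points among Khmer-block and alphabetic characters.

    Characters that are neither (spaces, ASCII digits, most punctuation) are ignored.
    """
    relevant = [ch for ch in text if is_khmer(ch) or ch.isalpha()]
    if not relevant:
        return 0.0
    return sum(is_khmer(ch) for ch in relevant) / len(relevant)


phrase = "ភាសាខ្មែរ"  # "Khmer language": two words, no space between them
print(len(phrase.split()))         # 1: whitespace splitting sees a single token
print(khmer_ratio(phrase))         # 1.0
print(khmer_ratio("Zemith ភាសា"))  # 0.4: 4 Khmer code points out of 10
```

A cutoff such as 0.5 for "probably untranslated" is an assumption to tune; product names kept in Latin script will pull the ratio down legitimately.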

The errors you'll see most often

If you review enough English-to-Khmer translation output, patterns emerge quickly:

- Missing implied subjects: the model inserts who did the action

- Over-literal phrasing: each word is translated, but the sentence feels unnatural

- Tone drift: a friendly line becomes robotic or overly formal

- Terminology wobble: the same term appears in different forms within one document

- Context collapse: a sentence translated correctly on its own reads oddly in a paragraph

Here's the useful mindset: don't treat these as random glitches. Treat them as predictable failure modes.

Khmer translation errors often look small when you inspect one sentence. They look expensive when you publish the whole page.

Once you know what usually breaks, you can build prompts, review steps, and glossaries around those weak points instead of fixing everything by hand afterward.

How to Get Amazing AI Translations with Zemith

A Cambodian customer lands on your pricing page, reads the Khmer version, and pauses at a sentence that technically says the right thing but sounds off. That hesitation is the whole problem. AI translation quality is rarely decided by the model alone. It is decided by the workflow wrapped around it.

Start by fixing the source, not the output

I have seen teams waste hours editing Khmer that was doomed by fuzzy English. Clean source text gives the model fewer chances to guess wrong.

Use a short pre-edit pass before you translate:

- Break up stacked ideas: one sentence, one point

- Replace vague references: swap “it,” “this,” and “they” for the actual noun

- Remove idioms: clever English usually becomes clumsy Khmer

- Name the audience: customers, patients, staff, students, travelers

- Set the tone: friendly, formal, neutral, instructional
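The "one sentence, one point" rule is easy to check mechanically before anything goes to the model. Here is a minimal Python sketch that splits on sentence-ending punctuation and flags sentences above a word limit; the 20-word default is an arbitrary assumption to tune for your content, and the naive splitter will misfire on abbreviations like "e.g.".

```python
import re


def long_sentences(text: str, max_words: int = 20) -> list[str]:
    """Return sentences longer than max_words, a rough signal of stacked ideas."""
    # Naive split after ., !, or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if len(s.split()) > max_words]


source = (
    "Sign in. "
    "Our new dashboard, which replaces the old reports page and now includes "
    "billing, lets admins invite teammates, set roles, and export data in one place."
)
for sentence in long_sentences(source):
    print("Consider splitting:", sentence)
```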

Prompt quality matters here. A general prompt-engineering guide covers the mechanics, and hands-on practice is the fastest way to learn to write clearer instructions.

Compare model output like an editor

One engine can give you a decent first draft. It can also give you a confident mistake.

The safer approach is to compare outputs, especially for customer-facing copy, legal text, product screens, and support content. If two models handle a sentence differently, that is a review signal. One may preserve meaning better. Another may sound more natural. A third may keep terminology steadier across the page. Good reviewers do not look for perfect agreement. They look for where the disagreement reveals risk.

Zemith is useful here because it gives access to multiple AI models in one workspace and includes a Document Assistant for file-based translation. That setup helps with Khmer because context often sits above the sentence level. Headings, repeated UI labels, and support instructions read better when the system can process the document as a whole.
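If you run the same segments through two models, a character-level similarity score is a cheap way to surface the disagreements worth a reviewer's time. Character-level matching suits Khmer because words usually aren't space-separated. This minimal Python sketch uses the standard library's `difflib.SequenceMatcher`; the `divergent_segments` name and the 0.6 threshold are assumptions, and a low score only says the outputs differ, not which one is better.

```python
from difflib import SequenceMatcher


def divergent_segments(output_a: list[str], output_b: list[str],
                       threshold: float = 0.6) -> list[int]:
    """Return indexes of segments where two models' translations diverge."""
    if len(output_a) != len(output_b):
        raise ValueError("both outputs must have the same number of segments")
    flagged = []
    for i, (a, b) in enumerate(zip(output_a, output_b)):
        # ratio() is 1.0 for identical strings and 0.0 when nothing matches.
        if SequenceMatcher(None, a, b).ratio() < threshold:
            flagged.append(i)
    return flagged


# Stand-in strings; in practice these are the two models' Khmer outputs, segment by segment.
print(divergent_segments(["abc", "xyz"], ["abc", "qrs"]))  # [1]
```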

Use a document workflow for anything important

For a short ad or a single email, sentence-level translation may be enough. For a landing page, policy, brochure, or onboarding flow, use a fuller process.

- Upload the full file instead of pasting isolated snippets.

- Ask the model to identify the domain, audience, and expected tone.

- Build a small glossary for product names, repeated terms, and words that should stay untranslated.

- Generate the first draft.

- Review high-risk parts first. Headlines, buttons, warnings, pricing language, and legal copy.

- Send only the risky passages through a second model for comparison.

- Make final edits for consistency across the whole document.

This saves time in the right place. You stop polishing low-risk lines and spend attention where bad phrasing costs trust or conversions.
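The glossary step is also easy to enforce after the draft comes back. The sketch below is a minimal Python illustration with a hypothetical `glossary_misses` helper: whenever an English glossary term appears in the source, it checks that the approved rendering appears somewhere in the Khmer output. Plain substring matching is crude but workable for product names and terms that stay untranslated; the glossary entries are examples only.

```python
def glossary_misses(source_en: str, target_km: str,
                    glossary: dict[str, str]) -> list[str]:
    """Return glossary terms used in the source whose approved form is missing from the target."""
    misses = []
    for term, approved in glossary.items():
        if term.lower() in source_en.lower() and approved not in target_km:
            misses.append(term)
    return misses


# Example glossary: the product name stays in Latin script; "Khmer" maps to ខ្មែរ.
GLOSSARY = {"Zemith": "Zemith", "Khmer": "ខ្មែរ"}

source = "Zemith now supports Khmer."
print(glossary_misses(source, "Zemith ខ្មែរ", GLOSSARY))  # []  (stand-in for a real draft)
print(glossary_misses(source, "ខ្មែរ", GLOSSARY))         # ['Zemith']
```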


Use prompts that leave less room for improvisation

Fancy prompts are entertaining. Clear prompts are useful.

Start with something like this:

Translate the following English text into natural Khmer for Cambodian customers. Keep the meaning accurate, preserve formatting, avoid literal idioms, and use consistent terminology for product names. If a phrase is ambiguous, choose the most natural customer-facing interpretation and flag any phrase that may need human review.

That prompt works because it sets audience, style, terminology, and fallback behavior. In practice, that beats a clever prompt stuffed with vague instructions. Boring is good. Boring ships.
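If you translate through an API or a script rather than a chat box, it helps to keep that prompt in one template so every request carries the same audience, style, and terminology rules. A minimal Python sketch, assuming you pass the result to whatever model client you use (none is shown here); `build_prompt` and its `keep_terms` parameter are hypothetical additions for glossary terms that should stay untranslated.

```python
def build_prompt(text: str, audience: str = "Cambodian customers",
                 keep_terms: tuple[str, ...] = ()) -> str:
    """Wrap source text in the translation instructions described above."""
    instructions = (
        f"Translate the following English text into natural Khmer for {audience}. "
        "Keep the meaning accurate, preserve formatting, avoid literal idioms, "
        "and use consistent terminology for product names. "
        "If a phrase is ambiguous, choose the most natural customer-facing "
        "interpretation and flag any phrase that may need human review."
    )
    if keep_terms:
        # Hypothetical extra rule for glossary terms that must stay as-is.
        instructions += " Leave these terms untranslated: " + ", ".join(keep_terms) + "."
    return f"{instructions}\n\nText:\n{text}"


print(build_prompt("Start your free trial today.", keep_terms=("Zemith",)))
```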

Spot the Difference: Good vs Bad Translations

The fastest way to improve your eye for quality is to compare weak output with revised output. You don't need to speak Khmer fluently to spot some warning signs. You need to know what kinds of English source text create trouble and what a better revision process changes.

What a bad first draft usually looks like

A bad machine translation often has three fingerprints: it's too literal, it carries over English structure awkwardly, and it doesn't respect the context of the sentence.


What changed between bad and good

The “good” version usually isn't magical. It's the result of a few disciplined fixes:

- Source text was simplified: fewer nested clauses

- Audience was specified: customer-facing, internal, medical, legal

- A second output was compared: not just accepted blindly

- A reviewer checked tone: especially around instructions and service language

Review habit: Don't ask “Is this translated?” Ask “Would a native reader say it this way?”

One practical trick is to flag English phrases before translation if they contain any of the following:

- Idioms: “on the same page,” “right away,” “touch base”

- Loose pronouns: “this,” “that,” “they”

- Marketing fluff: “world-class,” “game-changing”

- Compressed logic: one sentence doing four jobs at once

Those are the phrases most likely to produce Khmer output that's technically defensible and still not publishable.
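Flagging those phrases can start as a tiny pre-translation lint. This minimal Python sketch checks for the example idioms, fluff words, and loose pronouns listed above; the word lists are only a starting point to extend, loose-pronoun hits will include some harmless uses, and "compressed logic" isn't something a keyword match can catch reliably.

```python
import re

# Example lists taken from the checklist above; extend them for your own content.
IDIOMS = ["on the same page", "right away", "touch base"]
FLUFF = ["world-class", "game-changing"]
LOOSE_PRONOUNS = ["this", "that", "they"]


def risky_phrases(sentence: str) -> list[str]:
    """Return idioms, fluff, and loose pronouns found in an English sentence."""
    lowered = sentence.lower()
    hits = [p for p in IDIOMS + FLUFF if p in lowered]
    # Whole-word match so "they" doesn't fire on "theyre" or "this" on "thistle".
    hits += [p for p in LOOSE_PRONOUNS if re.search(rf"\b{p}\b", lowered)]
    return hits


print(risky_phrases("Let's touch base so we're on the same page."))
# ['on the same page', 'touch base']
print(risky_phrases("They said this is world-class."))
# ['world-class', 'this', 'they']
```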

A good English-to-Khmer translation workflow doesn't just generate text. It reduces the number of weird little repairs your reviewer has to make later.

Your Simple Translation Quality Checklist

Good translation work gets ruined in the last mile all the time. The draft is decent, but nobody checks term consistency, formatting, or whether the CTA still sounds like a CTA instead of a policy warning.

You don't need an enterprise QA department to avoid that. You need a short checklist and the discipline to use it every time.

The five checks that catch most problems

- Back-translate the risky bits: Run the Khmer back into English with a different tool. Don't do this for every sentence. Use it on headings, instructions, warnings, payment text, and anything legally or commercially sensitive.

- Check repeated terms: Product names, buttons, feature labels, and category names should stay consistent.

- Verify formatting: Lists, bold text, line breaks, and links often get messy in translation workflows.

- Review names and numbers: Names, dates, currencies, and version labels should remain intact unless you intentionally localize them.

- Get a native read if possible: Even a short review by a Khmer speaker can catch tone issues that machines miss.
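Names and numbers are the easiest part of this checklist to automate. The sketch below is a minimal Python check, assuming you compare one source segment with its translation at a time: it maps Khmer digits (០ to ៩, U+17E0 to U+17E9) to ASCII so a localized "២៥" still matches "25", then compares runs of digits. It doesn't understand formatting differences such as thousands separators or leading zeros, so treat a mismatch as a prompt to look, not a verdict.

```python
import re
from collections import Counter

# Khmer digits ០..៩ are U+17E0..U+17E9; map each to its ASCII counterpart.
KHMER_TO_ASCII = str.maketrans({chr(0x17E0 + i): str(i) for i in range(10)})


def digit_runs(text: str) -> Counter:
    """Count each run of digits, after converting Khmer digits to ASCII."""
    return Counter(re.findall(r"[0-9]+", text.translate(KHMER_TO_ASCII)))


def number_mismatches(source: str, target: str) -> dict[str, int]:
    """Map each differing digit run to (count in source - count in target)."""
    src, tgt = digit_runs(source), digit_runs(target)
    return {n: src[n] - tgt[n] for n in src.keys() | tgt.keys() if src[n] != tgt[n]}


print(number_mismatches("Pay 25 USD by 2026.", "... ២៥ ... ២០២៦ ..."))  # {}
print(number_mismatches("Pay 25 USD by 2026.", "... 52 ... 2026 ..."))  # '25' missing, '52' unexpected
```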

What non-Khmer speakers can still review

A lot more than they think.

You can still inspect whether formatting, links, names, numbers, and repeated product terms came through the translation intact.

Organized project handling also helps, particularly when you manage multilingual pages, app strings, and revisions across devices, because even adjacent tasks become part of the review loop.

Keep the checklist small enough to survive reality

The biggest QA mistake is building a beautiful checklist nobody follows. Keep it lean. If your review process takes forever, people skip it under deadline pressure.

The best QA checklist is the one your team actually uses on a Tuesday afternoon when three things are already on fire.

For larger projects, keep source files, translations, comments, and final approved versions in one place. Chaos isn't a language strategy.

Frequently Asked Translation Questions

Can I use AI alone for English-to-Khmer translation

Use AI alone for low-risk content, internal drafts, and speed-heavy tasks where a rough but readable translation is enough. For legal terms, medical instructions, contracts, regulated content, or brand copy that carries reputation risk, add human review. AI is fast. Accountability still belongs to your team.

What about medical or technical Khmer content

Specialized Khmer content breaks generic workflows fast. Medical, legal, and technical material depends on fixed terminology, consistent phrasing, and context that general models often miss.

Domain-specific systems tend to perform better than generic ones on this kind of material. The practical takeaway is simple: feed the model approved reference material, keep a glossary close by, and get a reviewer who actually knows the subject. If the translation can affect safety, compliance, or money, do not skip that last step.

Is offline translation good enough for Cambodia travel or field work

For street signs, menus, and basic directions, often yes.

For health guidance, permit instructions, or sensitive conversations, be careful. Offline tools are useful when the signal disappears, but they usually have less context and fewer quality controls. The safer play is to prepare reviewed Khmer text before anyone is standing in a clinic, checkpoint, or rural worksite trying to improvise.

What's the best way to translate a whole document

Translate at the document level, not one isolated sentence at a time. Context decides whether a term should sound formal, instructional, legal, or conversational, and Khmer makes those choices visible quickly.

A solid workflow looks like this: start with the full file, define the audience, add any approved terms, generate a draft, then review the sections where mistakes are expensive. That process is slower than pasting random chunks into a free tool, but much faster than fixing a confused final version after publication.
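When a file is too long to send in one request, you can still keep document-level context by chunking on paragraph boundaries and repeating the current heading at the top of each chunk. This minimal Python sketch assumes Markdown-style headings (blocks starting with "#") and blank-line paragraph breaks; `chunk_document` and `max_chars` are hypothetical, the budget is approximate (a repeated heading isn't counted), and a single paragraph longer than the budget is kept whole rather than split mid-sentence.

```python
def chunk_document(text: str, max_chars: int = 1500) -> list[str]:
    """Split text into paragraph-aligned chunks, each starting with its section heading."""
    chunks: list[str] = []
    current: list[str] = []
    heading = ""

    def flush() -> None:
        if current:
            # Repeat the heading if this chunk continues a section started earlier.
            prefix = [heading] if heading and current[0] != heading else []
            chunks.append("\n\n".join(prefix + current))
            current.clear()

    for block in (b.strip() for b in text.split("\n\n")):
        if not block:
            continue
        if block.startswith("#"):
            flush()
            heading = block
            current.append(block)
            continue
        too_long = len("\n\n".join(current + [block])) > max_chars
        # Never flush a chunk that holds only its heading.
        if current and current != [heading] and too_long:
            flush()
        current.append(block)
    flush()
    return chunks


doc = "# Pricing\n\nPara one.\n\nPara two.\n\n# Refunds\n\nPara three."
for chunk in chunk_document(doc, max_chars=20):
    print(repr(chunk))
```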

Do other language workflows help with Khmer projects

Yes. Good translation operations travel well across languages, even when the grammar does not. Briefs, glossaries, document context, review passes, and QA checks are not Khmer-specific habits. They are the stuff that keeps projects from drifting.

If you want a parallel example, translation guides for other language pairs show how the same process discipline carries across.

If you want one workspace for multi-model AI drafting, document-level translation help, and cleaner review workflows, take a look at Zemith. It's a practical option for teams that need more than a one-box translator and want translation, prompting, document context, and revision in one place.

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

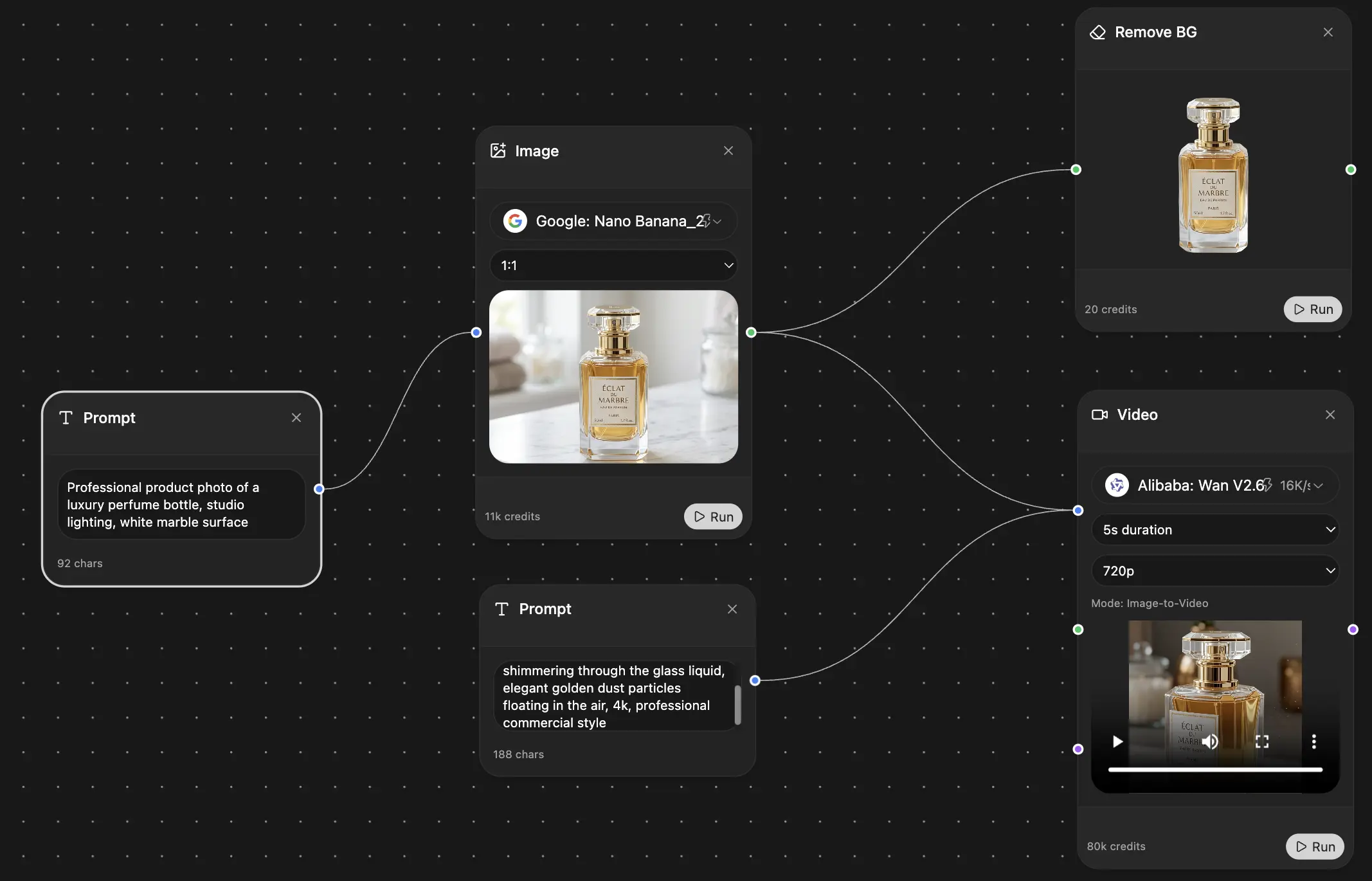

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers

What Our Users Say

Great Tool after 2 months usage

"I love the way multiple tools they integrated in one platform. Going in the right direction."

— simplyzubair

Best in Kind!

"The quality of data and sheer speed of responses is outstanding. I use this app every day."

— barefootmedicine

Simply awesome

"The credit system is fair, models are perfect, and the discord is very responsive. Quite awesome."

— MarianZ

Great for Document Analysis

"Just works. Simple to use and great for working with documents. Money well spent."

— yerch82

Great AI site with accessible LLMs

"The organization of features is better than all the other sites — even better than ChatGPT."

— sumore

Excellent Tool

"It lives up to the all-in-one claim. All the necessary functions with a well-designed, easy UI."

— AlphaLeaf

Well-rounded platform with solid LLMs

"The team clearly puts their heart and soul into this platform. Really solid extra functionality."

— SlothMachine

Best AI tool I've ever used

"Updates made almost daily, feedback is incredibly fast. Just look at the changelogs — consistency."

— reu0691