10 Crucial Exploratory Data Analysis Methods You Need to Master in 2026

Discover 10 powerful exploratory data analysis methods with actionable insights, code snippets, and real-world examples to elevate your data skills.

Sitting on a mountain of data can feel like having a garage full of IKEA boxes. You know there’s something amazing inside, but where do you even start? Jump straight to building a fancy AI model, and you might end up with a wobbly bookshelf that collapses at the first sign of a heavy book. This is where Exploratory Data Analysis (EDA) comes in.

Think of it as laying out all the screws, dowels, and wooden panels before you even glance at the instructions. It’s the art of getting to know your data, asking it questions, and letting it tell you its story. We’re talking about finding the hidden patterns, spotting the weirdos (outliers), and understanding the relationships that will make or break your project. Before diving into specific methods, it's crucial to understand the overall process of . This preliminary step ensures you're not just collecting data, but preparing it for real-world application.

In this guide, we’ll walk through 10 essential exploratory data analysis methods, from foundational stats to advanced visualizations. We'll cover practical techniques for:

- Descriptive Statistics and Summary Analysis

- Data Visualization and Distribution Analysis

- Correlation and Relationship Analysis

- Missing Data and Outlier Handling

- Dimensionality Reduction and Feature Selection

- Clustering and Group Analysis

We’ll skip the robotic jargon and give you actionable insights and code examples so you can go from data-dazed to data-dazzling. By the end, you'll be able to confidently explore any dataset and, dare we say, even enjoy the process. Let's get started.

1. Descriptive Statistics and Summary Analysis

Before you dive headfirst into complex modeling or fancy visualizations, you need to get the basic stats on your data. Think of descriptive statistics as the "get to know you" phase of your data relationship. This foundational step in exploratory data analysis methods involves calculating simple numerical summaries to understand the core characteristics of your dataset. It’s like getting the character sheet for your data before starting the D&D campaign.

These summaries typically include measures of central tendency (mean, median, mode) and measures of variability or spread (standard deviation, variance, range, and quartiles). By calculating these numbers, you’re answering fundamental questions: What's a typical value? How spread out are the data points? Are there any weird, super-high or super-low values throwing things off?

When and Why to Use It

This is your non-negotiable first step. Always. Whether you're a researcher staring at a massive dataset before a deep dive or a content creator analyzing word counts, these summaries provide the initial context. For example, a content team could use descriptive stats to find the average readability score of their top-performing articles, helping them create more effective content. This is one of the key exploratory data analysis steps for beginners because it's so foundational.

Key Insight: Don't skip this step! Jumping straight to complex analysis without understanding the basics is like trying to build a house without checking the foundation. You might get something up, but it’s likely to fall apart.

Actionable Tips for Success

To get the most out of your summary analysis, keep a few things in mind:

- Mind the Gaps: Always check for and handle missing values before calculating statistics. A mean can be misleading if half your data is missing.

- Embrace Robustness: If you suspect your data has outliers (like a few blog posts with a million views while most have a thousand), use robust statistics like the median and Interquartile Range (IQR). They are less sensitive to extreme values than the mean and standard deviation.

- Compare and Contrast: Don't just look at the mean. Compare it to the median. If they are far apart, it’s a big clue that your data is skewed.

- Summarize Your Summaries: For quick insights, a tool like Zemith can be a lifesaver. Instead of manually combing through reports, you can upload a document and ask its AI assistant to pull key metrics and summaries, giving you a head start on your analysis.
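Here is a minimal sketch of those tips in pandas. The page-view numbers are invented for illustration, and the variable names are our own; treat it as a starting point rather than a recipe.

```python
import pandas as pd

# Hypothetical page-view counts: most posts get ~1,000 views, one went viral.
views = pd.Series([900, 950, 1000, 1050, 1100, 1200, None, 1_000_000], name="views")

# Mind the gaps: count missing values before summarizing.
print("missing values:", views.isna().sum())
clean = views.dropna()

# describe() returns count, mean, std, min, quartiles, and max in one call.
summary = clean.describe()
iqr = summary["75%"] - summary["25%"]
print(summary)

# Robust statistics: the median and IQR barely notice the viral post.
print("median:", clean.median(), "IQR:", iqr)

# Compare and contrast: a mean far above the median hints at right skew.
print("mean / median ratio:", clean.mean() / clean.median())
```

In this toy data the single viral post drags the mean more than a hundred times above the median, which is exactly the kind of gap the "Compare and Contrast" tip is asking you to look for.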

2. Data Visualization and Distribution Analysis

If descriptive statistics are the "get to know you" phase, then data visualization is the first date. This is where you actually see what your data looks like, moving beyond abstract numbers to concrete shapes and patterns. This critical step in exploratory data analysis methods involves turning raw data into visual forms like histograms, box plots, and scatter plots. It lets you spot trends, outliers, and relationships that are nearly impossible to find in a spreadsheet.

These visual representations help answer key questions: Is my data bell-shaped, skewed, or totally random? Do two variables move together? Are there obvious clusters or groups? This visual approach makes insights immediately accessible, even for people who don't speak "data."

When and Why to Use It

Right after you’ve got your summary stats, you should start plotting. Data visualization is essential for understanding the distribution of your variables. For instance, a marketing team can use scatter plots to see if there's a relationship between ad spend and website traffic, while a developer might use a histogram to analyze API response times and identify performance bottlenecks. It’s also your best tool for communicating findings to others. A good chart is worth a thousand numbers.

Key Insight: A picture truly is worth a thousand data points. Never trust your stats alone; always visualize your data to confirm what the numbers are telling you (or hiding from you).

Actionable Tips for Success

To make your visualizations effective and not just pretty pictures, follow these tips:

- Start Simple: Begin with basic plots like histograms and bar charts to understand individual variables before moving to more complex multi-variable plots.

- Label Everything: Your future self (and your colleagues) will thank you. Always add clear titles, axis labels, and legends. A chart without labels is just modern art.

- Use Color with Purpose: Don’t just make it a rainbow. Use color strategically to highlight specific categories, distinguish groups, or draw attention to key findings.

- Generate Polished Reports: For presenting your findings, you need clean, professional-looking visuals. Instead of battling with Matplotlib's settings, you can use a tool like Zemith to generate publication-quality charts for reports or dashboards, making your insights shine.
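As a rough sketch of "start simple and label everything," the snippet below draws a histogram and a box plot of one variable with Matplotlib. The response times are simulated, and the seed, bin count, and labels are assumptions chosen for the example.

```python
import matplotlib
matplotlib.use("Agg")  # render off-screen; drop this line in a notebook
import matplotlib.pyplot as plt
import numpy as np

# Hypothetical API response times in milliseconds (right-skewed on purpose).
rng = np.random.default_rng(seed=0)
response_ms = rng.lognormal(mean=5.0, sigma=0.5, size=500)

fig, (ax_hist, ax_box) = plt.subplots(1, 2, figsize=(10, 4))

# Histogram: shows the overall shape of the distribution.
ax_hist.hist(response_ms, bins=30)
ax_hist.set_title("Distribution of API response times")
ax_hist.set_xlabel("Response time (ms)")
ax_hist.set_ylabel("Number of requests")

# Box plot: shows the median, quartiles, and points beyond the whiskers.
ax_box.boxplot(response_ms)
ax_box.set_title("Box plot of API response times")
ax_box.set_ylabel("Response time (ms)")

fig.tight_layout()
```

To look at the result, call `fig.savefig("response_times.png")` or, in an interactive session, `plt.show()`.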

3. Correlation and Relationship Analysis

Once you have a grip on your individual variables, the next logical step in exploratory data analysis methods is to see how they interact with each other. Correlation analysis is like playing matchmaker with your data columns, figuring out which ones have a relationship. It measures the strength and direction of the connection between two variables, telling you if they tend to move together, move in opposite directions, or don't care about each other at all.

This technique uses correlation coefficients, like the famous Pearson or the non-parametric Spearman and Kendall, to put a number on that relationship. A coefficient of +1 means a perfect positive correlation (as one goes up, the other goes up), -1 means a perfect negative correlation (as one goes up, the other goes down), and 0 means no linear relationship for Pearson, or no monotonic relationship for the rank-based coefficients. A value near 0 does not rule out a curved or otherwise non-linear connection. It’s a powerful way to spot patterns and decide which variables might be important for a deeper look.

When and Why to Use It

Correlation analysis is your go-to whenever you're working with more than a couple of variables and want to understand the bigger picture. For instance, a marketing team could analyze the correlation between ad spend on different platforms and website traffic to see what's actually working. For developers, it's useful for finding if complex code (high cyclomatic complexity) is correlated with a higher frequency of bugs. To further investigate the interplay between different datasets, learning how to can provide valuable insights into relationships and dependencies.

Key Insight: Correlation does not equal causation! Just because two variables are correlated doesn't mean one causes the other. My go-to example? Ice cream sales and shark attacks are highly correlated. Does eating ice cream make you more delicious to sharks? No! A third variable (summer heat) causes both.

Actionable Tips for Success

To get the most out of your relationship analysis, keep these points in mind:

- Visualize First: Always create a scatter plot of your variables before you run a correlation test. This helps you spot non-linear relationships that a simple correlation coefficient might miss.

- Pick the Right Tool: If your data isn't normally distributed or contains outliers, use Spearman's rank correlation instead of Pearson's. It's based on ranks and is less sensitive to extreme values.

- Context is King: A correlation of 0.7 might be huge in social sciences but weak in physics. Always consider the context of your domain when interpreting the strength of a relationship.

- Fact-Check Your Findings: After you find a strong correlation, use a tool like Zemith’s Deep Research feature to see if similar relationships have been documented in academic literature. This can help validate your findings or reveal potential confounding factors. For more on structuring these comparisons, check out our guide on .
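To make the Pearson versus Spearman tip concrete, here is a small sketch with made-up data. The first dataset is perfectly monotonic but curved; the second is a U-shape, where a coefficient near zero hides an obvious relationship. Column names are hypothetical.

```python
import numpy as np
import pandas as pd

# Hypothetical data: traffic grows exponentially with ad spend,
# so the relationship is perfectly monotonic but not linear.
ad_spend = np.arange(1, 11, dtype=float)
df = pd.DataFrame({"ad_spend": ad_spend, "traffic": np.exp(ad_spend)})

pearson = df.corr(method="pearson").loc["ad_spend", "traffic"]
spearman = df.corr(method="spearman").loc["ad_spend", "traffic"]

print(f"Pearson:  {pearson:.3f}")   # noticeably below 1: not a straight line
print(f"Spearman: {spearman:.3f}")  # the ranks agree perfectly

# A U-shaped relationship: y depends entirely on x, yet Pearson is ~0.
x = np.arange(-5, 6, dtype=float)
u_shape = pd.DataFrame({"x": x, "y": x**2})
u_pearson = u_shape["x"].corr(u_shape["y"])
print(f"U-shape Pearson: {u_pearson:.3f}")
```

The second case is why a scatter plot comes first: the coefficient alone would suggest the two columns have nothing to do with each other.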

4. Missing Data Analysis and Imputation Assessment

Few datasets arrive perfectly complete. Most have gaps, holes, and pesky "NaNs" scattered throughout. Missing data analysis is the detective work you do to figure out why those values are gone and what to do about them. Ignoring them is like ignoring a big hole in your boat; you might stay afloat for a bit, but you're probably going to sink.

This crucial part of exploratory data analysis methods involves understanding the pattern and mechanism behind the missingness. Is the data Missing Completely At Random (MCAR), where the absence is just bad luck? Is it Missing At Random (MAR), where the missingness is related to another variable? Or is it Missing Not At Random (MNAR), the trickiest kind, where the reason it's missing is related to the value itself? The answer dictates your next move, preventing biased results and bad decisions.

When and Why to Use It

You should inspect for missing data right after your initial descriptive stats review. This step is essential for anyone dealing with real-world information, from data scientists cleaning survey responses to content creators analyzing incomplete document metadata. For instance, if you're analyzing user engagement and notice missing "time on page" data for specific mobile devices, that's a clue there might be a technical bug, not just random chance.

Key Insight: The "why" behind missing data is often more important than the "what." Documenting your assumptions about why data is missing is just as critical as the imputation method you choose.

Actionable Tips for Success

To handle missing values like a pro, keep these strategies in your back pocket:

- Visualize the Voids: Before you fill anything in, use a missing data heatmap (libraries like missingno in Python are great for this) to see if there are patterns. Do entire rows or columns disappear together?

- Don't Default to Deletion: Listwise deletion (dropping any row with a missing value) is tempting but dangerous. Only consider it if a tiny fraction of your data is missing (e.g., under 5%) and it's completely random.

- Impute with Intent: Simple mean or median imputation can work for MCAR data, but for more complex scenarios (like MAR), consider multiple imputation (using tools like R's mice package) to create several plausible filled-in datasets.

- Compare Before and After: After imputing data, re-run your summary statistics and simple visualizations. Compare the results with your original, non-imputed data to check if you've unintentionally distorted your dataset's original story.
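The checks above can be sketched with plain pandas. This example uses an invented engagement log in which "time on page" goes missing only on mobile, then compares the spread before and after a simple median imputation. It does not use missingno or mice; the column names are assumptions.

```python
import numpy as np
import pandas as pd

# Hypothetical engagement log: time_on_page is missing only for one device type.
df = pd.DataFrame({
    "device": ["desktop", "desktop", "desktop", "desktop",
               "mobile", "mobile", "mobile", "mobile"],
    "time_on_page": [120.0, 95.0, 110.0, 130.0, 40.0, np.nan, np.nan, np.nan],
})

# Share of missing values per column.
print(df.isna().mean())

# Missingness by group: a strong imbalance suggests the gaps are not random.
missing_by_device = df["time_on_page"].isna().groupby(df["device"]).mean()
print(missing_by_device)

# Median imputation, then compare the spread before and after.
imputed = df["time_on_page"].fillna(df["time_on_page"].median())
print("std before:", df["time_on_page"].std())
print("std after: ", imputed.std())
```

Here every gap sits in the mobile rows, and filling them with the overall median both shrinks the standard deviation and assigns desktop-like values to mobile sessions. That is the kind of distortion the before-and-after comparison is meant to catch.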

5. Outlier Detection and Anomaly Identification

Every dataset has its rebels, those data points that just don't play by the rules. Outlier detection is the process of finding these anomalies: observations that are so different from other points they raise suspicions. These aren't just extreme values; they are points that deviate significantly from the expected pattern, and identifying them is a core part of any good exploratory data analysis workflow.

Think of it as being a detective for your data. You're looking for clues that don't add up, like a single user spending 10,000 hours on your app in one day. This could be a data entry error, a system glitch, or a genuinely bizarre (but real) event. Methods for finding these oddballs range from statistical approaches like Z-scores and the Interquartile Range (IQR) method to more advanced machine learning techniques.

When and Why to Use It

You should look for outliers right after getting your initial descriptive stats. They can seriously skew your summary statistics (like the mean) and lead to incorrect assumptions and flawed models. For example, a content creator might spot an article with exceptionally low engagement. This could signal a broken link, a poorly chosen topic, or a technical issue preventing views, prompting an investigation rather than assuming the content failed. Similarly, developers can use anomaly detection to spot unusual performance metrics that might indicate a bug or a security threat.

Key Insight: Don't just delete outliers! An outlier might be the most important data point you have. It could be a data entry mistake, or it could be your million-dollar customer. Investigate before you eliminate.

Actionable Tips for Success

To handle outliers like a pro, follow these guidelines:

- Be a Detective, Not a Bouncer: Never automatically remove an outlier. Use your domain knowledge to figure out why it’s an outlier. Is it a typo, a measurement error, or a legitimate, rare event? Document every decision you make.

- Run It Both Ways: To understand an outlier's impact, perform your analysis twice: once with the outliers and once without. If your conclusions change dramatically, it shows how sensitive your findings are to these extreme values.

- Pick the Right Tool: Simple methods like the IQR rule are great for single variables. For datasets with many features, use multivariate methods like Mahalanobis distance or machine learning models (e.g., Isolation Forest) that can spot weird combinations of values.

- Automate the First Pass: When dealing with large documents or datasets, finding anomalies manually is a slog. Tools like Zemith's AI-powered analysis can quickly surface unusual patterns or data points from your documents, giving you a starting point for your investigation.
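Below is a small sketch of the two single-variable rules mentioned earlier, the IQR rule and the Z-score, followed by the "run it both ways" check. The session lengths are invented, and the 1.5 × IQR and 3-sigma cutoffs are the conventional defaults, not values taken from this article.

```python
import pandas as pd

# Hypothetical daily session lengths in minutes; 600 is the suspicious one.
minutes = pd.Series([12, 15, 14, 10, 13, 16, 11, 14, 15, 600])

# IQR rule: flag points more than 1.5 * IQR outside the quartiles.
q1, q3 = minutes.quantile([0.25, 0.75])
iqr = q3 - q1
iqr_outliers = minutes[(minutes < q1 - 1.5 * iqr) | (minutes > q3 + 1.5 * iqr)]

# Z-score rule: flag points more than 3 standard deviations from the mean.
z = (minutes - minutes.mean()) / minutes.std()
z_outliers = minutes[z.abs() > 3]

print("IQR rule flags:", iqr_outliers.tolist())
print("Z-score rule flags:", z_outliers.tolist())

# Run it both ways: how much does the mean move without the flagged points?
print("mean with outliers:", minutes.mean())
print("mean without:", minutes.drop(iqr_outliers.index).mean())
```

On a sample this small the Z-score rule flags nothing: the extreme value inflates the mean and standard deviation so much that it slips under the 3-sigma cutoff. The IQR rule, built on quartiles, still catches it, which is one more reason to prefer robust statistics when outliers are suspected.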

6. Dimensionality Reduction and Feature Selection

Got a dataset with more columns than a Roman temple? When your data is wide, with dozens or even thousands of variables, it's easy to get lost. Dimensionality reduction is the art of simplifying this complexity by reducing the number of variables (dimensions) while holding on to the most important information. It's like turning a massive, unwieldy epic novel into a tight, compelling short story; you keep the plot, just lose the fluff.

This powerful group of exploratory data analysis methods includes techniques like Principal Component Analysis (PCA), which builds a smaller set of new variables (components) as linear combinations of the original ones, and t-SNE, which maps the data into a low-dimensional embedding that is mostly useful for visualization. It also covers feature selection, where you strategically pick the most impactful original variables and discard the rest. The goal is to make your data easier to visualize, speed up model training, and reduce noise that could lead to overfitting.

When and Why to Use It

This is your go-to move when you're facing "the curse of dimensionality." This happens in fields like genomics, where researchers might have data on thousands of genes for each sample, or in marketing, where customer profiles have hundreds of attributes. For example, a content team could use it to identify the handful of features (like keyword density, sentence length, and image count) that best predict article engagement, ignoring dozens of less important metrics.

Key Insight: Less is often more. Reducing dimensions doesn't just make your computer run faster; it can actually make your model smarter by forcing it to focus on signals instead of noise.

Actionable Tips for Success

To successfully slim down your dataset without losing its essence, follow these tips:

- Choose Your Weapon Wisely: Don't just pick a method at random. Use PCA for datasets where variables have linear relationships. For spotting complex, non-linear clusters (like in customer segmentation), t-SNE is often a better choice.

- Don't Fly Blind: After reducing dimensions, validate that you haven't thrown the baby out with the bathwater. For PCA, check the "explained variance ratio" to see how much information your new components retain.

- Bring in a Human: Feature selection works best when you combine statistical methods with domain expertise. An algorithm might say a feature is unimportant, but a subject matter expert might know it’s critical.

- Automate the Boring Stuff: Manually sifting through hundreds of features is a recipe for a headache. For content-related tasks, like finding key themes in a pile of documents, Zemith can help summarize and pinpoint important topics, giving you a strong starting point for feature selection.
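To show what the "explained variance ratio" check looks like, here is a from-scratch PCA sketch using only NumPy's singular value decomposition. The article metrics are simulated, and this is an illustration of the idea rather than a production implementation; in practice, scikit-learn's PCA class reports the same quantity as `explained_variance_ratio_`.

```python
import numpy as np

# Hypothetical article metrics: word count, reading time, and image count.
# Reading time is almost a rescaled word count, so two columns are redundant.
rng = np.random.default_rng(seed=0)
word_count = rng.normal(1200, 300, size=200)
reading_min = word_count / 200 + rng.normal(0, 0.1, size=200)
image_count = rng.normal(5, 2, size=200)
X = np.column_stack([word_count, reading_min, image_count])

# Standardize so no column dominates just because of its units.
X_std = (X - X.mean(axis=0)) / X.std(axis=0)

# PCA via singular value decomposition of the standardized data.
_, singular_values, components = np.linalg.svd(X_std, full_matrices=False)
explained_variance_ratio = singular_values**2 / np.sum(singular_values**2)

# Project onto the first two principal components.
X_reduced = X_std @ components[:2].T

print("explained variance ratio:", explained_variance_ratio.round(3))
print("reduced shape:", X_reduced.shape)
```

Because two of the three columns carry nearly the same information, the first two components retain almost all of the variance, so dropping the third loses very little.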

7. Categorical Data and Frequency Analysis

Not all data comes in neat numerical packages. Sometimes, the most valuable information is found in categories, groups, or classifications. This is where categorical data analysis, one of the most practical exploratory data analysis methods, comes into play. It’s all about counting things, seeing how often they appear, and figuring out if certain categories hang out together more than others.

This process involves using tools like frequency tables to get a simple headcount of each category. From there, you can use visualizations like bar charts or more advanced techniques like chi-square tests to check for relationships. It answers critical questions like: Which product category is our top seller? Are users from a specific country more likely to report a certain type of bug? It's the secret to understanding the 'who' and 'what' in your dataset.

When and Why to Use It

Anytime you're dealing with non-numeric data, this is your go-to method. It’s essential for making sense of survey responses, user demographics, or any data that has been sorted into groups. For instance, a content team might analyze the distribution of document tags to see which topics are most popular, helping them focus their content strategy. A developer could examine the frequency of different error types logged in an application to prioritize bug fixes for the most common issues.

Key Insight: Ignoring categorical variables is like reading a book but skipping all the character names. You might get the general plot, but you'll miss the relationships and dynamics that truly drive the story.

Actionable Tips for Success

To get the most out of your categorical analysis, keep these tips in your back pocket:

- Count First, Analyze Later: Always start with a simple frequency table (.value_counts() in pandas is your best friend). This gives you an immediate sense of which categories dominate and which are rare.

- Visualize for Clarity: Use bar charts for simple frequency counts or mosaic plots to visualize the relationship between two categorical variables. Seeing the proportions can reveal patterns that numbers alone might hide.

- Combine the Loners: If you have many categories with very low counts (e.g., less than 5% of the total), consider grouping them into an "Other" category. This can clean up your analysis and make patterns in larger groups more visible.

- Respect the Order: If your categories have a natural order (like "low," "medium," "high"), treat them as ordinal variables. This context is important and can unlock different types of analysis.
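A quick sketch of the counting workflow in pandas follows. The bug-report data is made up, and the 10% cutoff for the "Other" bucket is an assumption picked to suit this tiny example; the right threshold depends on your data.

```python
import pandas as pd

# Hypothetical bug reports tagged by error type and platform.
df = pd.DataFrame({
    "error_type": ["timeout"] * 10 + ["auth"] * 6 + ["null_ref"] * 3 + ["encoding"],
    "platform": ["web", "mobile"] * 10,
})

# Frequency table, as counts and as shares of the total.
counts = df["error_type"].value_counts()
shares = df["error_type"].value_counts(normalize=True)
print(counts)

# Group categories below a 10% share into "Other" (the cutoff is a judgment call).
rare = shares[shares < 0.10].index
df["error_grouped"] = df["error_type"].where(~df["error_type"].isin(rare), "Other")
print(df["error_grouped"].value_counts())

# Cross-tabulate two categorical variables to see how they co-occur.
table = pd.crosstab(df["error_grouped"], df["platform"])
print(table)
```

The cross-tabulation is also the input you would hand to a chi-square test if you wanted to check whether error type and platform are related.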

8. Time Series and Sequential Pattern Analysis

Not all data points are created equal, especially when time is involved. Time series analysis is one of the key exploratory data analysis methods for examining data collected sequentially over time. It's about finding the story your data tells as the clock ticks, identifying patterns like trends, seasonality, and unusual spikes. Think of it as watching a movie instead of looking at a single snapshot, allowing you to understand how a variable evolves and what drives its changes.

Using techniques like autocorrelation, seasonal decomposition, and trend extraction, you can answer questions like: Is our website traffic growing? Do sales spike every Black Friday? Did that server update actually improve performance, or was that just a random fluctuation? This analysis is crucial for forecasting, monitoring system health, and understanding the temporal dynamics that affect your data.

When and Why to Use It

Anytime your data has a timestamp, you should consider this approach. It’s a must for marketers examining seasonal sales patterns or content creators tracking engagement trends after publishing. For example, a developer monitoring application performance can use it to spot a memory leak that slowly degrades performance over days. A researcher could track participant recruitment over a study's duration to see if outreach efforts are paying off.

Key Insight: Ignoring the time component in your data is like reading a book with the pages shuffled. You might understand individual sentences, but you'll completely miss the plot.

Actionable Tips for Success

To get the most out of your time-based analysis, keep these points in mind:

- Plot It First, Always: Before you run any fancy models, just plot your data against time. A simple line chart is your best friend and can instantly reveal trends, seasonality, or weird anomalies that need investigation.

- Handle Your Calendar: Be aware of holidays, special events, and seasonal changes. That dip in B2B engagement during the last week of December probably isn't a bug; it's a feature of the holiday season.

- Check for Stationarity: Many time series models assume the data's statistical properties (like mean and variance) are constant over time. You often need to transform non-stationary data, for instance by "differencing" (calculating the change from one point to the next), to stabilize it.

- Automate Your Trend Reports: If you're constantly tracking trends in reports or academic papers, use a tool like Zemith to automate the summary process. You can upload a collection of market reports and ask the AI to synthesize the key trends over the last quarter, saving you hours of manual reading.
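Here is a small sketch of trend extraction, differencing, and autocorrelation with pandas. The traffic series is synthetic, a straight-line trend plus a clean weekly cycle, so the numbers behave more neatly than real data would.

```python
import numpy as np
import pandas as pd

# Hypothetical daily traffic: a linear upward trend plus a weekly cycle.
days = pd.date_range("2025-01-01", periods=84, freq="D")
t = np.arange(84)
traffic = pd.Series(100 + 0.5 * t + 10 * np.sin(2 * np.pi * t / 7), index=days)

# A 7-day rolling mean averages out the weekly cycle and exposes the trend.
trend = traffic.rolling(window=7).mean()
slope = trend.diff().dropna().mean()

# Differencing removes the trend, a common step toward stationarity.
diffed = traffic.diff().dropna()

# Autocorrelation: high at lag 7 when a weekly pattern is present.
lag7 = diffed.autocorr(lag=7)
lag3 = diffed.autocorr(lag=3)

print("trend slope per day:", round(slope, 3))
print("lag-7 autocorrelation:", round(lag7, 3))
print("lag-3 autocorrelation:", round(lag3, 3))
```

The rolling window matches the length of the cycle on purpose. A window that does not cover a whole number of cycles would leave some of the seasonal wobble in the "trend."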

9. Clustering and Group Analysis

What if your data could sort itself into meaningful groups, revealing hidden structures you didn't even know to look for? That's the magic of clustering. This unsupervised learning technique is one of the more advanced exploratory data analysis methods, used to group similar data points together. Algorithms like K-means or DBSCAN find natural separations in your data, creating clusters where items inside a group are more similar to each other than to those in other groups. It’s like putting a bunch of LEGO bricks on a vibrating table and watching them naturally sort themselves by shape and size.

You're essentially asking the machine to find the "birds of a feather flock together" patterns without giving it any pre-defined labels. This reveals the inherent structure of your dataset, whether you're segmenting customers based on purchasing behavior or grouping documents by topic. For content creators, this could mean automatically identifying clusters of articles about "SEO," "content strategy," and "email marketing" from a large, unorganized archive.

When and Why to Use It

Clustering is your go-to method when you suspect there are distinct subgroups within your data but don't know what they are. It’s perfect for customer segmentation, anomaly detection (outliers that don't fit into any cluster), and discovering hidden patterns. A marketing team, for instance, could use clustering to find distinct customer personas, allowing them to create hyper-targeted campaigns instead of a one-size-fits-all message. Similarly, developers might cluster user bug reports to identify common root causes.

Key Insight: Clustering turns an ocean of data points into a few manageable islands. It helps you see the forest and the trees by simplifying complexity and revealing the underlying group dynamics.

Actionable Tips for Success

To get meaningful clusters, you need to guide the process carefully:

- Standardize Your Scales: If one variable is in dollars (0-1,000,000) and another is a rating (1-5), the dollar amount will dominate the clustering algorithm. Standardize your variables (e.g., to have a mean of 0 and standard deviation of 1) so each feature gets a fair vote.

- Find the "Elbow" and "Silhouette": Don't just guess the number of clusters. Use the elbow method to find a good potential number of clusters (K), and then confirm it with silhouette scores, which measure how well-defined your clusters are.

- Experiment with Algorithms: K-means is fast and popular, but it assumes your clusters are spherical. Try other methods like DBSCAN (for density-based shapes) or hierarchical clustering to see which approach best fits your data's natural structure.

- Validate with Experts: A cluster is just a collection of data points until you give it meaning. Work with domain experts to interpret the clusters. What makes "Customer Group 3" different from "Customer Group 1"? This is where data turns into business intelligence.
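The sketch below illustrates why standardizing matters and what an elbow check looks like. To keep it dependency-free it uses a bare-bones K-means written in NumPy with a deterministic farthest-first start, which is our own simplification; in real projects a library implementation such as scikit-learn's KMeans is the usual choice. The customer data is simulated.

```python
import numpy as np

def kmeans(X, k, n_iter=100):
    """Lloyd's algorithm with farthest-first initialization (deterministic)."""
    # Start from the first point, then keep adding the point that is
    # farthest from every centroid chosen so far.
    centroids = [X[0]]
    for _ in range(k - 1):
        d = np.min([np.linalg.norm(X - c, axis=1) for c in centroids], axis=0)
        centroids.append(X[d.argmax()])
    centroids = np.array(centroids)

    for _ in range(n_iter):
        # Assign each point to its nearest centroid.
        dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        # Move each centroid to the mean of its assigned points.
        updated = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(updated, centroids):
            break
        centroids = updated
    inertia = float(((X - centroids[labels]) ** 2).sum())
    return labels, inertia

# Hypothetical customers: three groups that differ in spend (dollars) and rating (1-5).
rng = np.random.default_rng(seed=0)
n = 30
spend = np.concatenate([rng.normal(20_000, 3_000, n),
                        rng.normal(20_000, 3_000, n),
                        rng.normal(80_000, 3_000, n)])
rating = np.concatenate([rng.normal(1.5, 0.2, n),
                         rng.normal(4.5, 0.2, n),
                         rng.normal(3.0, 0.2, n)])
true_group = np.repeat([0, 1, 2], n)
X = np.column_stack([spend, rating])

# Standardize: mean 0 and standard deviation 1 for every feature.
X_std = (X - X.mean(axis=0)) / X.std(axis=0)

# Elbow check: within-cluster sum of squares for several values of k.
inertias = {k: kmeans(X_std, k)[1] for k in range(1, 6)}
print({k: round(v, 1) for k, v in inertias.items()})

# Compare clustering on raw versus standardized features.
labels_raw, _ = kmeans(X, 3)
labels_std, _ = kmeans(X_std, 3)
for name, labels in [("raw", labels_raw), ("standardized", labels_std)]:
    found = [sorted(set(labels[true_group == g].tolist())) for g in range(3)]
    print(name, found)
```

On the raw features the dollar column swamps the rating, so the two low-spend groups cannot be told apart. After standardizing, each simulated group lands in its own cluster, and the inertia drops sharply up to k = 3 before flattening out.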

10. Bivariate and Multivariate Relationship Mapping

Once you’ve understood your variables one by one, it's time to play matchmaker. Bivariate and multivariate analysis is where you investigate how two or more variables interact. Think of it as moving from individual character studies to understanding the full plot of your data's story, complete with its complex relationships, alliances, and conflicts. This advanced exploratory data analysis method uses techniques like scatter plot matrices and parallel coordinates to uncover patterns that you would completely miss by only looking at one variable at a time.

These methods help answer more complex questions: Does an increase in one variable correspond to a decrease in another? Do three specific factors work together to produce a certain outcome? It's the difference between knowing the average word count of your articles and knowing if longer articles with more images also get more social shares. This is where the truly deep insights are often hidden.

When and Why to Use It

Use this when your initial analysis is done and you suspect variables aren't acting in isolation. It’s essential for building predictive models because you need to know which variables influence your target. For instance, a content team might use multivariate analysis to see how title length, keyword density, and publication time collectively impact an article's search engine ranking. It’s also critical for scientists studying complex systems, like how temperature, humidity, and nutrient levels jointly affect plant growth.

Key Insight: Your data doesn't live in a vacuum. Variables influence each other. Ignoring these interactions is like trying to understand a movie by only watching one character's scenes. You’ll get their story, but you’ll miss the entire point.

Actionable Tips for Success

To effectively map these complex relationships, here are a few pointers:

- Start Small: Don't try to visualize 20 variables at once. Begin with the 3 to 5 most important ones to avoid creating a "spaghetti chart" that’s impossible to read.

- Encode Information Smartly: Use visual cues like color, size, and shape on your plots to represent additional variables. For example, in a scatter plot of article length vs. shares, you could make the dots' color represent the topic category.

- Embrace Interactivity: Use tools like Plotly or Tableau to create interactive visualizations. Being able to zoom, filter, and hover over data points makes exploring complex, multidimensional relationships much easier.

- Validate Your Findings: If you spot an interesting multivariate pattern, don't just take it at face value. Follow up with more focused bivariate tests (like correlation tests) to confirm the relationship is statistically significant.
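As a tiny illustration of why a third variable matters, the sketch below compares an overall correlation with the same correlation computed inside each category. The article data is invented so that the effect is easy to see; real datasets are rarely this tidy.

```python
import pandas as pd

# Hypothetical articles: within each topic, longer pieces get slightly fewer
# shares, but the topic with longer articles gets far more shares overall.
df = pd.DataFrame({
    "topic":  ["news"] * 4 + ["guides"] * 4,
    "length": [500, 600, 700, 800, 1500, 1600, 1700, 1800],
    "shares": [50, 45, 40, 35, 150, 145, 140, 135],
})

# Bivariate view: length versus shares across all articles.
overall = df["length"].corr(df["shares"])
print(f"overall correlation: {overall:.2f}")

# Multivariate view: the same correlation within each topic.
by_topic = {}
for topic, group in df.groupby("topic"):
    by_topic[topic] = group["length"].corr(group["shares"])
    print(f"{topic}: {by_topic[topic]:.2f}")
```

Across all articles, length and shares rise together, yet inside each topic the relationship runs the other way. Coloring a scatter plot by topic, as suggested above, would make the same pattern visible at a glance.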


From Data Explorer to Insight Superhero

And just like that, you've reached the end of our grand tour of exploratory data analysis methods. Phew! Take a moment, grab another coffee. You've just equipped yourself with a full-blown utility belt for turning messy, mysterious datasets into sources of clear, actionable insight.

Think of yourself as a data detective. You started with the basic tools of the trade: running descriptive statistics to get the lay of the land and whipping up visualizations to see the story's main characters. From there, you graduated to more advanced sleuthing, hunting for clues in correlation matrices, interrogating missing values, and identifying the outliers that just didn't fit the narrative. It’s not just about running code; it's about developing an intuition, a gut feeling for what your data is trying to tell you.

Turning Knowledge into Action

We've covered a lot of ground, from the fundamentals of distribution analysis to the complexities of dimensionality reduction with PCA. It might seem like a daunting list, but remember this: you don't need to use every single method on every single project.

The real skill of a data explorer isn't knowing a hundred techniques, but knowing which two or three to apply to get 80% of the insights in 20% of the time.

Your journey is about building a mental flowchart. Does your data have a time component? Jump to time series analysis. Are your features overwhelming your model? Dimensionality reduction is your friend. This article is your reference manual, your field guide for that journey. The key is to stop seeing EDA as a chore to be completed and start viewing it as a conversation to be had. Each plot you create, each summary you calculate, is you asking a question and the data giving you an answer.

So, what's next on your path to becoming an insight superhero? Practice.

- Grab a Dataset: Go to Kaggle, Google Dataset Search, or use your own project data. Pick something that genuinely interests you. It’s way more fun to analyze data on vintage guitars or coffee bean origins than something you don't care about.

- Start Simple: Don't try to boil the ocean. Begin with df.describe() or summary(df). Plot a few histograms. What’s the most basic story you can tell in the first five minutes?

- Ask "Why?": See a spike in your distribution? Find a weird outlier? Don't just note it and move on. Dig in. Ask why it's there. This is where the real gold is found.

- Tell a Story: The ultimate goal of all these exploratory data analysis methods is to build a narrative. Your final mission is to explain your findings to someone else, a colleague, a friend, or even your dog (they're great listeners). If you can make them understand the data's core message, you've won.

Mastering EDA is what separates a good analyst from a great one. It’s the foundation upon which solid models, compelling business reports, and game-changing strategies are built. Without it, you're just flying blind. With it, you're the person who can walk into a meeting and say, "I was looking at the data, and I found something interesting..." That’s a superpower.

Ready to make your data exploration workflow faster and more integrated? Instead of juggling multiple scripts, documents, and visualization tools, check out Zemith. It's a platform designed to centralize your research, help you generate code snippets for analysis, and bring all your insights together in one fluid workspace. Give it a try and turn your data exploration from a complex process into a streamlined path to discovery.

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

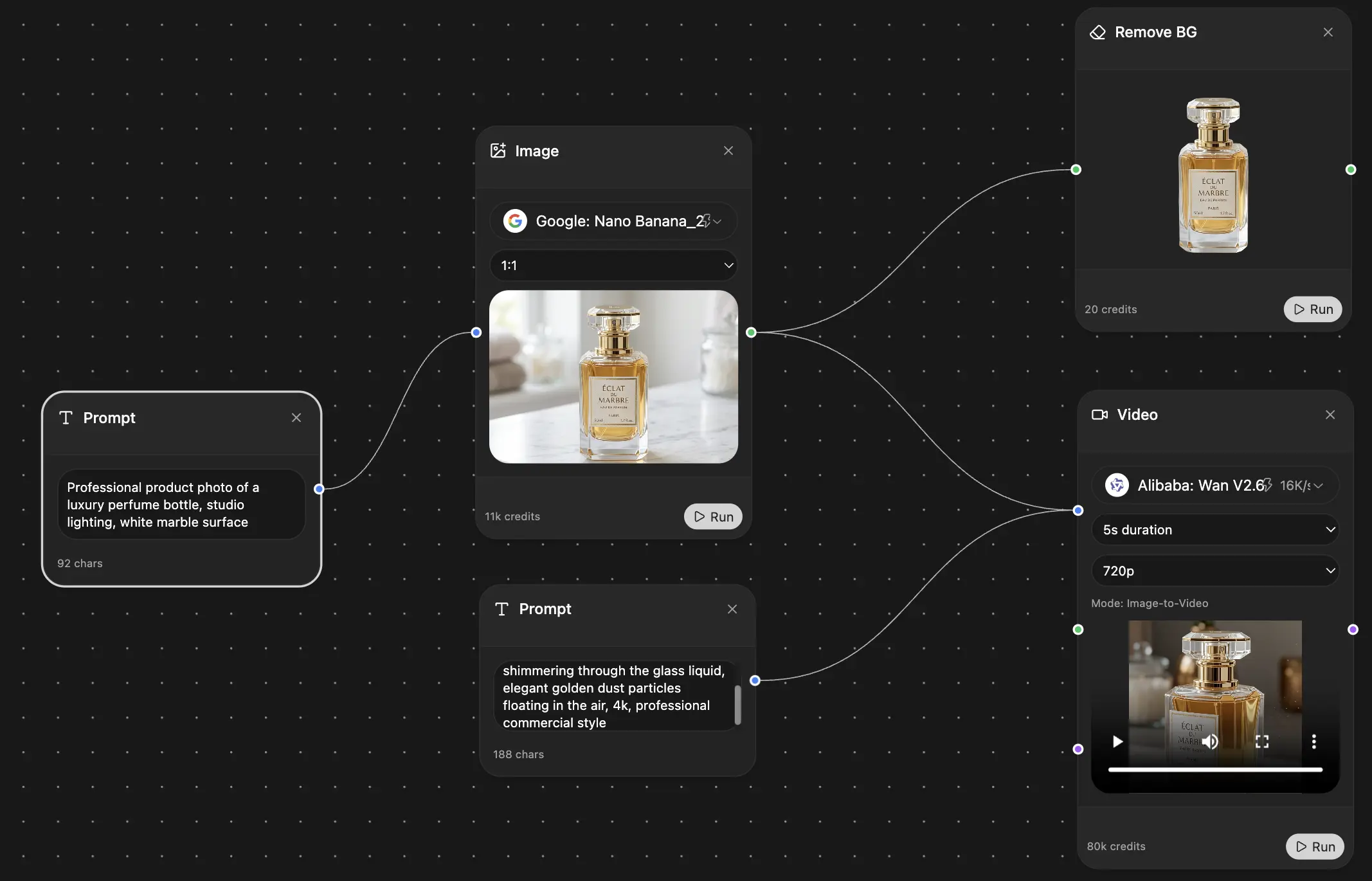

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers

What Our Users Say

Great Tool after 2 months usage

"I love the way multiple tools they integrated in one platform. Going in the right direction."

— simplyzubair

Best in Kind!

"The quality of data and sheer speed of responses is outstanding. I use this app every day."

— barefootmedicine

Simply awesome

"The credit system is fair, models are perfect, and the discord is very responsive. Quite awesome."

— MarianZ

Great for Document Analysis

"Just works. Simple to use and great for working with documents. Money well spent."

— yerch82

Great AI site with accessible LLMs

"The organization of features is better than all the other sites — even better than ChatGPT."

— sumore

Excellent Tool

"It lives up to the all-in-one claim. All the necessary functions with a well-designed, easy UI."

— AlphaLeaf

Well-rounded platform with solid LLMs

"The team clearly puts their heart and soul into this platform. Really solid extra functionality."

— SlothMachine

Best AI tool I've ever used

"Updates made almost daily, feedback is incredibly fast. Just look at the changelogs — consistency."

— reu0691