How to Talk to AI: Get What You Really Want

Learn how to talk to AI. Master prompt design, advanced techniques & tips to get what you want from platforms like Zemith.

You open a chat, type “write me a catchy tagline,” and the AI replies with something that sounds like it belongs on a motivational mousepad in a dentist’s waiting room.

Then you try again. “Make it punchier.” It gets louder, not better.

Then you paste in your whole project brief, your target audience, three examples you like, and a note saying “please don’t make it sound like a robot trying to sell vitamins.” Suddenly the output is usable. Not perfect. But close enough that you can work with it.

That little moment is the whole game. Usually the issue isn't an AI problem. It's a conversation problem.

I’ve spent a lot of time talking to AI tools for writing, coding, brainstorming, research, summarizing ugly notes, and turning half-baked ideas into something real. The biggest aha moment wasn’t learning a magic prompt formula. It was realizing that AI works better when you treat it less like a vending machine and more like a collaborator who needs direction, feedback, and the occasional “nope, try again, but this time with less nonsense.”

That shift matters now because AI has moved from novelty to normal work. Nine out of 10 organizations actively support AI implementation for competitive advantage, and AI is projected to contribute $15.7 trillion to the global economy by 2030, according to . If your workplace is already using AI, learning how to talk to AI isn’t a quirky side skill anymore. It’s part communication, part judgment, part workflow design.

The good news is that this is learnable. You don’t need to sound technical. You need to get clearer, more intentional, and more willing to steer the conversation instead of hoping the machine reads your mind.

Are You and Your AI Not on Speaking Terms?

A lot of bad AI interactions follow the same script.

You ask for “a blog outline.” It gives you five headings that could fit virtually any topic on Earth. You ask for “help debugging this code.” It returns a wall of confident text and one suggestion that breaks three other things. You ask for “creative social post ideas,” and it serves up content with the personality of a laminated office poster.

That can make AI feel overrated fast.

The real problem usually isn't the model

It's common to blame the tool first. Fair enough. Some outputs are messy. Some are generic. Some are confidently wrong in a way that feels almost impressive. But a big chunk of frustration comes from treating AI like a magic box.

Humans do this too. If you walked up to a designer and said, “Make it better,” they’d have questions. Better for who? Better how? Better for conversions, readability, trust, clicks, or brand fit?

AI has the same issue, except it won’t roll its eyes first.

Good prompting is less like casting a spell and more like briefing a smart intern who works very fast and needs surprisingly specific instructions.

That’s why so many people bounce between tools, chasing the one model that will somehow “just get it.” Usually the bigger win comes from improving the conversation, not opening your twelfth browser tab. If you want a quick primer on what makes AI chat feel more natural in the first place, Zemith has a useful explainer on .

Why this skill suddenly matters at work

The workplace has changed under everybody’s feet. AI isn’t sitting off to the side anymore. Teams use it to draft copy, summarize documents, explain code, generate study materials, brainstorm campaigns, and compare options before a meeting even starts.

That means your edge doesn’t come from merely having access. It comes from knowing how to get reliable, relevant output without wasting half your afternoon in prompt limbo.

Here’s the practical version:

- If you write for work, you need AI to match tone, audience, and format.

- If you build things, you need it to reason through bugs and constraints.

- If you research, you need it to separate signal from fluff.

- If you manage projects, you need cleaner summaries and sharper next steps.

The people getting the most from AI aren’t necessarily the most technical. They’re often the ones who give the clearest instructions and the best feedback.

Collaboration beats button-mashing

The mindset shift is simple. Stop asking, “What prompt should I use?” Start asking, “What does this AI need from me to do this well?”

That question changes everything.

It leads you toward better context, better constraints, better follow-ups, and better results. It also makes your workflow saner because you stop relying on random one-shot prompts and start building a repeatable way of working.

And yes, if you’re juggling too many tools, that friction adds up. Switching between separate chat apps, writing tools, image tools, and research tabs can turn a simple task into digital plate-spinning. One workspace beats ten scattered ones when you’re trying to build a real conversation instead of a pile of disconnected prompts.

The Foundation of a Great AI Conversation

The fastest way to improve AI output is to stop being vague in ways that feel normal to humans. We fill in gaps for each other all the time. AI doesn’t reliably do that. It guesses.

A better approach is to build every prompt on four pieces: clarity, context, constraints, and character.

Clarity beats cleverness

A lot of people try to sound efficient with AI and accidentally become mysterious.

“Landing page help.” “Need better intro.” “Fix this.”

The AI can respond, but it has to guess what you mean. A clear prompt sounds more like this:

Rewrite this landing page intro for first-time SaaS buyers. Keep it under 60 words. Make it sound confident, not hypey. Avoid jargon like “synergy” or “unlock.”

That’s not fancy. It’s usable.

If you want more examples of useful prompt phrasing, Zemith has a handy list of that can help you get out of the “uh, what do I even type?” phase.

Context gives the AI a map

Think of context as the backstory. Without it, the model fills in blanks with generic patterns from training. With it, you get something specific.

Compare these two:

- “Write a product description.”

- “Write a product description for a minimalist desk lamp sold to remote workers. Focus on glare reduction, compact design, and a calm tone. Avoid luxury language.”

The second one gives the AI a world to work inside.

Here are a few kinds of context that matter:

- Audience context. Who is this for?

- Situation context. What is happening?

- Source context. What notes, draft, or document should it use?

- Intent context. What should the reader do, feel, or understand?

Constraints are not boring

People skip constraints because they think constraints make prompts stiff. In practice, constraints make outputs better.

Constraints tell the model where the walls are. That helps it stop wandering into weird territory.

Useful constraints include:

- Length limits like “under 120 words”

- Format rules like “give me a table with three columns”

- Tone limits like “friendly, not salesy”

- Content exclusions like “don’t use buzzwords”

- Scope boundaries like “focus only on beginner mistakes”

When you don’t set boundaries, the AI often tries to be broad and helpful. That’s nice in theory. In practice, it can produce a buffet when you asked for one sandwich.

Character changes the voice

This is the part people either overdo or ignore.

Giving the AI a role can help a lot, especially when style matters. You’re not pretending it’s a real person. You’re telling it what lens to use.

Try prompts like:

- “Act as a patient writing coach.”

- “Respond like a senior frontend developer reviewing a pull request.”

- “Explain this as a teacher talking to a smart beginner.”

That role cue helps the model choose vocabulary, structure, and tone.

Practical rule: If the output feels generic, assign a clearer role. If it feels scattered, tighten the constraints. If it feels off-target, add context.
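If it helps to see the four pieces as a structure, here is a minimal sketch in Python. The `PromptBrief` class and its field names are my own illustration, not part of any tool mentioned here. It just joins whichever pieces you fill in into one plain-text prompt.

```python
from dataclasses import dataclass, field


@dataclass
class PromptBrief:
    """Hypothetical container for clarity, context, constraints, and character."""
    task: str                                             # clarity: the one thing you want done
    context: str = ""                                     # audience, situation, source, intent
    constraints: list[str] = field(default_factory=list)  # length, format, tone, exclusions
    role: str = ""                                        # character: the lens to use

    def render(self) -> str:
        """Join the non-empty pieces into one plain-text prompt."""
        parts = []
        if self.role:
            parts.append(f"Act as {self.role}.")
        parts.append(self.task)
        if self.context:
            parts.append(f"Context: {self.context}")
        if self.constraints:
            bullets = "\n".join(f"- {c}" for c in self.constraints)
            parts.append(f"Constraints:\n{bullets}")
        return "\n\n".join(parts)


brief = PromptBrief(
    task="Rewrite this landing page intro.",
    context="The readers are first-time SaaS buyers.",
    constraints=[
        "Keep it under 60 words",
        "Confident, not hypey",
        "Avoid jargon like 'synergy' or 'unlock'",
    ],
    role="a patient writing coach",
)
print(brief.render())
```

The point is not the class itself. It is that an empty field is visible, so you notice when you skipped context or forgot to set a boundary.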

Before and after examples

Here’s where people usually get the aha moment.

A small habit that pays off fast

Don’t try to write perfect prompts in one go. Write a decent prompt, see what breaks, then improve it.

That’s why a low-pressure workspace helps. Draft the request, rephrase it, tighten a sentence, try again. Treat prompting like revision, not a one-time test where the AI either passes or ruins your morning.

If you build this habit early, everything else gets easier.

Level Up Your Prompts with Advanced Techniques

Once the basics click, you can start using a few more advanced methods that make AI feel much less random. These aren’t secret hacks. They’re structured ways to help the model reason better, stay on task, and improve through feedback.

Ask for step-by-step reasoning when the task is messy

Some tasks need more than a direct answer. Planning a feature, diagnosing a bug, comparing options, or outlining a long article all benefit from a reasoning path.

That’s where chain-of-thought style prompting helps. In plain English, you’re asking the AI to work through the problem in steps.

Try:

- “Think step by step and identify the likely cause of this bug.”

- “Break this problem into smaller parts before answering.”

- “Walk through your reasoning, then give the final recommendation.”

This is especially useful when the task has multiple moving parts. It makes the model lay out intermediate steps before committing to an answer, which often makes the output more coherent.

Use examples when you want a specific pattern

If you want the AI to imitate a format, examples beat abstract instructions.

That’s few-shot prompting. You provide a couple of examples of the kind of output you want, then ask for another one in the same style.

For example:

Example 1

Input: “Late delivery”

Output: “The package arrived after the expected date, which caused planning issues.”

Example 2

Input: “Confusing checkout”

Output: “The checkout flow made it hard to understand the final total before payment.”

Now rewrite this phrase in the same style: “Bad onboarding”

That works because the model can infer the pattern instead of guessing from vague style labels like “professional but approachable but concise but not boring.” That phrase has ended many afternoons.
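If you reuse the same pattern often, you can assemble the few-shot prompt from a list of pairs instead of retyping it. This is a rough sketch. The function name `few_shot_prompt` and the exact layout are assumptions for illustration, and the examples are the two from above.

```python
def few_shot_prompt(examples: list[tuple[str, str]], new_input: str) -> str:
    """Format input/output pairs, then leave the last output blank for the model."""
    blocks = []
    for number, (source, rewritten) in enumerate(examples, start=1):
        blocks.append(f"Example {number}\nInput: {source}\nOutput: {rewritten}")
    # The final block repeats the pattern but stops at "Output:" on purpose.
    blocks.append(
        f"Now rewrite this phrase in the same style.\nInput: {new_input}\nOutput:"
    )
    return "\n\n".join(blocks)


examples = [
    ("Late delivery",
     "The package arrived after the expected date, which caused planning issues."),
    ("Confusing checkout",
     "The checkout flow made it hard to understand the final total before payment."),
]
prompt = few_shot_prompt(examples, "Bad onboarding")
print(prompt)
```

Ending on an open `Output:` line is a small design choice. It nudges the model to continue the pattern rather than comment on it.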

Set a stable role for the whole conversation

If you’re doing a long task, don’t repeat the same setup every turn. Give the AI a stable operating mode early.

This is often called a system-style instruction or persistent context. You define the job once, then keep building inside that frame.

A good version sounds like this:

- You are helping me write product documentation for non-technical users.

- Prefer short sentences.

- Flag unclear assumptions before drafting.

- Ask for missing information when needed.

That reduces drift. It also makes long sessions smoother because the AI has a clearer sense of what “good” looks like in that conversation. If you want a deeper primer on prompt design vocabulary, Zemith has a straightforward guide on .
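Under the hood, many chat tools keep a running list of messages with the setup instruction pinned at the top. The sketch below assumes that common `role`/`content` message shape. The `Conversation` class is hypothetical and does not call any real API. It only shows how the frame stays attached to every turn.

```python
SYSTEM_INSTRUCTION = (
    "You are helping me write product documentation for non-technical users. "
    "Prefer short sentences. "
    "Flag unclear assumptions before drafting. "
    "Ask for missing information when needed."
)


class Conversation:
    """Keeps one system-style instruction at the top of every request."""

    def __init__(self, system_instruction: str) -> None:
        self.messages = [{"role": "system", "content": system_instruction}]

    def add_user_turn(self, text: str) -> list[dict[str, str]]:
        """Record the user's message and return a copy of the full history to send."""
        self.messages.append({"role": "user", "content": text})
        return list(self.messages)

    def add_assistant_turn(self, text: str) -> None:
        """Record the model's reply so later turns build on it."""
        self.messages.append({"role": "assistant", "content": text})


chat = Conversation(SYSTEM_INSTRUCTION)
first_request = chat.add_user_turn("Draft the 'Getting started' page.")
chat.add_assistant_turn("(model reply would go here)")
second_request = chat.add_user_turn("Now shorten the second paragraph.")
print(len(first_request), len(second_request))
```

Notice that the second request still starts with the same instruction. You set the job once and every later turn inherits it.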

The reflective prompting cycle is the sneaky superpower

Many users stop after the first answer. Power users don’t.

One of the most useful techniques is the reflective prompting cycle. You ask the AI to examine what went wrong, then improve the prompt or the response. According to , this can improve performance by up to 30%.

That sounds technical, but the actual move is simple.

You say things like:

- “This answer is too broad. Explain why it missed the mark, then rewrite it.”

- “Reflect on the weak parts of your response and generate a better prompt for this task.”

- “What assumptions caused the confusion in your last answer?”

That extra reflection often produces a much stronger second round because the model is forced to inspect its own miss.

When the first answer is mediocre, don’t throw the whole chat away. Ask the AI what it misunderstood, what was missing, and how the request should be reframed.

A practical sequence you can steal

Here’s a workflow I use a lot when the task matters:

1. Start natural. “Can you help me draft an onboarding email for new customers?”

2. Add context and limits. “It’s for first-time users of a project management tool. Keep it under 150 words. Friendly tone. One CTA.”

3. Critique the result. “Too generic. It doesn’t acknowledge the user just signed up. Make it more grounded.”

4. Ask for reflection. “Why was the first version generic? Rewrite the prompt you wish I had given you.”

5. Run the improved version. Use the refined prompt and compare outputs.

That loop feels weird at first. Then it starts feeling like cheating, in the good way.
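Here is the same loop as a small Python sketch, in case you want to script it. Everything named here is an assumption: `ask` stands for whatever function sends text to your model, and `fake_model` is a stand-in so the example runs offline. It is one way to wire the cycle, not the only one.

```python
from typing import Callable


def reflective_cycle(ask: Callable[[str], str], prompt: str, critique: str) -> dict[str, str]:
    """Run one draft, one reflection step, then rerun with the improved prompt."""
    first_draft = ask(prompt)
    reflection_request = (
        f"Original prompt: {prompt}\n"
        f"Your answer: {first_draft}\n"
        f"My critique: {critique}\n"
        "Work out why the answer missed the mark, then reply with only "
        "the prompt you wish I had given you."
    )
    improved_prompt = ask(reflection_request)
    second_draft = ask(improved_prompt)
    return {
        "first_draft": first_draft,
        "improved_prompt": improved_prompt,
        "second_draft": second_draft,
    }


def fake_model(text: str) -> str:
    """Stand-in for a real model call so the sketch runs without a network."""
    if text.startswith("Original prompt:"):
        return "Write a 150-word onboarding email for users who just signed up."
    return f"[draft for: {text}]"


result = reflective_cycle(
    fake_model,
    prompt="Draft an onboarding email for new customers.",
    critique="Too generic. It doesn't acknowledge the user just signed up.",
)
print(result["improved_prompt"])
```

Keeping both drafts in the result makes the last step easy. You compare them side by side instead of trusting that the second one is better.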

When to switch techniques

Different methods fit different problems.

Don’t confuse complexity with quality

Advanced prompting doesn’t mean writing a giant wall of instructions every time. Sometimes one extra sentence does more than a whole prompt manifesto.

The trick is choosing the right lever. Add reasoning when logic matters. Add examples when pattern matters. Add reflection when revision matters.

That’s how you stop “using AI” and start directing it.
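To make "choosing the right lever" concrete, here is a tiny sketch that adds exactly one extra sentence to a task. The `LEVERS` table and the `add_lever` function are hypothetical names, and the wording of each instruction is only an example you would adapt.

```python
LEVERS = {
    # logic matters: ask for a reasoning path
    "logic": "Break this problem into smaller parts, walk through your reasoning, "
             "then give the final recommendation.",
    # pattern matters: point at examples
    "pattern": "Follow the style of the examples below exactly.",
    # revision matters: ask for reflection
    "revision": "Explain what was weak in your last answer, then rewrite it.",
}


def add_lever(task: str, need: str) -> str:
    """Append one extra instruction chosen by what the task needs most."""
    if need not in LEVERS:
        raise ValueError(f"need must be one of {sorted(LEVERS)}")
    return f"{task}\n\n{LEVERS[need]}"


print(add_lever("Why does this checkout test fail on weekends?", "logic"))
```

One lever per prompt is a deliberate limit. It keeps you from stacking every technique onto a task that only needed one.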

Tailoring Prompts for Any Job Role or Task

A strong AI prompt always has a job. Not just “write something” or “help me think,” but a real task with a real audience and a real output.

That’s why the same prompt style won’t work equally well for writers, developers, researchers, and marketers. Each role needs different ingredients.

Writers need voice and structure

Writers often get tripped up because the AI produces clean sentences with zero personality. It sounds polished, but not like you.

A better writing prompt includes raw material, audience, tone, and format. Try this:

Turn these bullet points into a blog intro for small business owners who feel overwhelmed by AI tools. Keep it plain-English, slightly playful, and practical. Don’t sound corporate. End with a sentence that sets up the main lesson.

That prompt works because it gives the model something to build from and a voice to aim for.

If you’re using AI while planning a career move, the same principle applies. I like this guide on because it shows how small prompt changes can improve resumes, outreach, and interview prep without making everything sound canned.

Developers need boundaries and verification

Developers usually need one of three things from AI: generation, explanation, or debugging.

Here are examples that work better than “write code for X”:

- “Create a React component for a pricing card with props for title, price, features, and CTA text. Keep it readable and use functional components.”

- “Explain this sorting function to a junior developer. Focus on what each loop does.”

- “Write unit tests for this function. Cover normal use, edge cases, and invalid input.”

The key is that each prompt tells the AI what kind of engineering help you want. Build, explain, review, or test. Those are different jobs.

Researchers need synthesis, not summary sludge

Research prompts fail when they ask for “everything.” AI loves to over-answer. A better prompt narrows the lens.

Try:

Compare the main arguments across these documents about remote work policy. Identify agreements, disagreements, and unanswered questions. Present the result as a research memo for a team lead.

That framing pushes the model toward synthesis.

If you want deeper output, ask follow-ups like:

- “What assumptions show up repeatedly?”

- “What important question are these sources not answering?”

- “Where would a skeptical reader want more evidence?”

That last one is especially useful. It makes the AI act more like an analyst and less like an enthusiastic summarizer.

Marketers need angle, audience, and channel fit

Marketing prompts go wrong when they ask for “10 content ideas” with no audience or business goal attached.

A stronger version:

Generate campaign ideas for a B2B email sequence targeting operations managers at midsize companies. Focus on time savings, clarity, and low-friction adoption. Avoid gimmicky subject lines.

Now the AI has a target.

You can also ask for competitive thinking:

Based on these competitor pages, identify the messaging themes they repeat most often, the gaps they leave open, and one angle we could own instead.

That kind of prompt is useful because it asks for interpretation, not just output volume.

A quick table for prompt building

Mini prompt recipes you can copy

Here are a few practical starting points.

- For a writer. “Rewrite this draft to sound more human and less formal. Keep the meaning. Shorten long sentences. Don’t remove the joke in paragraph two.”

- For a developer. “Review this code for readability and likely bugs. Explain the top three issues first, then show a cleaner version.”

- For a researcher. “Summarize these notes into themes, then list what I still need to verify before presenting this.”

- For a marketer. “Write three ad variations for this product. One should be benefit-led, one curiosity-led, and one problem-solution.”

The more your prompt sounds like a real work brief, the more useful the answer tends to be.

One platform can make this easier because your writing, coding, research, and image work stop living in separate silos. That reduces copy-paste chaos and helps you keep context attached to the task.

Beyond Text: Navigating Voice and Visual AI

Typing is only one way to talk to AI now. Voice and images change the interaction a lot, and they can be surprisingly useful when typing feels slow, awkward, or too rigid.

Voice works best when you think out loud

People often over-structure voice prompts because they’re trying to speak like they type. That usually makes the conversation clunky.

Voice AI tends to work better when you talk naturally, then tighten things after. For example:

“Help me think through this landing page. I want it to feel calm and useful, not pushy. The audience is first-time buyers. Ask me questions if I’m being vague.”

That sounds human because it is human.

This is where long-term trust matters too. As AI becomes more embedded in daily work and more personal interactions, it helps to treat the conversation as something you manage over time, not just a one-off command. Pew’s discussion of AI as a “cognitive prosthetic” argues for trust-based use and for prompts that surface bias, such as asking the system to “Assess your response for cultural bias from your training data” in more intimate interactions like voice chat, as noted in .

That’s a useful habit for voice because spoken interaction feels more immediate. You may share more than you intended. You may also accept answers too quickly because the conversation feels smooth.

Visual prompting is still a conversation

Images are prompts too.

You can upload a screenshot and ask the AI to describe design issues, turn a product photo into ad copy, or analyze a visual style so you can create something similar. The trick is to tell the model what kind of seeing you want.

Try requests like:

- “Describe the visual style of this image in a way a designer could reuse.”

- “Look at this dashboard screenshot and list the usability problems.”

- “Use this product photo to generate three caption ideas for social media.”

Those are all forms of communication. You’re giving the AI a visual input and a textual job.

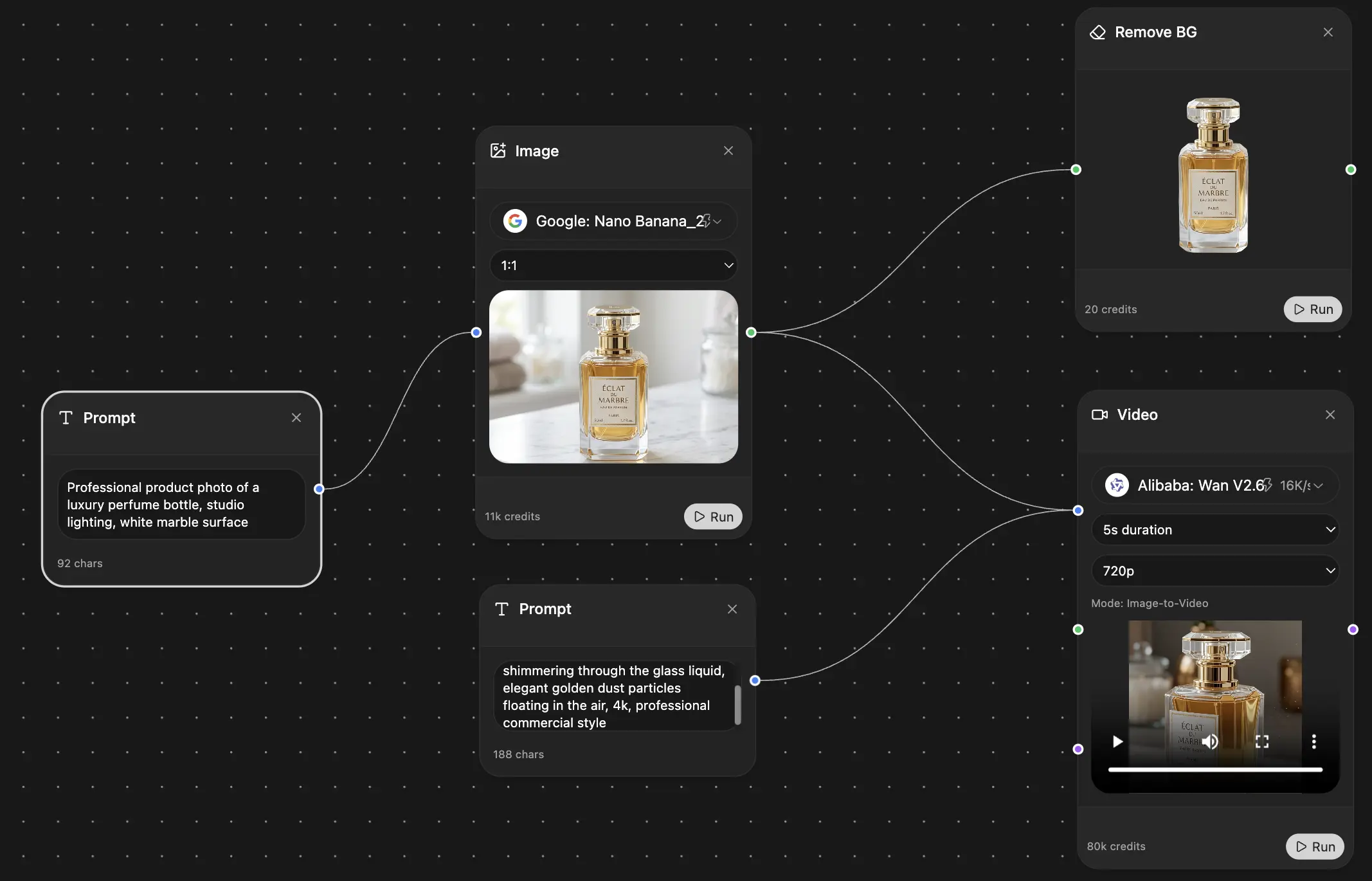

One conversation across modes

The interesting part is that text, voice, and image prompting aren’t separate skills. They’re variations of the same collaboration.

You still need:

- Clear intent so the model knows what to do

- Context so it knows what matters

- Boundaries so it doesn’t drift

- Feedback so the next response improves

If you’re speaking instead of typing, keep requests shorter and more conversational. If you’re using an image, tell the model what lens to apply. Designer, analyst, teacher, marketer, critic. Same principle, different medium.

For people who use voice input regularly, a guide to can help smooth out that shift from typed prompts to spoken ones.

A good trust habit for multimodal AI

Ask the AI to check itself when the task touches identity, culture, accessibility, or personal decision-making.

For example:

Review your answer for possible cultural bias, missing context, or assumptions based on dominant-language norms.

That one sentence won’t make the model perfect. It will make you a better collaborator. And that matters more as AI moves closer to the way we naturally think, speak, and work.

Sidestepping Common AI Pitfalls and Hallucinations

AI can sound persuasive while being wrong. That’s the part that catches people. The answer arrives in full sentences, with confidence, and occasionally with the vibe of a student who didn’t do the reading but still volunteered first.

You need a few defensive habits.

When the answer feels off, diagnose before you rage-quit

Most failures come from a short list of issues:

- The prompt was too broad and the AI filled the gaps with generic filler.

- The chat got messy and earlier context started pulling the model in the wrong direction.

- The task mixed too many goals so the result became blurry.

- The model invented details because it didn’t know and tried to be helpful anyway.

The fix depends on the problem.

Ask better follow-up questions

A weak answer doesn’t always mean the session is doomed. Sometimes you just need a sharper follow-up.

Useful recovery prompts:

- “What assumptions are you making here?”

- “Which part of this answer is least certain?”

- “Rewrite this using only the information I gave you.”

- “What would you need clarified to answer this properly?”

Those questions expose the wobble.

If an answer matters, don’t just ask “is this correct?” Ask “what might be wrong, missing, or based on assumption?”

That gets you closer to verification mode, which is where human judgment becomes essential.

The invisible gap most guides ignore

There’s another pitfall that standard prompting advice barely touches. Language and culture.

According to , AI language models cover only a fraction of the world’s roughly 7,000 languages, and over 2,500 are at risk of digital extinction due to training data biases. That has real consequences for how to talk to AI if your first language, local idioms, or cultural norms fall outside dominant training patterns.

In plain terms, the AI may understand your words less well, miss politeness cues, flatten local context, or produce awkward translations that sound “correct” but aren’t culturally right.

Better prompting for non-dominant languages

If you work across languages or communities that are underrepresented in training data, try these tactics:

- State the language goal clearly. Say whether you want translation, simplification, preservation of tone, or adaptation for a different audience.

- Explain local meaning. If a phrase is idiomatic or culturally specific, define it before asking the AI to rewrite it.

- Ask for uncertainty flags. Prompt the AI to note where translation or cultural interpretation may be weak.

- Compare outputs. Run the same request through different models and inspect where they differ.

- Back-translate important text. Ask the AI to translate the result back into the original language so you can spot drift.
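The back-translation tactic is easy to turn into a rough check. In this sketch, `translate` is a placeholder for whatever model call you use, and `fake_translate` is a toy stand-in that deliberately loses one idiom so there is something to detect. The score uses character-level similarity from Python's standard `difflib`, which is a crude proxy. Treat a high score as a cue to read the text yourself, not as a verdict.

```python
from difflib import SequenceMatcher
from typing import Callable


def back_translation_drift(
    original: str,
    translate: Callable[[str, str], str],
    target_lang: str,
    source_lang: str,
) -> float:
    """Translate out and back, then return drift from 0.0 (identical) up to 1.0."""
    translated = translate(original, target_lang)
    round_trip = translate(translated, source_lang)
    similarity = SequenceMatcher(None, original.lower(), round_trip.lower()).ratio()
    return 1.0 - similarity


def fake_translate(text: str, lang: str) -> str:
    """Toy stand-in for a model call: it mangles one idiom on the way back to English."""
    if lang == "en":
        # Drop the "[xx] " tag added below, then simulate a literal translation.
        plain = text.split("] ", 1)[-1]
        return plain.replace("break a leg", "injure your leg")
    return f"[{lang}] {text}"


drift = back_translation_drift("break a leg tonight", fake_translate, "xx", "en")
print(f"drift: {drift:.2f}")
```

A check like this catches wording that changed on the round trip. It will not catch a translation that is stable but culturally off, which is why the other tactics in the list still matter.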

If you’re checking factual material, source quality matters too. A practical guide to is worth keeping in your toolkit because hallucinations and weak citations often travel together.

The deeper lesson is simple. Don’t mistake fluency for accuracy. Smooth wording can hide weak reasoning, cultural mismatch, or fabricated detail. Your job isn’t to be suspicious of everything. It’s to know when the answer deserves a second pass.

Becoming an AI Whisperer with Zemith

The people who get the most from AI usually do three things well. They give clear instructions, they revise instead of settling for the first draft, and they keep the whole conversation attached to the work instead of scattering it across a pile of tabs.

That’s why the workflow matters as much as the prompt.

A single workspace can help when you’re moving from brainstorm to research to draft to revision. Zemith is one example of that kind of setup. It combines multi-model chat, document work, writing support, coding help, image tools, and project organization in one place, which is useful if you want one ongoing conversation instead of a disconnected trail of copied prompts and half-lost outputs.

If you write a lot, it also helps to look sideways at other tool stacks and workflows. This roundup of the is a practical way to compare how different writing-focused tools fit different creative habits.

The main point is bigger than any single app. Learning how to talk to AI is really learning how to think with it. You bring judgment, goals, context, and standards. The model brings speed, range, and iteration. That partnership gets stronger when your tools stop fighting your process.

If you want one place to practice better prompting, compare models, organize chats, work with documents, generate images, and keep projects from turning into tab soup, take a look at Zemith.
