Master Statistics with Our 2026 Math Statistics Solver

Stuck on a stats problem? Our ultimate math statistics solver guides you. Get solutions via manual calculations, Python/R code, and 2026's AI tools.

You’ve got a stats problem open, a spreadsheet that looks vaguely threatening, and some result that feels wrong. Maybe it’s a p-value that seems too neat. Maybe your homework says “show all work” while your brain says “absolutely not.” Maybe you searched for a math statistics solver and found a pile of calculators that can compute something, but not help you think.

That’s the frustrating part. Users don’t just need an answer. They need to know which test to use, how to check the assumptions, how to explain the result, and how to move from raw data to something they can turn in, publish, or present without sweating through their shirt.

I like to think about stats solving in three modes. There’s the by-the-book way, where you do enough by hand to understand what the math is doing. There’s the smart coder way, where Python or R handles the repetitive work. Then there’s the new-age AI way, where you can discuss the problem, generate code, inspect documents, and keep the whole workflow in one place instead of playing tab-juggling Olympics.

Stuck in Statistical Purgatory? Here’s Your Escape Plan

A common scene goes like this. You have a small dataset, a question from class or work, and a deadline that’s no longer “upcoming” so much as “staring directly into your soul.” You know the variables. You vaguely remember words like correlation, t-test, significance, maybe regression if things have gotten spicy. But picking the right move feels harder than the actual arithmetic.

The trap is assuming there must be one magical math statistics solver that handles everything. In practice, good stats work is more like using the right wrench for the right bolt. A handheld calculator helps with one-off computations. Code helps when the same analysis needs to be run again. AI helps when the bottleneck isn’t only calculation, but interpretation, formatting, debugging, and turning findings into a useful explanation.

That’s why I don’t treat stats tools as rivals. I treat them as stages of maturity.

- Manual work builds intuition. You learn what the formula is trying to measure.

- Code builds repeatability. You stop redoing the same chores.

- AI helps when the work spills beyond math into documents, prompts, and messy real-world context.

If you’re the kind of person who wants to get better, not just faster, it helps to sharpen your thinking first, because stats errors usually start before the calculator does.

Standard deviation sounds scary until you realize it’s just the dataset’s way of saying, “I contain multitudes.”

Solving Stats by Hand and Why It’s Still Worth Knowing

Doing stats by hand feels old-school because it is. It’s also one of the fastest ways to stop being fooled by tools.

When you work through even one problem manually, the formulas stop looking like wizard spells. You start noticing what changes the result, what doesn’t, and when an output from software looks suspicious. That instinct matters more than people admit.

A simple example with correlation

Take a tiny example. Say you want to check whether hours of sleep and cups of coffee move together. You record a few days of data, one sleep value and one coffee value per day.

You don’t need to compute every decimal here to learn something useful. The point is the structure.

1. Find the mean of each variable. You need a center point for sleep and a center point for coffee.

2. Measure how far each observation is from its mean. This shows whether each day is above or below average.

3. Multiply those paired deviations. If high sleep tends to come with low coffee, many products will be negative. If both rise together, many products will be positive.

4. Scale the result by the spread of each variable. This turns the raw covariance idea into a standardized correlation.

That last step is the secret sauce. Correlation isn’t just “do they move together.” It’s “do they move together after accounting for their scale.”
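The four steps can be sketched in plain Python. This is a minimal illustration rather than a replacement for a library routine. It borrows the five sleep and coffee values from the Python example later in this article, and the variable names are made up for the walkthrough.

```python
import math

# Illustrative values (the same toy numbers as the pandas example).
sleep_hours = [8, 7, 6, 5, 4]
coffee_cups = [1, 2, 2, 3, 4]

# Step 1: find the mean of each variable.
mean_sleep = sum(sleep_hours) / len(sleep_hours)
mean_coffee = sum(coffee_cups) / len(coffee_cups)

# Step 2: measure how far each observation is from its mean.
dev_sleep = [x - mean_sleep for x in sleep_hours]
dev_coffee = [y - mean_coffee for y in coffee_cups]

# Step 3: multiply the paired deviations and add them up.
sum_products = sum(dx * dy for dx, dy in zip(dev_sleep, dev_coffee))

# Step 4: scale by the spread of each variable.
spread = math.sqrt(
    sum(dx ** 2 for dx in dev_sleep) * sum(dy ** 2 for dy in dev_coffee)
)
r = sum_products / spread

print(f"r = {r:.3f}")  # r = -0.971
```

Every product in step 3 is zero or negative here, which is why the result lands close to -1: on these toy days, more sleep goes with less coffee.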

Why this matters more than memorizing formulas

If you do this once by hand, a few useful things click:

- Direction matters because the sign tells you whether variables move together or in opposite directions.

- Spread matters because wildly variable data can weaken an apparent relationship.

- Outliers matter because one weird row can bend the answer more than you’d expect.
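The outlier point is easy to see with numbers. The sketch below uses made-up data and a hand-rolled `pearson_r` helper (a hypothetical name, not a library function) to compare the correlation before and after one odd row is added.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation built from paired deviations."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    dx = [x - mean_x for x in xs]
    dy = [y - mean_y for y in ys]
    numerator = sum(a * b for a, b in zip(dx, dy))
    denominator = math.sqrt(sum(a * a for a in dx) * sum(b * b for b in dy))
    return numerator / denominator

# Made-up data with a fairly clear upward trend.
x = [1, 2, 3, 4, 5]
y = [2, 1, 4, 3, 5]
r_clean = pearson_r(x, y)

# Same data plus one odd row: a large x paired with a tiny y.
r_with_outlier = pearson_r(x + [10], y + [0])

print(f"without the odd row: {r_clean:.2f}")         # 0.80
print(f"with the odd row:    {r_with_outlier:.2f}")  # -0.32
```

One extra row is enough to flip the sign, which is the kind of thing you only catch if you look at the data and not just the summary.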

Practical rule: Solve one clean example manually before you automate anything. It’s the fastest insurance policy against garbage output.

A similar lesson applies to t-tests. Even if software computes the statistic instantly, you should still know the logic. You compare a difference to the amount of noise around that difference. Big separation with low variability pushes you toward a stronger signal. Tiny separation with noisy data does not.
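Here is that signal-versus-noise idea as a rough sketch. It computes the unequal-variance (Welch) form of the t statistic with Python's standard library. The two pairs of groups are invented for illustration, and `welch_t` is a hypothetical helper name.

```python
import math
import statistics

def welch_t(a, b):
    """t statistic: difference in means divided by its standard error."""
    diff = statistics.mean(a) - statistics.mean(b)
    noise = math.sqrt(
        statistics.variance(a) / len(a) + statistics.variance(b) / len(b)
    )
    return diff / noise

# Big separation, low variability (made-up numbers).
t_clear = welch_t([14, 15, 13, 14, 14], [10, 11, 9, 10, 10])

# Tiny separation, noisy data (made-up numbers).
t_noisy = welch_t([11, 5, 17, 8, 14], [10, 4, 16, 7, 13])

print(f"clear signal: t = {t_clear:.2f}")  # 8.94
print(f"noisy signal: t = {t_noisy:.2f}")  # 0.33
```

The numerator is the difference, the denominator is the noise. Turning the statistic into a p-value still needs the t distribution, which is a job for software.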

That intuition also helps when you scan handwritten homework or textbook equations into a tool and need to convert formulas from notes into something machine-readable.

What hand-solving does not do well

Manual solving is bad at scale. It’s slow. It’s error-prone. One bad minus sign and suddenly coffee causes sleep, which would be nice, but no.

Use the manual route to learn the mechanics and verify small examples. Don’t use it as your full production workflow unless you enjoy pain as a hobby.

Let Python and R Do the Heavy Lifting

Once you understand the bones of the calculation, code becomes the obvious next step. You stop babysitting arithmetic and start building a process you can rerun.

That matters for homework, but it matters even more for research and recurring analysis. If your professor changes the dataset or your manager adds a few rows five minutes before a meeting, you don’t want to recalculate everything with a calculator and a trembling hand.

Python for quick, repeatable stats

Here’s a simple Python example using the same sleep and coffee idea:

```python
import pandas as pd
from scipy.stats import pearsonr

# Small example dataset
data = pd.DataFrame({
    "sleep_hours": [8, 7, 6, 5, 4],
    "coffee_cups": [1, 2, 2, 3, 4],
})

# Correlation test
corr, p_value = pearsonr(data["sleep_hours"], data["coffee_cups"])

print("Correlation:", corr)
print("P-value:", p_value)
```

What this buys you:

- Less arithmetic risk

- Easy edits when data changes

- A script you can reuse later

- A record of exactly what you did

If your work starts moving beyond toy examples into apps, dashboards, or internal tools, it helps to understand the surrounding ecosystem too, so your scripts can become actual products instead of lone files named final_final_v2.py.

R is still a stats machine

R stays popular for good reason. It’s concise, strong for statistical work, and has a culture built around analysis.

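```r
sleep_hours <- c(8, 7, 6, 5, 4)  # same toy data as the Python example
coffee_cups <- c(1, 2, 2, 3, 4)
result <- cor.test(sleep_hours, coffee_cups)
print(result)
```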

That one command gives you a lot: a correlation estimate, a p-value, a confidence interval, and a standard structure that many courses and labs already expect.

What code gets right, and where it bites back

Code shines when you need reproducibility. If you make a chart, clean data, run a test, and export results in one script, you can rerun the same pipeline whenever the input changes.
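A rerunnable pipeline can be as small as one function. The sketch below is an assumption-heavy illustration: the CSV text, the column names, and the `run_analysis` and `pearson_r` helpers are all invented here, and it uses only the standard library so it runs anywhere.

```python
import csv
import io
import math

def pearson_r(xs, ys):
    """Pearson correlation built from paired deviations."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    dx = [x - mean_x for x in xs]
    dy = [y - mean_y for y in ys]
    numerator = sum(a * b for a, b in zip(dx, dy))
    return numerator / math.sqrt(sum(a * a for a in dx) * sum(b * b for b in dy))

def run_analysis(csv_text):
    """Clean the rows, compute the correlation, and return a small report."""
    rows = csv.DictReader(io.StringIO(csv_text))
    # Cleaning step: drop rows where either value is blank.
    pairs = [
        (float(row["sleep_hours"]), float(row["coffee_cups"]))
        for row in rows
        if row["sleep_hours"].strip() and row["coffee_cups"].strip()
    ]
    sleep, coffee = zip(*pairs)
    return {"n": len(pairs), "r": round(pearson_r(sleep, coffee), 3)}

original = "sleep_hours,coffee_cups\n8,1\n7,2\n6,2\n5,3\n4,4\n"
# Someone adds two rows five minutes before the meeting, one of them incomplete.
updated = original + "9,1\n,3\n"

print(run_analysis(original))  # {'n': 5, 'r': -0.971}
print(run_analysis(updated))
```

When the data changes, you change the input and call the same function again. Nothing else gets retyped.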

But code has a very real learning curve.

- Syntax errors happen.

- Package issues happen.

- Wrong defaults happen.

- Misread outputs happen.
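“Wrong defaults” is the sneakiest item on that list. One familiar case is standard deviation: NumPy's `np.std` divides by n unless you pass `ddof=1`, while pandas' `Series.std` and R's `sd()` divide by n - 1. The sketch below shows the same gap using Python's standard library and made-up numbers.

```python
import statistics

scores = [4, 5, 6, 7, 8]  # made-up data

population_sd = statistics.pstdev(scores)  # divides by n
sample_sd = statistics.stdev(scores)       # divides by n - 1

print(f"population SD: {population_sd:.3f}")  # 1.414
print(f"sample SD:     {sample_sd:.3f}")      # 1.581
```

Neither number is a bug. They answer different questions, and on a five-row homework problem the difference is big enough to cost you the mark.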

That’s why a coding assistant can save time even for people who already know the basics. It can help with generating snippets, debugging, or translating plain-English analysis tasks into scripts.


When code is the right answer

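Use Python or R when the same analysis has to be rerun, the data keeps changing, or you need a record of exactly what you did.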

If hand-solving teaches you what’s happening, code gives you an advantage.

Your New AI Partner: A Math Statistics Solver

General AI made stats more accessible, but also messier. You can paste in a question and get an explanation, ask for Python code, request an interpretation, then ask for a cleaner write-up. That’s useful. It also gets fragmented fast.

A lot of people end up bouncing between a chatbot, a spreadsheet, a PDF, a code editor, and a browser full of tabs that all somehow matter. The analysis itself might take minutes. The workflow mess takes the rest of the afternoon.

General AI is helpful, but scattered

Used carefully, a general AI model can do several useful things:

- suggest which statistical test fits your question

- explain assumptions in plain English

- generate starter code in Python or R

- translate output into a report-ready paragraph

That’s already better than many standalone calculators. A basic calculator often gives only the final number. It doesn’t help you decide whether the number means anything.

The bigger issue is fragmentation among available tools. Standalone stats calculators are too basic, and general AI chat is too scattered. For example, user threads on platforms like Reddit (r/statistics) in 2025 show that roughly 70% of student and researcher queries remain unsolved by simple tools like Photomath because those tools lack true statistical and document analysis integration, a gap Zemith's multi-tool workspace is designed to fill.

That tracks with what many people run into in practice. Solving the formula is only one part of the job. You also need the context around it.

The real upgrade is an integrated workflow

An all-in-one workspace is often a better solution than a collection of separate tools. Zemith combines document chat, coding help, deep research, and writing tools in one place, which is useful when your stats problem lives inside a paper draft, a class handout, a dataset description, and a final report at the same time.

That setup fits the modern version of a math statistics solver better than a standalone calculator does. You can upload supporting material, ask the model to interpret your notes, generate code for the analysis, then turn the result into readable output without hopping across five tools.


A solver becomes much more valuable when it can explain the method, inspect the source material, and help draft the final answer.

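Comparison of Statistical Solving Methods

- By hand: builds intuition and verifies small examples, but is slow and error-prone at scale.

- Python or R: repeatable and easy to rerun when data changes, but comes with a learning curve.

- AI: helps with test selection, explanation, starter code, and write-ups, but needs supervision.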

The catch with AI

AI still needs supervision. It can choose the wrong test if your prompt is vague. It can write code that almost works, which is coder for “annoying.” It can produce a polished explanation that sounds smart but ignores a broken assumption.

That’s why I treat AI as a partner, not an oracle. It’s strongest when you give it clean inputs, a clear question, and a habit of checking the output. It also helps to use it for the whole reporting workflow, especially if you need to turn numbers into something readable. That’s where tools focused on that translation step become practical.

Is Your Answer Right? How to Sanity-Check Your Solver

A result can be mathematically correct and still be wrong for your problem. That’s the uncomfortable truth.

Maybe you ran the wrong test. Maybe the data was messy. Maybe one outlier did all the talking. Maybe your software output is fine, but your interpretation is nonsense. This is why every math statistics solver needs a second layer. A sanity check.

A practical validation checklist

Run through these five checks before you trust the answer:

1. Review the input data. Look for missing values, weird categories, duplicate rows, and obvious entry mistakes. Plenty of bad analyses begin with one spreadsheet column that got sorted independently. Chaos, but in Excel form.

2. Check the test assumptions. Don’t run a method just because it’s familiar. Ask whether your variables, sample structure, and distribution shape fit the test.

3. Ask whether the result makes real-world sense. If the output says coffee causes happiness, but the data came from five interns during finals week, maybe pump the brakes.

4. Cross-check with a second method. If possible, verify with another approach. Compare hand work against code, or code against an AI explanation. Agreement doesn’t guarantee truth, but disagreement is a red flag.

5. Plot the data. A scatterplot, boxplot, or histogram catches problems fast. Visuals expose patterns that summaries can hide.
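The first check, reviewing the input data, is the easiest one to automate. Below is a minimal sketch that assumes the data arrives as a list of dictionaries. The `review_rows` helper and the column names are hypothetical, and it only counts missing values and repeated rows.

```python
def review_rows(rows, required=("sleep_hours", "coffee_cups")):
    """Count missing values and repeated rows before any test is run."""
    missing = sum(
        1 for row in rows for key in required if row.get(key) in (None, "")
    )
    seen = set()
    duplicates = 0
    for row in rows:
        fingerprint = tuple(row.get(key) for key in required)
        if fingerprint in seen:
            duplicates += 1
        seen.add(fingerprint)
    return {
        "rows": len(rows),
        "missing_values": missing,
        "duplicate_rows": duplicates,
    }

rows = [
    {"sleep_hours": 8, "coffee_cups": 1},
    {"sleep_hours": 7, "coffee_cups": 2},
    {"sleep_hours": 7, "coffee_cups": 2},     # repeated row
    {"sleep_hours": None, "coffee_cups": 3},  # missing value
]

print(review_rows(rows))
# {'rows': 4, 'missing_values': 1, 'duplicate_rows': 1}
```

A repeated row isn’t automatically an error, since two days can share the same values. The report just tells you where to look before you trust the test.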

What to question besides the p-value

A lot of learners stop at significance. That’s too thin.

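Ask how large the effect is, how precise the estimate is, and whether the sample is big enough to support the claim.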
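Effect size and a confidence interval can be computed alongside the p-value. The sketch below is an illustration under stated assumptions: the two groups are made-up scores, Cohen's d uses the pooled standard deviation, and the interval uses a normal approximation, which is too narrow for samples this small (a t-based interval would be wider).

```python
import math
import statistics

group_a = [72, 75, 78, 71, 74, 77, 73, 76]  # made-up scores
group_b = [70, 73, 76, 69, 72, 75, 71, 74]  # made-up scores

diff = statistics.mean(group_a) - statistics.mean(group_b)
var_a = statistics.variance(group_a)
var_b = statistics.variance(group_b)
n_a, n_b = len(group_a), len(group_b)

# Cohen's d: the difference in means in units of pooled standard deviation.
pooled_sd = math.sqrt(((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2))
cohens_d = diff / pooled_sd

# Rough 95% interval for the difference, using a normal approximation.
z = statistics.NormalDist().inv_cdf(0.975)
std_error = math.sqrt(var_a / n_a + var_b / n_b)
ci_low, ci_high = diff - z * std_error, diff + z * std_error

print(f"difference = {diff:.1f}, d = {cohens_d:.2f}")     # difference = 2.0, d = 0.82
print(f"approx. 95% CI: ({ci_low:.2f}, {ci_high:.2f})")   # (-0.40, 4.40)
```

The effect size looks large, yet the interval still crosses zero. With eight scores per group, that combination says “promising but unproven,” which a lone p-value would not tell you.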

If you work on experiments, product data, or site testing, it helps to understand the business side of significance too, so the stats idea connects to real optimization decisions without turning into mush.

Key takeaway: Never trust a result you haven’t challenged at least once.

For research projects, I also like a basic paper-trail habit. Save the dataset version, the script or prompt, and the final output together. If you revisit the analysis later, you won’t have to reconstruct your logic from memory and vibes.
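One lightweight way to keep that paper trail is a small record saved next to the analysis. This is only a sketch: the `build_record` helper, the script name, and the field names are hypothetical, and the dataset is fingerprinted with a SHA-256 hash so you can tell later whether the file changed.

```python
import hashlib
import json

def build_record(dataset_bytes, script_name, result):
    """Bundle a dataset fingerprint, the script name, and the output."""
    return {
        "dataset_sha256": hashlib.sha256(dataset_bytes).hexdigest(),
        "script": script_name,
        "result": result,
    }

# Stand-in for the raw bytes of the dataset file.
dataset = b"sleep_hours,coffee_cups\n8,1\n7,2\n6,2\n5,3\n4,4\n"

record = build_record(
    dataset,
    "sleep_coffee_correlation.py",  # hypothetical script name
    {"r": -0.971, "n": 5},
)

# In practice this would be written to a file stored beside the dataset.
print(json.dumps(record, indent=2))
```

If the hash in an old record no longer matches the file on disk, the data changed after the analysis ran, and the old result shouldn’t be quoted as current.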

The Right Statistical Tool for Every Job

The right math statistics solver depends on the job in front of you.

If you’re learning a concept, do one example by hand. That’s how you build instinct. You start recognizing what correlation, variance, and test statistics are measuring instead of treating them like random symbols from the textbook dimension.

If you’re working repeatedly with real datasets, code is the practical choice. Python and R give you a workflow you can rerun, inspect, and adapt. They turn statistics from a one-time performance into a repeatable system.

If your work spans notes, PDFs, prompts, code, interpretation, and final write-ups, an integrated AI workflow makes more sense than a pile of single-purpose tools. That’s the point where a solver stops being a calculator and starts becoming a workspace.

You don’t need to pick one identity forever. Good analysts switch modes. They use hand methods to learn, code to scale, and AI to reduce friction around the messy parts that surround the math.

If you’re tired of hopping between separate tools for stats, coding, documents, and write-ups, try Zemith. It gives you one workspace for problem-solving, research, and drafting, which is often the difference between getting an answer and finishing the whole assignment or project.

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

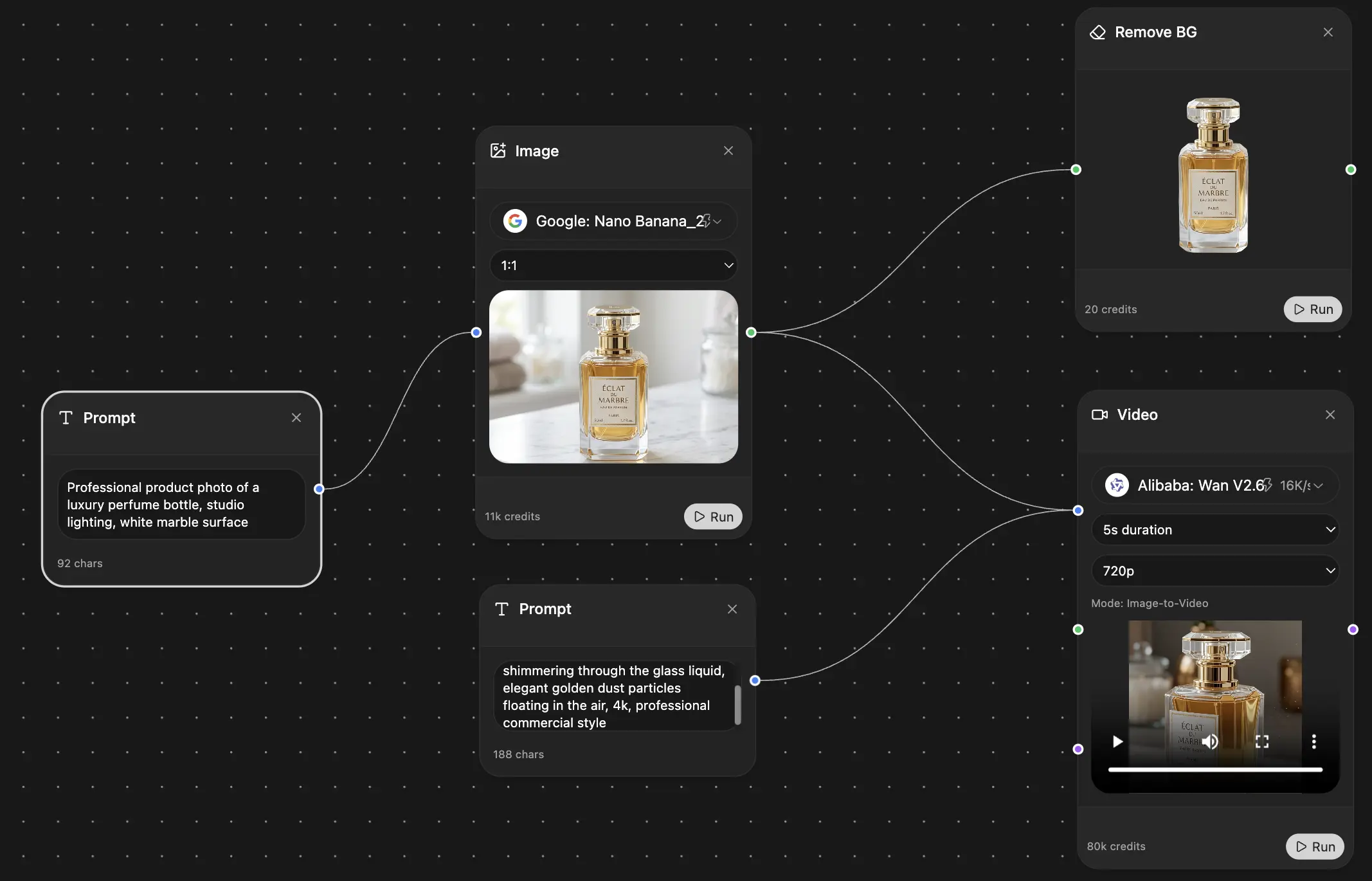

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers

What Our Users Say

Great Tool after 2 months usage

"I love the way multiple tools they integrated in one platform. Going in the right direction."

— simplyzubair

Best in Kind!

"The quality of data and sheer speed of responses is outstanding. I use this app every day."

— barefootmedicine

Simply awesome

"The credit system is fair, models are perfect, and the discord is very responsive. Quite awesome."

— MarianZ

Great for Document Analysis

"Just works. Simple to use and great for working with documents. Money well spent."

— yerch82

Great AI site with accessible LLMs

"The organization of features is better than all the other sites — even better than ChatGPT."

— sumore

Excellent Tool

"It lives up to the all-in-one claim. All the necessary functions with a well-designed, easy UI."

— AlphaLeaf

Well-rounded platform with solid LLMs

"The team clearly puts their heart and soul into this platform. Really solid extra functionality."

— SlothMachine

Best AI tool I've ever used

"Updates made almost daily, feedback is incredibly fast. Just look at the changelogs — consistency."

— reu0691