Mastering the Partial Differential Equation Solver

Unlock the power of a partial differential equation solver. Learn numerical methods, stability, code examples, and how to choose the best one for your projects.

You are probably here for one of three reasons.

You have a model in your head, a deadline on your calendar, and a PDE on your screen. Or you opened a library like FEniCS, SciPy, or FiPy and felt that familiar mix of excitement and dread. Or someone casually said, “just use a partial differential equation solver,” as if that were as straightforward as opening a spreadsheet.

It is not magic, but it is also not reserved for mathematicians in tweed jackets guarding chalkboards.

A good partial differential equation solver is just a way to turn laws of change into numbers your computer can work with. Once that clicks, the topic gets much less intimidating. You stop asking “what is the one best solver?” and start asking better questions: What shape is my domain? What physics matters? How accurate do I need to be? How much implementation pain can I tolerate before lunch?

What Is a PDE Solver and Why Should You Care

A partial differential equation, or PDE, describes how something changes across space, time, or both.

Heat spreading through a metal rod. Air moving around a drone. Pressure changing in a pipe. A ripple traveling across water. These are all situations where the state at one point depends on nearby points, and often on what happened a moment ago.

The pizza test

Take a pizza out of the oven.

The crust cools faster than the center. The cheese near the edge behaves differently from the cheese in the middle. If you wanted to predict the temperature everywhere on the pizza over time, regular algebra would wave a white flag. A PDE can describe that evolution.

A partial differential equation solver is the practical tool that turns that description into an approximation you can compute. It translates “how the system changes” into many small arithmetic steps.

That translation matters because very few PDEs hand you a neat closed-form answer. Most useful problems are messy. Boundaries are awkward. Materials vary. Initial conditions are not polite.

Why developers and researchers run into PDEs

You do not need to be writing a physics engine for NASA to care.

You may run into PDEs when you are:

- Modeling diffusion in heat transfer, image smoothing, or chemical transport

- Simulating flow for CFD, porous media, or environmental models

- Studying fields such as electromagnetics, acoustics, or stress

- Building surrogates for optimization or inverse design workflows

- Analyzing spatiotemporal data from sensors, experiments, or simulations

The field itself has deep roots. The study of PDEs began in the 18th century through the work of Euler, d'Alembert, Lagrange, and Laplace, and that history helped turn PDEs from empirical modeling tools into a rigorous mathematical discipline that underpins modern computation.

Why the solver is the central story

Most beginners focus on the equation itself.

Practitioners focus on the solver. That is where the trade-offs live. Accuracy, runtime, memory use, implementation complexity, and numerical headaches all show up there.

A PDE tells you the rules. The solver decides whether those rules become a useful simulation or a long evening of debugging.

If your background is more software than math, the learning curve feels a lot like learning programming in the first place. You do not master everything at once. You build intuition by solving small, concrete problems. That same mindset shows up in good guides on learning to code.

And yes, your first simulation may look wrong.

That is normal. Welcome to computational science, where “the graph seems haunted” is a valid intermediate result.

The Big Three Numerical Methods Explained

Most practical PDE work starts with three families of methods: Finite Difference Method, Finite Element Method, and Finite Volume Method.

They all chase the same goal: approximate the solution with something a computer can calculate.

Finite Difference Method

Finite Difference Method (FDM) is the easiest place to start.

Think of laying graph paper over your domain. At each grid point, you estimate derivatives using nearby values. Instead of the exact slope or curvature, you use small differences between neighboring points.

That makes FDM feel friendly in problems like a 1D rod, a rectangular plate, or a simple diffusion model on a uniform grid.

A mental picture helps:

- You know the temperature at many grid points.

- You estimate how curved the temperature field is around each point.

- You update values step by step.

That is the core idea.
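The "estimate how curved the field is" step is usually the central second difference, (u(x + h) - 2u(x) + u(x - h)) / h². A quick way to build trust in it is to try it on a function whose second derivative you already know. This minimal sketch uses sin(x) at x = 1 (an arbitrary choice) and shows the error shrinking roughly fourfold each time h is halved, which is what a second-order approximation should do.

```python
import math

def second_difference(f, x, h):
    """Central finite-difference estimate of f''(x) from the points x - h, x, x + h."""
    return (f(x + h) - 2.0 * f(x) + f(x - h)) / h**2

x0 = 1.0
exact = -math.sin(x0)  # the second derivative of sin(x) is -sin(x)

errors = []
for h in (0.1, 0.05, 0.025):
    approx = second_difference(math.sin, x0, h)
    errors.append(abs(approx - exact))
    print(f"h = {h:<6} estimate = {approx:.8f}  error = {errors[-1]:.2e}")

# Halving h should cut the error by roughly 4x (second-order accuracy).
print([round(errors[i] / errors[i + 1], 2) for i in range(len(errors) - 1)])
```

The same three-point stencil is what the heat-equation loop later in this article applies at every interior grid point.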

Why beginners like it

FDM is appealing because it is direct.

You can implement a basic heat equation solver in NumPy without needing a giant framework. You can print arrays, inspect values, and understand what each line is doing. For learning, this is gold.

Where it starts to complain

FDM gets grumpy when your geometry stops being simple.

A rectangle is easy. A turbine blade, artery, or oddly shaped battery pack is not. You can still do it, but the setup becomes awkward fast.

Finite Element Method

Finite Element Method (FEM) handles complicated geometry much better.

Instead of grid paper, think of building the domain from many small pieces, often triangles or tetrahedra. Each element carries a local approximation, and the full solution comes from assembling all those local pieces into a global system.

If FDM is graph paper, FEM is a carefully fitted mosaic.

That is why FEM is so common in structural analysis, biomechanics, electromagnetics, and many engineering simulations with irregular shapes.

Why FEM is powerful

FEM shines when you need to represent:

- Irregular geometry such as curved or complex domains

- Mixed materials where properties vary in space

- Boundary conditions that are more realistic than textbook boxes

It asks more from you up front. Meshing, weak forms, basis functions, and assembly sound like a committee designed them. But once the workflow clicks, FEM becomes a very flexible tool.
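To see what "assembly" means without a framework, here is a minimal sketch of the simplest case: linear elements for the 1D problem -u'' = 1 on [0, 1] with u = 0 at both ends, whose exact solution is u = x(1 - x)/2. Each element contributes a small 2x2 stiffness block and a 2-entry load vector (both derived from the weak form), and the loop adds those local pieces into one global system. The function name, the constant source, and the mesh are assumptions for illustration; libraries such as FEniCS generate these pieces from the weak form for you.

```python
import numpy as np

def fem_1d_poisson(nodes, f=1.0):
    """Linear finite elements for -u'' = f with u = 0 at both ends (hypothetical helper).

    Assumes a constant source f, so the element load integrals are computed exactly.
    """
    n = len(nodes)
    K = np.zeros((n, n))  # global stiffness matrix
    b = np.zeros(n)       # global load vector
    for e in range(n - 1):
        h = nodes[e + 1] - nodes[e]
        # Element stiffness from the weak-form term: integral of u' v' dx
        k_local = np.array([[1.0, -1.0], [-1.0, 1.0]]) / h
        # Element load: integral of f * v dx with the two hat functions
        b_local = f * h / 2.0 * np.ones(2)
        idx = [e, e + 1]
        K[np.ix_(idx, idx)] += k_local  # assembly: add local pieces into the global system
        b[idx] += b_local
    # Dirichlet conditions: keep the end values at zero, solve for interior nodes only.
    u = np.zeros(n)
    u[1:-1] = np.linalg.solve(K[1:-1, 1:-1], b[1:-1])
    return u

# A deliberately non-uniform mesh: elements do not need to be the same size.
nodes = np.array([0.0, 0.05, 0.1, 0.2, 0.35, 0.5, 0.7, 0.85, 1.0])
u = fem_1d_poisson(nodes)
exact = nodes * (1.0 - nodes) / 2.0
print("max nodal error:", np.max(np.abs(u - exact)))
```

The non-uniform mesh is the point: elements can be sized freely, which is what makes FEM comfortable with irregular geometry. In 1D, linear elements reproduce the exact nodal values for this particular problem, so the printed error is at round-off level; do not expect that in 2D or 3D.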

Finite Volume Method

Finite Volume Method (FVM) is built around conservation.

Instead of focusing only on pointwise derivatives, FVM tracks how much of a quantity enters or leaves small control volumes. That makes it especially natural for fluid flow, transport problems, and conservation laws.

If you care about mass, momentum, or energy being conserved in a physically meaningful way, FVM earns your attention.

A simple analogy is bookkeeping.

Each cell is a little account. Flux goes in. Flux goes out. The balance changes. No mysterious disappearance of heat, fluid, or species concentration. At least in principle. In practice, your discretization still needs to behave.
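The bookkeeping picture translates almost line for line into code. This minimal sketch assumes 1D diffusion in a closed tube (zero flux through both ends) with a made-up initial slab of concentration. Each face carries one flux, and each cell gains what enters and loses what leaves. Because every interior flux is subtracted from one cell and added to its neighbour, the total amount is conserved up to round-off at every step.

```python
import numpy as np

# Hypothetical setup: 1D diffusion in a closed tube, no flux through either end.
n_cells = 50
length = 1.0
dx = length / n_cells
centers = (np.arange(n_cells) + 0.5) * dx
D = 0.01                  # diffusion coefficient
dt = 0.4 * dx**2 / D      # explicit step, kept below the dx**2 / (2 * D) limit

c = np.where(np.abs(centers - 0.3) < 0.1, 1.0, 0.0)  # initial "slab" of concentration
total_before = c.sum() * dx

for _ in range(500):
    # Flux across each interior face from Fick's law: F = -D * dc/dx
    interior_flux = -D * (c[1:] - c[:-1]) / dx
    # Zero flux at both boundary faces: nothing enters or leaves the domain.
    flux = np.concatenate(([0.0], interior_flux, [0.0]))
    # Cell balance: flux in through the left face minus flux out through the right face.
    c = c - dt / dx * (flux[1:] - flux[:-1])

total_after = c.sum() * dx
print(total_before, total_after)
```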


The iterative solver layer inside FDM

Even after discretization, you still need to solve the resulting algebraic system.

That is where Jacobi, Gauss-Seidel, and Successive Over-Relaxation (SOR) often enter. Within finite difference methods, these solvers come with clear trade-offs. Jacobi is highly parallelizable and works well on GPUs, while SOR is often reported to converge 2-5x faster on serial hardware for common elliptic PDEs.
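To make the trade-off concrete, here is a minimal sketch on an assumed model problem, the 1D Poisson equation -u'' = 1 with zero boundary values. Jacobi updates every point from the previous iterate, so it vectorizes (and parallelizes) naturally; Gauss-Seidel and SOR reuse freshly updated neighbours, which makes each sweep sequential but cuts the iteration count. The grid size, the tolerance, and the textbook optimal ω = 2/(1 + sin(πh)) for this particular problem are illustrative choices, and the counts will change with them.

```python
import numpy as np

def residual_norm(u, f, h):
    """Max-norm residual of the discrete problem -u'' = f with u = 0 at both ends."""
    padded = np.concatenate(([0.0], u, [0.0]))
    laplacian = (padded[2:] - 2.0 * padded[1:-1] + padded[:-2]) / h**2
    return np.max(np.abs(f + laplacian))

def jacobi(f, h, tol=1e-8, max_iter=100_000):
    u = np.zeros_like(f)
    for k in range(1, max_iter + 1):
        padded = np.concatenate(([0.0], u, [0.0]))
        # Every point uses only values from the previous iterate: one vectorized update.
        u = 0.5 * (padded[2:] + padded[:-2] + h**2 * f)
        if residual_norm(u, f, h) < tol * np.max(np.abs(f)):
            return u, k
    return u, max_iter

def sor(f, h, omega=1.0, tol=1e-8, max_iter=100_000):
    """Point SOR sweeps; omega = 1.0 is plain Gauss-Seidel."""
    n = len(f)
    u = np.zeros(n)
    for k in range(1, max_iter + 1):
        for i in range(n):
            left = u[i - 1] if i > 0 else 0.0       # already updated in this sweep
            right = u[i + 1] if i < n - 1 else 0.0  # still from the previous sweep
            gauss_seidel_value = 0.5 * (left + right + h**2 * f[i])
            u[i] += omega * (gauss_seidel_value - u[i])
        if residual_norm(u, f, h) < tol * np.max(np.abs(f)):
            return u, k
    return u, max_iter

n = 30                                        # interior grid points
h = 1.0 / (n + 1)
f = np.ones(n)                                # right-hand side of -u'' = 1
omega_opt = 2.0 / (1.0 + np.sin(np.pi * h))   # textbook optimum for this model problem

_, it_jacobi = jacobi(f, h)
_, it_gs = sor(f, h, omega=1.0)
u_sor, it_sor = sor(f, h, omega=omega_opt)
print(f"Jacobi: {it_jacobi}  Gauss-Seidel: {it_gs}  SOR (omega = {omega_opt:.3f}): {it_sor}")
```

Iteration counts are only half the story: a Jacobi sweep maps cleanly onto parallel hardware, while a Gauss-Seidel or SOR sweep has a built-in ordering dependency.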

So what does this mean in practice

If you are a developer prototyping a solver, your choice often starts with workflow, not elegance.

- Choose FDM when the domain is simple and you want something working quickly.

- Choose FEM when geometry is central to the problem.

- Choose FVM when conservation and transport dominate the physics.

If your first goal is understanding, use the simplest method your geometry allows. Fancy methods are wonderful. So is finishing.

A lot of early frustration comes from using an advanced tool for a beginner-sized question. That is like learning to fry an egg by first designing a commercial kitchen.

How to Choose the Right Solver Algorithm

Most solver advice online sounds like this: “it depends.”

Annoying, yes. Also true.

The useful version of “it depends” is a checklist. You are choosing a tool, not joining a religion.

Start with the shape of the problem

Geometry rules a surprising amount of the decision.

If your domain is a line, rectangle, or box with tidy boundaries, FDM is often the fastest route from idea to results. If the domain looks like something a CAD engineer exported after too much coffee, FEM usually makes more sense.

FVM often becomes attractive when your physical model revolves around fluxes and conservation, especially in fluid and transport problems.

A practical geometry test

Ask this before anything else:

- Can I place a structured grid on the domain without ugly hacks?

- Do boundaries matter a lot to the physics?

- Will I need local mesh refinement near corners, interfaces, or gradients?

If the answer to the first is yes, FDM stays in the running.

If the next two dominate, FEM or FVM deserve stronger consideration.

Match the method to the physics

Different PDEs reward different instincts.

A diffusion problem on a clean rectangular domain is a gift to finite differences. A stress analysis in a weird mechanical part is classic finite element territory. A flow or transport problem where conservation matters cell by cell often leans toward finite volume.

That sounds obvious after the fact. Before the fact, people still pick tools based on the first tutorial they found.

That is how innocent researchers end up forcing beautiful physics through a terrible discretization choice.

Think about implementation cost

Many projects live or die based on this consideration.

A mathematically elegant solver is not helpful if your team cannot implement, debug, or maintain it. Solver choice is also a software architecture choice. You are deciding how much abstraction, library dependence, and complexity your codebase can handle. Good engineering judgment here looks a lot like any other software architecture trade-off.


Accuracy versus effort

A common mistake is chasing the most advanced method before defining the goal.

If you need a first-pass answer, a simpler method may be the right choice. If your simulation will inform design choices, experimental planning, or scientific conclusions, then setup quality matters more.

Ask yourself four blunt questions

What decision will this simulation support? A rough trend and a publishable result are not the same target.

How ugly is the geometry? Be honest. “Mostly rectangular except for the complicated parts” means not rectangular.

What hardware do I have? Parallel-friendly methods matter if you plan to scale.

Who will maintain this code? Future-you is a stakeholder. Future-you has opinions.

The best partial differential equation solver is usually the one that matches your domain, your physics, and your available time. Not the one with the most intimidating documentation.

A sensible default path

For many developers and researchers, this progression works well:

- First pass: FDM on a reduced or simplified geometry

- Second pass: move to FEM or FVM if geometry or conservation demands it

- Third pass: optimize the implementation, improve meshing, and validate carefully

That path gives you insight early and sophistication later.

It also reduces the risk of spending a week configuring a framework only to discover your boundary conditions were wrong on day one.

Stability and Accuracy: Don't Let Your Simulation Explode

A simulation can fail in two very different ways.

It can explode spectacularly, with values shooting off into nonsense. Or it can remain calm, smooth, and completely wrong. The second one is more dangerous because it looks respectable.

Stability is the no-chaos rule

Numerical stability asks whether small errors stay under control as the computation proceeds.

Every simulation accumulates approximation errors. Roundoff, discretization, iterative solver tolerances. Stability decides whether those errors remain manageable or become the main event.

A good mental model is walking downhill.

Take steps that are too large on a steep slope and you lose balance fast. In time-dependent PDEs, your time step can behave exactly like that oversized step.

Accuracy is a separate issue

A stable simulation is not automatically accurate.

You can choose tiny, very safe steps and still solve the wrong discrete problem, use poor boundary conditions, or smear out important physics. Stability means the method behaves. Accuracy means the result reflects reality well enough for your purpose.

Keep the distinction straight

- Stable but inaccurate: the run finishes, the plot looks smooth, the answer is still not useful

- Accurate in principle but unstable in practice: the method could work, but your parameter choices wreck it

- Stable and accurate: everyone smiles and pretends this was inevitable

The CFL idea without the scary formula

For many explicit time-stepping schemes, there is a practical speed limit.

People often meet this through the Courant-Friedrichs-Lewy condition, usually called the CFL condition. You do not need the formula first. You need the intuition.

Information should not numerically travel farther in one step than your discretization can responsibly handle. If your time step is too large relative to your grid and the physics, the method can become unstable.

That is the simulation equivalent of trying to read every fifth page of a novel and then claiming the plot still makes sense.

If your output suddenly oscillates, blows up, or turns into jagged nonsense, check the time step before accusing the laws of physics.
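Strictly speaking, the CFL condition is stated for wave and advection problems: for the first-order upwind scheme, the Courant number |c|Δt/Δx must stay at or below 1. Explicit diffusion has an analogous limit: for the forward-time, centred-space (FTCS) heat equation in 1D, αΔt/Δx² must not exceed 1/2. This minimal sketch wraps both rules of thumb in small helper functions (the names are hypothetical) and then runs FTCS just below and just above the diffusion limit, starting from a single hot cell.

```python
import numpy as np

def max_stable_dt_diffusion(alpha, dx):
    """Explicit FTCS heat equation in 1D: keep alpha * dt / dx**2 <= 1/2."""
    return 0.5 * dx**2 / alpha

def max_stable_dt_advection(speed, dx, courant=1.0):
    """First-order upwind advection: keep the Courant number |speed| * dt / dx <= 1."""
    return courant * dx / abs(speed)

def run_ftcs(r, nx=51, steps=200):
    """Run FTCS with a fixed r = alpha * dt / dx**2 and return the largest |u| seen."""
    u = np.zeros(nx)
    u[nx // 2] = 1.0  # a single hot cell has plenty of high-frequency content
    peak = 1.0
    for _ in range(steps):
        # The right-hand side is evaluated from the old values before assignment.
        u[1:-1] = u[1:-1] + r * (u[2:] - 2.0 * u[1:-1] + u[:-2])
        peak = max(peak, np.max(np.abs(u)))
    return peak

# Parameters from the heat-equation example: alpha = 0.01, dx = 0.02.
print("largest stable dt:", max_stable_dt_diffusion(0.01, 0.02))
print("peak with r = 0.4:", run_ftcs(0.4))
print("peak with r = 0.6:", run_ftcs(0.6))
```

With r = 0.4 the peak never exceeds its starting value. With r = 0.6 the highest-frequency wiggles grow by a factor of roughly 1.4 every step, so the run explodes. Nothing about the physics changed, only the step size.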

Three habits that save hours

Run a coarse sanity check

Start with a tiny test problem where you know what “reasonable” looks like. If heat should diffuse smoothly and your curve grows teeth, something is wrong.

Refine one thing at a time

Change the grid spacing or time step, not both together. Otherwise you will not know what fixed the issue.

Debug numerics like code

Boundary conditions, indexing, sign errors, and update order all matter. Numerical bugs are still bugs, and the usual software debugging mindset applies here more than you might expect.

What convergence feels like in practice

Convergence asks whether your numerical solution approaches a consistent answer as you refine the discretization.

A practical test is simple. Run the same problem with finer grids or smaller time steps and compare the results. If the solution settles down, that is a good sign. If it changes wildly every time, you are not done.

This part is less glamorous than writing the solver.

It is also the difference between a simulation and a colorful rumor.
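A grid refinement study is easy to automate when an exact solution is available. This minimal sketch assumes the heat equation on [0, 1] with α = 1, zero boundary values, and the initial profile sin(πx), whose exact solution is exp(-απ²t)·sin(πx). It keeps αΔt/Δx² fixed at 0.25 while halving Δx, so Δt shrinks as well, and reports how the error changes between levels.

```python
import numpy as np

def ftcs_error(n_intervals, alpha=1.0, r=0.25, t_end=0.1):
    """Max error of FTCS against the exact solution exp(-alpha*pi^2*t) * sin(pi*x)."""
    x = np.linspace(0.0, 1.0, n_intervals + 1)
    dx = x[1] - x[0]
    dt = r * dx**2 / alpha          # keep alpha * dt / dx**2 fixed while refining
    steps = int(round(t_end / dt))
    u = np.sin(np.pi * x)
    u[0] = u[-1] = 0.0
    for _ in range(steps):
        u[1:-1] = u[1:-1] + r * (u[2:] - 2.0 * u[1:-1] + u[:-2])
    exact = np.exp(-alpha * np.pi**2 * steps * dt) * np.sin(np.pi * x)
    return np.max(np.abs(u - exact))

errors = [ftcs_error(n) for n in (10, 20, 40, 80)]
for coarse, fine in zip(errors, errors[1:]):
    ratio = coarse / fine
    print(f"error {coarse:.3e} -> {fine:.3e}  ratio {ratio:.2f}  observed order {np.log2(ratio):.2f}")
```

For this scheme the error should drop by about a factor of 4 per halving (observed order near 2). If the ratio wanders or stalls, suspect a bug, a boundary condition, or a time step that is not refined consistently. Avoid r = 1/6 for this particular test: the leading error terms cancel there, and the study reports a misleadingly high order.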

Popular PDE Solver Libraries and Modern Tools

Once you move beyond toy scripts, tool choice matters almost as much as method choice.

A partial differential equation solver is not just an algorithm. It is a workflow. You need meshing, linear algebra, visualization, parameter handling, and often a fair amount of patience.

Good tools for different levels of ambition

For small educational or prototype problems, NumPy and SciPy are often enough. They let you build finite difference solvers directly and inspect every moving part.

For more serious finite element work, many developers look at FEniCS. It is powerful, expressive, and widely used for complex PDE problems. It also has a learning curve that can make you question your life choices for an afternoon.

For finite volume workflows, FiPy is a common Python option. In MATLAB-heavy environments, MATLAB PDE Toolbox remains a familiar route for teams that prefer integrated numerical tooling.
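For the NumPy/SciPy route, a common next step after an explicit loop is an implicit method. This minimal sketch uses backward Euler with `scipy.sparse.diags` and `scipy.sparse.linalg.spsolve` on the same rod, bump, and diffusivity as the heat-equation example in this article, but with Δt = 0.1, five times the explicit FTCS limit. Backward Euler stays stable at that step size; whether it is accurate enough is a separate question.

```python
import numpy as np
from scipy.sparse import diags
from scipy.sparse.linalg import spsolve

nx, alpha = 51, 0.01
x = np.linspace(0.0, 1.0, nx)
dx = x[1] - x[0]
dt = 0.1                 # 5x the explicit FTCS limit dx**2 / (2 * alpha) = 0.02
r = alpha * dt / dx**2   # = 2.5, far beyond the explicit limit of 0.5

u = np.exp(-100 * (x - 0.5) ** 2)
u[0] = u[-1] = 0.0       # ends held at zero

# Backward Euler on the interior points: (I - r * D2) u_new = u_old,
# where D2 is the tridiagonal [1, -2, 1] second-difference matrix.
m = nx - 2
A = diags([-r, 1 + 2 * r, -r], [-1, 0, 1], shape=(m, m), format="csc")

for _ in range(20):
    u[1:-1] = spsolve(A, u[1:-1])

print("max:", u.max(), "min:", u.min())
```

Each step solves a tridiagonal system instead of doing a simple update, which costs more per step but allows much larger steps. Large steps smear fast transients, so check accuracy by refining Δt before trusting the curve.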

The awkward gap many encounter

There is a significant jump between “I followed a 1D heat equation tutorial” and “I need to solve something with irregular geometry and realistic boundary conditions.”

One recurring challenge for non-experts is exactly that transition. Applying PDE solvers to irregular geometries comes up often in forum discussions, and while expert libraries such as FEniCS exist, accessible guides that bridge structured-grid toy examples and real-world setups remain thin.

That gap is why many smart developers feel stuck.

Not because they cannot understand PDEs, but because examples online often stop right before things become useful.

Classical tools versus modern AI-flavored methods

The solver environment is changing.

One important development is the rise of differentiable PDE solvers, which blend traditional discretization with neural network frameworks. For inverse problems, this can be a major shift. Differentiable PDE solvers can reduce parameter identification time by 70-90% and require 5-10x fewer forward PDE evaluations compared with classical approaches, according to this AIAA paper on differentiable PDE solvers.

That matters when your task is not just solving the PDE forward, but inferring unknown parameters from data.

Where differentiable solvers help most

- Parameter identification in thermal or materials models

- Inverse design where you optimize inputs based on desired behavior

- Learning-based workflows that need gradients through the solver
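The core idea behind a differentiable solver is that it returns gradients of its output with respect to its inputs. Frameworks such as JAX or PyTorch automate this; to stay dependency-free, the sketch below does it by hand, propagating the forward sensitivity s = ∂u/∂α alongside the explicit heat-equation update. It then recovers a diffusivity from a synthetic "measured" profile with Gauss-Newton steps. This is a toy parameter-identification example under those assumptions, not the method from the cited AIAA paper, and it says nothing about the speedups reported there.

```python
import numpy as np

nx, nt, dt = 51, 400, 0.0005
dx = 1.0 / (nx - 1)
x = np.linspace(0.0, 1.0, nx)
u0 = np.exp(-100 * (x - 0.5) ** 2)
u0[0] = u0[-1] = 0.0

def second_diff(v):
    """Discrete u_xx at interior points; boundary entries stay zero, so the ends stay fixed."""
    out = np.zeros_like(v)
    out[1:-1] = (v[2:] - 2.0 * v[1:-1] + v[:-2]) / dx**2
    return out

def solve_with_sensitivity(alpha):
    """Explicit heat solver that also returns s = du/dalpha (forward-mode differentiation)."""
    u = u0.copy()
    s = np.zeros_like(u)  # the initial condition does not depend on alpha
    for _ in range(nt):
        lap_u = second_diff(u)
        # Differentiate u_new = u + dt * alpha * lap(u) with respect to alpha.
        s = s + dt * (alpha * second_diff(s) + lap_u)
        u = u + dt * alpha * lap_u
    return u, s

# Synthetic "measurement" generated with a known alpha, which the fit does not see.
u_obs, _ = solve_with_sensitivity(0.01)

alpha = 0.015  # initial guess
for _ in range(10):
    u, s = solve_with_sensitivity(alpha)
    residual = u - u_obs
    # One-parameter Gauss-Newton step for minimizing 0.5 * ||u(alpha) - u_obs||^2
    alpha -= np.dot(residual, s) / np.dot(s, s)
print("estimated alpha:", alpha)
```

Because the gradient is exact for the discrete solver, the fit converges in a handful of iterations. Autodiff frameworks give you the same kind of gradient without deriving the sensitivity update yourself.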

A practical tool map

Pick tools based on the problem you need to solve next week, not the one you may solve in two years.

There is also a productivity side to all this. Documentation is dense. Examples are fragmented. Boilerplate is repetitive. That is why many developers now pair numerical libraries with AI coding workflows and research assistants, especially when comparing documentation, translating equations into code, or reviewing alternatives among AI coding tools.

Used well, those tools do not replace understanding.

They reduce friction so you can spend more time thinking about the model and less time hunting for the missing bracket in your weak form.

Solving a Real Problem: The Heat Equation in Python

The classic starter problem is the 1D heat equation.

It models how temperature changes along a rod over time. This example is simple enough to code in one sitting and rich enough to teach the habits you will use later.

The setup

Suppose you have a rod.

The ends are held at zero temperature. The middle starts hot. Over time, heat diffuses outward and the profile smooths.

For a beginner, this is the right kind of problem because:

- the geometry is simple

- finite differences fit naturally

- you can visualize the result immediately

That last point matters. A lot.

People often understand PDEs only after they watch one evolve. If you already use AI to speed up coding tasks, the workflow will feel familiar. You iterate, inspect, fix, and rerun.

A minimal explicit finite difference solver

Here is a compact Python example using NumPy and Matplotlib.

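```python
import numpy as np
import matplotlib.pyplot as plt

# Domain
L = 1.0                  # rod length
nx = 51                  # number of spatial points
dx = L / (nx - 1)
x = np.linspace(0, L, nx)

# Physical parameter
alpha = 0.01             # thermal diffusivity

# Time settings
dt = 0.0005
nt = 400

# The explicit scheme is stable only if alpha * dt / dx**2 <= 0.5 (here it is 0.0125)
r = alpha * dt / dx**2
assert r <= 0.5, f"time step too large for the explicit scheme (r = {r:.3f})"

# Initial condition: hot bump in the middle
u = np.exp(-100 * (x - 0.5)**2)

# Boundary conditions: rod ends held at zero
u[0] = 0.0
u[-1] = 0.0

# Store snapshots
snapshots = [u.copy()]
times = [0.0]
plot_every = 100         # save a snapshot every 100 steps

# Explicit finite difference update
for n in range(nt):
    u_new = u.copy()
    for i in range(1, nx - 1):
        u_new[i] = u[i] + r * (u[i+1] - 2*u[i] + u[i-1])
    u_new[0] = 0.0       # re-apply the boundary conditions
    u_new[-1] = 0.0
    u = u_new            # advance to the next time level
    if (n + 1) % plot_every == 0:
        snapshots.append(u.copy())
        times.append((n + 1) * dt)

# Plot
plt.figure(figsize=(8, 5))
for snap, t in zip(snapshots, times):
    plt.plot(x, snap, label=f"t = {t:.4f}")
plt.xlabel("Position")
plt.ylabel("Temperature")
plt.title("1D Heat Equation with Finite Differences")
plt.legend()
plt.grid(True)
plt.show()
```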

What the code is doing

The update rule uses the temperature at each interior point and its two neighbors.

If the center is hotter than its neighbors, heat spreads outward. Repeating that update over many time steps produces the evolving temperature field.
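Written as an equation, the loop applies the explicit (forward-time, centred-space) update below at every interior point, with the rod ends fixed at u₀ = u_{nx−1} = 0. For the parameters in the example, r = 0.01 × 0.0005 / 0.02² = 0.0125, well under the usual stability limit of 1/2.

$$
u_i^{n+1} = u_i^{n} + r\left(u_{i+1}^{n} - 2u_i^{n} + u_{i-1}^{n}\right),
\qquad r = \frac{\alpha\,\Delta t}{\Delta x^{2}},
\qquad i = 1, \dots, n_x - 2
$$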

The pieces to notice

- `dx` sets spatial resolution

- `dt` sets the time step

- `alpha` controls how quickly heat diffuses

- Boundary conditions keep the rod ends fixed

- Initial condition determines how the system starts

Later, when you tackle more realistic models, these same ingredients still matter.

What beginners usually get wrong

The most common issue is choosing a time step that is too large.

The code runs. Then the solution wiggles, blows up, or becomes negative in places where it makes no physical sense. That is usually not Python being dramatic. It is the numerical method telling you the update is unstable.

Another common issue is misapplying boundary conditions. Off-by-one indexing also deserves its own museum exhibit.
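One cheap guard against off-by-one mistakes is to keep a slow, obviously correct loop around and compare it with a fast NumPy slice version on random data. In this minimal sketch (the function names are illustrative), `u[2:]` plays the role of the right neighbour `u[i+1]` and `u[:-2]` the left neighbour `u[i-1]`; if the slices were shifted by one, the comparison would fail.

```python
import numpy as np

def step_loop(u, r):
    """One explicit heat step, written as a plain loop over interior points."""
    u_new = u.copy()
    for i in range(1, len(u) - 1):
        u_new[i] = u[i] + r * (u[i + 1] - 2 * u[i] + u[i - 1])
    return u_new

def step_sliced(u, r):
    """The same step with slices: u[2:] is u[i+1], u[1:-1] is u[i], u[:-2] is u[i-1]."""
    u_new = u.copy()
    u_new[1:-1] = u[1:-1] + r * (u[2:] - 2 * u[1:-1] + u[:-2])
    return u_new

rng = np.random.default_rng(0)
u = rng.random(51)
u[0] = u[-1] = 0.0
r = 0.01 * 0.0005 / 0.02**2  # alpha * dt / dx**2 with the example's parameters

loop_result, sliced_result = step_loop(u, r), step_sliced(u, r)
print(np.allclose(loop_result, sliced_result))  # True when the slices line up
print(sliced_result[0], sliced_result[-1])      # the fixed ends stay at 0.0
```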


Why this toy problem matters

This little script teaches several habits that scale:

- discretize the domain clearly

- define initial and boundary conditions explicitly

- inspect intermediate results

- validate that the behavior makes physical sense
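For this problem, "makes physical sense" can be turned into automatic checks. Starting from a non-negative profile, with both ends held at zero and a stable explicit step, the temperature should never go negative, no point should exceed the initial peak, and the total heat should never increase. This minimal sketch encodes those three expectations in a hypothetical `check_heat_solution` helper and runs it with the article's parameters, using a sliced update in place of the inner loop.

```python
import numpy as np

def check_heat_solution(history, tol=1e-12):
    """Hypothetical sanity checks for a 1D heat run whose ends are held at zero.

    history is a list of 1D arrays, one per stored time level. Returns a list of
    problems found (empty when every check passes).
    """
    problems = []
    initial_peak = np.max(history[0])
    for k, u in enumerate(history):
        if np.min(u) < -tol:
            problems.append(f"level {k}: negative temperature {np.min(u):.3g}")
        if np.max(u) > initial_peak + tol:
            problems.append(f"level {k}: maximum {np.max(u):.3g} exceeds the initial peak")
    totals = [np.sum(u) for u in history]
    if any(later > earlier + tol for earlier, later in zip(totals, totals[1:])):
        problems.append("total heat increased although both ends are held at zero")
    return problems

# The article's parameters, with the inner loop replaced by an equivalent sliced update.
nx, nt, dt, alpha = 51, 400, 0.0005, 0.01
dx = 1.0 / (nx - 1)
x = np.linspace(0.0, 1.0, nx)
u = np.exp(-100 * (x - 0.5) ** 2)
u[0] = u[-1] = 0.0
history = [u.copy()]
for _ in range(nt):
    u[1:-1] = u[1:-1] + alpha * dt / dx**2 * (u[2:] - 2 * u[1:-1] + u[:-2])
    history.append(u.copy())

print(check_heat_solution(history) or "all checks passed")
```

Checks like these will not prove a solver correct, but they catch sign errors, broken boundaries, and unstable steps early.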

The bigger challenge comes later, when the geometry is not a line and the physics are not so tidy. That transition is exactly where many newcomers struggle. Simple structured-grid examples are common, but support for moving into irregular real-world scenarios is much thinner, which is why this step-by-step style matters before jumping to expert libraries.

If you can write, run, and sanity-check a 1D heat solver, you are not a spectator anymore. You are doing numerical PDE work.

That is a meaningful shift.

Your PDE Journey and Next Steps with AI

A lot of PDE anxiety comes from trying to absorb everything at once.

You do not need everything at once. You need a working mental model and one problem small enough to finish.

The mental model to keep

A useful partial differential equation solver workflow usually comes down to this:

- Choose the method that matches your geometry and physics

- Discretize carefully so the computer can approximate the equation

- Check stability and convergence before trusting pretty plots

- Use the simplest test case first before scaling up complexity

If your domain is simple, start with finite differences. If geometry is the challenge, look seriously at finite elements. If conservation is central, finite volume deserves a long look.

Where the field is moving

One of the most interesting changes is that solvers are no longer only hand-built from known equations.

Modern data-driven methods can work in the opposite direction. Algorithms such as PDE-FIND can discover governing PDEs directly from measurement data, a major shift for reverse-engineering systems in fields from climate science to biology.

That changes the “so what?” for researchers.

You may not always begin with the equation. Sometimes you begin with the data and infer the model.
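Here is a toy version of the sparse-regression idea that PDE-FIND builds on: estimate derivatives from data, build a library of candidate terms, and keep only the few terms needed to explain u_t. Assumptions: the "measurements" are samples of an exact heat-equation solution with α = 0.05, the candidate library is just u, u_x, u_xx, and u·u_x, and plain sequential thresholded least squares stands in for the ridge-regression variant (STRidge) that PDE-FIND uses. Real data is noisy and needs far more care with derivatives.

```python
import numpy as np

# Synthetic "measurements": an exact solution of u_t = 0.05 * u_xx built from two
# decaying sine modes (two modes keep the u and u_xx columns independent).
alpha_true = 0.05
x = np.linspace(0.0, 1.0, 201)
t = np.linspace(0.0, 0.5, 201)
T, X = np.meshgrid(t, x, indexing="ij")  # arrays of shape (len(t), len(x))
U = (np.exp(-alpha_true * np.pi**2 * T) * np.sin(np.pi * X)
     + 0.5 * np.exp(-9 * alpha_true * np.pi**2 * T) * np.sin(3 * np.pi * X))
dx, dt = x[1] - x[0], t[1] - t[0]

# Estimate derivatives from the data with finite differences.
U_t = np.gradient(U, dt, axis=0, edge_order=2)
U_x = np.gradient(U, dx, axis=1, edge_order=2)
U_xx = np.gradient(U_x, dx, axis=1, edge_order=2)

# Candidate terms, sampled away from the edges where one-sided differences are used.
inner = (slice(2, -2), slice(2, -2))
names = ["u", "u_x", "u_xx", "u*u_x"]
library = np.column_stack([U[inner].ravel(), U_x[inner].ravel(),
                           U_xx[inner].ravel(), (U * U_x)[inner].ravel()])
target = U_t[inner].ravel()

def stlsq(theta, y, threshold=0.01, iterations=10):
    """Sequential thresholded least squares: fit, zero out small terms, refit the rest."""
    coef = np.linalg.lstsq(theta, y, rcond=None)[0]
    for _ in range(iterations):
        small = np.abs(coef) < threshold
        coef[small] = 0.0
        if small.all():
            break
        coef[~small] = np.linalg.lstsq(theta[:, ~small], y, rcond=None)[0]
    return coef

coef = dict(zip(names, stlsq(library, target)))
print(coef)
```

If everything works, only u_xx survives, with a coefficient close to 0.05, which reads as u_t = 0.05 u_xx. The fixed threshold of 0.01 is tied to the scale of these particular columns; practical implementations normalize the library first.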

A practical next step

Pick one problem and finish it.

Not five tabs of theory. One problem. A 1D heat equation, a diffusion process, or a simple Laplace problem on a grid. Solve it, visualize it, perturb it, and break it a few times on purpose.

That is how the ideas stick.

The good news is that modern AI tools make the journey less lonely. They can help summarize dense papers, compare libraries, explain confusing code, and speed up the repetitive parts of implementation. That does not remove the need for judgment. It gives you more room to apply it.

If you stay curious and keep the scope sane, PDEs stop feeling like a locked room. They start feeling like a toolkit.

If you want one workspace for research, code help, document analysis, and idea exploration while you learn numerical methods, take a look at Zemith. It is useful when you need to compare solver approaches, summarize technical papers, draft simulation code, and keep your notes in one place without bouncing across a dozen tabs.

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

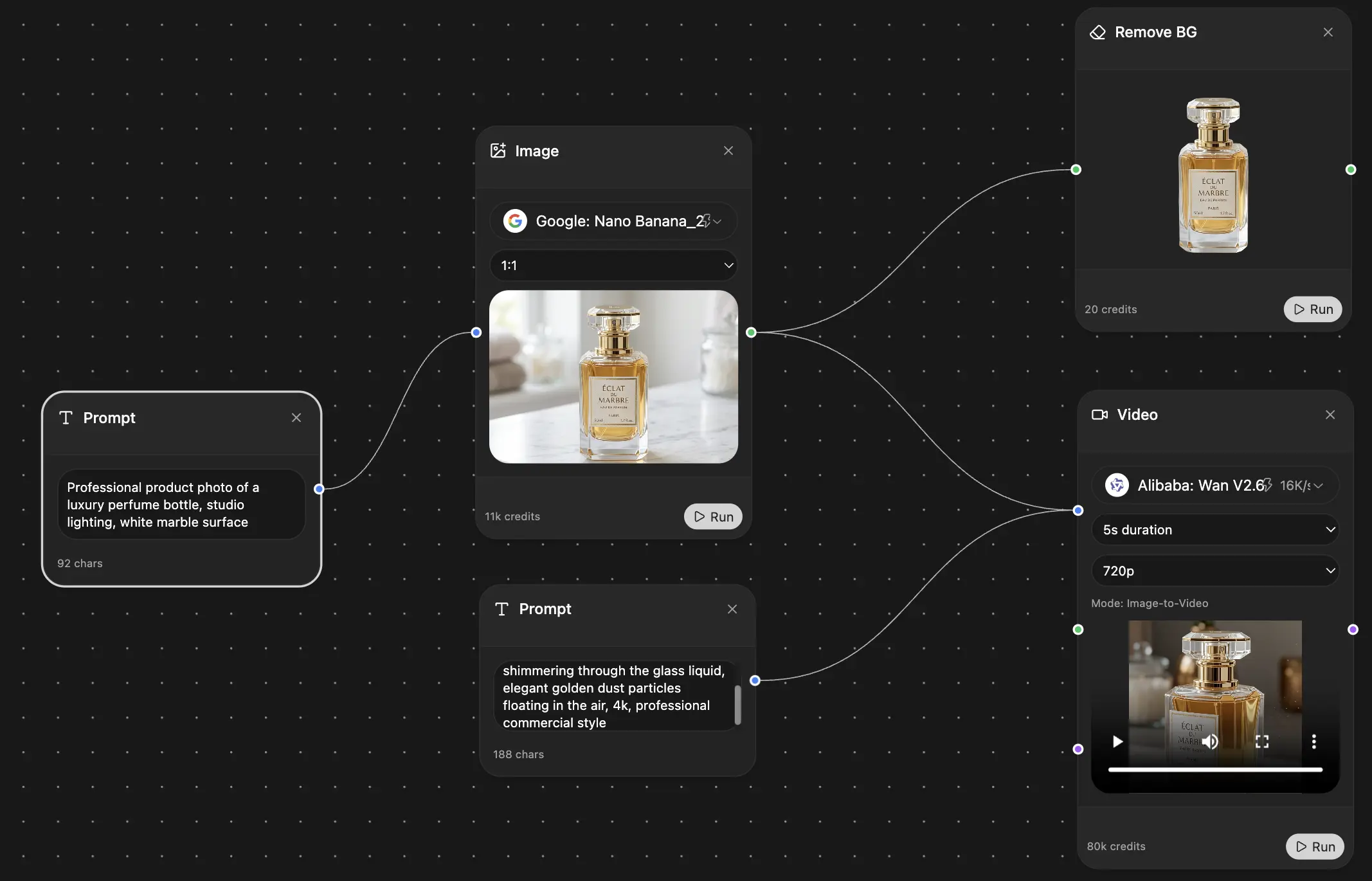

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers

What Our Users Say

Great Tool after 2 months usage

"I love the way multiple tools they integrated in one platform. Going in the right direction."

— simplyzubair

Best in Kind!

"The quality of data and sheer speed of responses is outstanding. I use this app every day."

— barefootmedicine

Simply awesome

"The credit system is fair, models are perfect, and the discord is very responsive. Quite awesome."

— MarianZ

Great for Document Analysis

"Just works. Simple to use and great for working with documents. Money well spent."

— yerch82

Great AI site with accessible LLMs

"The organization of features is better than all the other sites — even better than ChatGPT."

— sumore

Excellent Tool

"It lives up to the all-in-one claim. All the necessary functions with a well-designed, easy UI."

— AlphaLeaf

Well-rounded platform with solid LLMs

"The team clearly puts their heart and soul into this platform. Really solid extra functionality."

— SlothMachine

Best AI tool I've ever used

"Updates made almost daily, feedback is incredibly fast. Just look at the changelogs — consistency."

— reu0691