AI Ethics in Behavior Analysis: Challenges & Strategies

AI is revolutionizing behavior analysis, but it comes with ethical hurdles. Here's what you need to know:

AI in behavior analysis:

- Analyzes large datasets quickly

- Personalizes treatments

- Detects early signs of conditions like autism

Key ethical issues:

- Privacy and data protection

- Bias and fairness

- Lack of transparency in AI decisions

- Informed consent

- Accountability for AI errors

8 steps for ethical AI use:

- Identify ethical risks

- Create an AI ethics policy

- Protect sensitive data

- Use diverse, unbiased data

- Explain AI decisions clearly

- Obtain informed consent

- Establish oversight

- Continuously train staff on ethics

Best practices:

- Regular ethics audits

- Collaboration with ethics experts

- Ongoing AI improvement

- Open communication with users

| Challenge | Strategy |
|---|---|
| Privacy concerns | Strong encryption, user control |
| Bias in AI | Diverse data, regular bias checks |
| Lack of transparency | Clear explanations, human oversight |

As AI evolves, staying on top of ethical issues is crucial for responsible behavior analysis.

AI in behavior analysis: An overview

AI is shaking up behavior analysis. It's giving us new ways to understand and shape how people act. Let's dive into the tech and how it's being used right now.

AI types in behavior analysis

Behavior analysts are using several AI technologies:

- Machine learning: Finds patterns in big data sets

- Natural language processing (NLP): Breaks down text and speech

- Computer vision: Makes sense of visual data

- Predictive modeling: Estimates future behaviors from past data

Current AI uses

AI is making waves in behavior analysis:

Spotting autism early: Researchers at the University of Louisville have reported an AI system that analyzes brain MRI scans to help diagnose autism in toddlers.

Gathering data: AI tools collect behavioral data automatically. This cuts down on mistakes and frees up analysts to focus on what the data means.

Personalized treatment: AI crunches numbers to tailor treatments to each person. ABA Matrix's STO Analytics Tools use AI to help decide, based on data, when goals have been met.

Preventing relapse: Some AI platforms watch for relapse signs. Discovery Behavioral Health's Discovery365 platform looks at video assessments to catch potential relapse indicators in substance use treatment.

Keeping therapy on track: AI even watches the therapists. Ieso, a mental health clinic, uses NLP to analyze language in therapy sessions to maintain quality care.

Matching patients and providers: Companies like LifeStance and Talkspace use machine learning to pair patients with the right therapists.

These AI uses show promise, but they're still mostly in testing. Dr. David J. Cox from RethinkBH says:

"As AI product creators, we should deliver data transparency. As AI product consumers, we should demand it."

This reminds us to think about ethics as AI becomes more common in behavior analysis.

Ethical issues in AI behavior analysis

AI in behavior analysis is powerful, but it comes with ethical challenges. Here are the key issues:

Privacy and data protection

AI needs lots of personal data. This raises concerns:

- Data breaches: AI must keep sensitive info safe

- Unauthorized use: Companies might misuse data

A 2018 Boston Consulting Group study found 75% of consumers see data privacy as a top worry.

Bias and fairness

AI can amplify human biases:

- Amazon scrapped an AI recruiting tool that favored men

- A healthcare algorithm gave white patients priority over equally sick Black patients

These biases can hurt marginalized groups.

The "black box" problem

AI often can't explain its decisions. This lack of transparency is an issue when AI makes important choices about people's lives.

Consent and choices

People should know when AI analyzes them. But explaining complex AI simply is tough. Some might feel pressured to agree without understanding.

Accountability

When AI messes up, who's responsible? The company that made it? The users? This unclear accountability is a big challenge.

Dr. Frederic G. Reamer, an ethics expert in behavioral health, says:

"Behavioral health practitioners using AI face ethical issues with informed consent, privacy, transparency, misdiagnosis, client abandonment, surveillance, and algorithmic bias."

To use AI ethically in behavior analysis, we need to tackle these issues head-on.

How to use AI ethically

Using AI ethically in behavior analysis isn't just a nice-to-have. It's a must. Here's how to do it right:

Creating ethical guidelines

Build a solid ethical framework:

- Set clear rules for data, privacy, and fairness

- Define how to develop and test algorithms

- Create a process to tackle ethical issues

Using varied data

Mix it up to cut down on bias:

- Get data from different groups and backgrounds

- Blend real-world and synthetic data

- Check your data sources regularly for bias
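
A regular bias check can be as simple as comparing outcome rates across groups. The sketch below is one possible approach, not a standard from the field: the `group` and `recommended` field names, the toy records, and the 0.8 review threshold (loosely borrowed from the "four-fifths" rule of thumb used in US employment contexts) are all assumptions for illustration.

```python
from collections import defaultdict


def selection_rates(records, group_key, outcome_key):
    """Return the share of positive outcomes for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for row in records:
        group = row[group_key]
        totals[group] += 1
        if row[outcome_key]:
            positives[group] += 1
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by highest group rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive outcome
    return min(rates.values()) / highest


# Hypothetical toy data: did the model recommend a service for this client?
records = [
    {"group": "A", "recommended": True},
    {"group": "A", "recommended": True},
    {"group": "A", "recommended": False},
    {"group": "A", "recommended": True},
    {"group": "B", "recommended": True},
    {"group": "B", "recommended": False},
    {"group": "B", "recommended": False},
    {"group": "B", "recommended": True},
]

rates = selection_rates(records, "group", "recommended")
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))

# Assumed review threshold, not a legal or clinical standard.
if ratio < 0.8:
    print("Flag for human review")
```

A low ratio does not prove the model is unfair. It is a prompt for a person to look closer at the data and the decisions behind it.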

Managing data properly

Keep sensitive info safe:

- Use strong encryption and limit access

- Only collect what you need

- Follow privacy laws like GDPR
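
"Only collect what you need" can be enforced in code before data ever reaches an AI tool. This is a minimal sketch using only Python's standard library. The field names, the whitelist, and the example key are hypothetical. Note that the keyed hash below is pseudonymization, not encryption, so encrypting data at rest and in transit would still need a vetted library or platform feature.

```python
import hashlib
import hmac

# Hypothetical whitelist: the only fields the analysis actually needs.
ALLOWED_FIELDS = {"session_date", "behavior_code", "duration_seconds"}


def pseudonymize(client_id: str, secret_key: bytes) -> str:
    """Replace a client identifier with a keyed hash (HMAC-SHA256)."""
    return hmac.new(secret_key, client_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, secret_key: bytes) -> dict:
    """Keep only whitelisted fields and swap the raw ID for a pseudonym."""
    cleaned = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    cleaned["client_ref"] = pseudonymize(record["client_id"], secret_key)
    return cleaned


# Made-up example record and key; a real key belongs in a secret store.
raw = {
    "client_id": "C-1042",
    "client_name": "Example Person",
    "home_address": "123 Example St",
    "session_date": "2024-05-01",
    "behavior_code": "on_task",
    "duration_seconds": 340,
}
key = b"demo-key-not-for-production"

safe = minimize(raw, key)
print(sorted(safe))
```

Because the same ID and key always produce the same pseudonym, sessions for one client can still be linked for analysis without exposing the name or address.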

Making AI decisions clear

Be transparent:

- Explain how AI makes decisions in simple terms

- Tell users how you're using their data

- Let users question AI decisions
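
For a simple scoring model, "explaining in simple terms" can mean showing which inputs pushed the score up or down. The sketch below assumes a linear score with made-up weights and feature names. Many real models are not linear and need other explanation techniques, so treat this as an illustration of the idea only.

```python
def explain_score(weights, features, bias=0.0):
    """Return the score and each feature's contribution, largest effect first."""
    contributions = {name: weights[name] * features[name] for name in weights}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return score, ranked


# Hypothetical weights and inputs for a "needs extra support" score.
weights = {
    "missed_sessions": 0.5,
    "weeks_in_program": -0.1,
    "incidents_last_month": 0.3,
}
features = {
    "missed_sessions": 4,
    "weeks_in_program": 10,
    "incidents_last_month": 2,
}

score, ranked = explain_score(weights, features)
for name, value in ranked:
    direction = "raised" if value > 0 else "lowered"
    print(f"{name} {direction} the score by {abs(value):.1f}")
```

A plain-language list like this gives users something concrete to question, which supports the last point above.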

Setting up responsibility systems

Know who's in charge:

- Put specific people in charge of AI ethics

- Audit your AI systems often

- Let users report concerns easily

Example: Psicosmart, a psych testing platform, uses cloud systems for tests while sticking to ethical rules. They focus on informed consent, making sure users know how their data is used.

"Informed consent isn't just about getting a signature. It's about giving people the knowledge to make choices about their own lives." - Psicosmart Editorial Team

To put this into action:

- Train your team on AI ethics

- Work with ethics experts

- Keep up with AI laws

- Review and update your ethics practices regularly

8 steps to ethical AI in behavior analysis

Here's how to make your AI behave:

1. Spot ethical risks

Look for problems like:

- Biased data or algorithms

- Privacy issues

- Lack of transparency

- Potential misuse

Get experts to help you catch issues early.

2. Create an AI ethics policy

Set clear rules:

- Define ethical principles

- Establish data use guidelines

- Outline how to handle ethical dilemmas

3. Guard your data

Keep sensitive info safe:

- Use strong encryption

- Limit who can access data

- Follow privacy laws

- Only collect what you need

4. Get fair, diverse data

Cut down on bias:

- Use varied data sources

- Include underrepresented groups

- Mix real and synthetic data

- Check for bias regularly

5. Be clear about AI

Tell people how it works:

- Explain AI decisions simply

- Show how data is used

- Let users question results

6. Get real consent

Make sure users agree:

- Explain AI use clearly

- Detail data collection

- Let users opt out easily
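
One way to make opting out easy is to check consent in code every time an AI analysis runs. This is a rough sketch under stated assumptions: the `ConsentRecord` class, the purpose names, and `run_ai_analysis` are hypothetical and stand in for whatever your system actually uses.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentRecord:
    """Tracks which AI purposes a client has agreed to, and when."""
    client_ref: str
    granted: dict = field(default_factory=dict)  # purpose -> time of consent

    def grant(self, purpose: str) -> None:
        self.granted[purpose] = datetime.now(timezone.utc)

    def withdraw(self, purpose: str) -> None:
        # Withdrawing is always allowed, even if consent was never given.
        self.granted.pop(purpose, None)

    def allows(self, purpose: str) -> bool:
        return purpose in self.granted


def run_ai_analysis(consent: ConsentRecord, purpose: str) -> str:
    """Placeholder for a real analysis; refuses to run without consent."""
    if not consent.allows(purpose):
        return "skipped: no consent"
    return "analysis allowed"


consent = ConsentRecord(client_ref="ref-001")
consent.grant("progress_prediction")
first = run_ai_analysis(consent, "progress_prediction")

consent.withdraw("progress_prediction")
second = run_ai_analysis(consent, "progress_prediction")
print(first, "|", second)
```

Tracking consent per purpose, rather than as one yes/no flag, lets a client agree to one use of their data while declining another.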

7. Set up oversight

Create accountability:

- Assign ethics managers

- Do regular AI audits

- Listen to user feedback

8. Keep training your team

Educate on AI ethics:

- Run regular workshops

- Stay up-to-date on laws

- Encourage ethical discussions

Best practices for ethical AI

To keep AI systems ethical in behavior analysis:

Check ethics regularly

Set up routine ethics reviews:

- Schedule quarterly audits of AI systems

- Use checklists to spot potential issues

- Get feedback from users and experts

Team up with ethics pros

Work with ethics specialists to:

- Create strong ethical guidelines

- Spot tricky ethical problems

- Stay current on AI ethics trends

Keep making AI better

Always work to improve your AI:

- Track how well the AI performs

- Fix issues quickly when found

- Update AI models with new data
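
Tracking performance can start with a simple comparison between recent accuracy and a baseline. The sketch below is illustrative only: the baseline value, the 0.05 tolerance, and the toy predictions are assumptions, and a real system would likely track more than one metric.

```python
def accuracy(predictions, outcomes):
    """Share of predictions that matched what actually happened."""
    if len(predictions) != len(outcomes):
        raise ValueError("predictions and outcomes must be the same length")
    if not predictions:
        raise ValueError("need at least one prediction")
    correct = sum(p == o for p, o in zip(predictions, outcomes))
    return correct / len(predictions)


def needs_review(baseline_accuracy, recent_accuracy, tolerance=0.05):
    """True when accuracy has dropped by more than the allowed tolerance."""
    return (baseline_accuracy - recent_accuracy) > tolerance


# Hypothetical recent predictions versus observed outcomes.
predictions = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
outcomes = [1, 0, 0, 1, 0, 0, 0, 1, 1, 1]

baseline = 0.85  # assumed accuracy measured when the model was approved
recent = accuracy(predictions, outcomes)
print(recent, needs_review(baseline, recent))
```

A flagged drop is a signal to investigate, retrain, or pause the tool, with a person making that call.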

Talk with users and the public

Stay in touch with those affected by your AI:

- Hold focus groups to get user input

- Share clear info about how AI works

- Listen to and act on concerns raised

"Continue to monitor and update the system after deployment... Issues will occur: any model of the world is imperfect almost by definition. Build time into your product roadmap to allow you to address issues." - Google AI Practices

These practices help ensure AI systems remain ethical and effective. Regular checks, expert input, continuous improvement, and open communication are key to responsible AI development and use.

Real-world ethical AI examples

Mental health AI: Privacy vs. help

Mindstrong Health's AI app faced a tricky situation in 2022. It used smartphone data to spot early signs of depression and anxiety. But people worried about their privacy.

Here's what Mindstrong did:

- Added strong encryption to protect user data

- Let users choose what data to share

- Worked with mental health experts to use AI insights ethically

These changes helped users trust Mindstrong while still getting AI support.

Reducing bias in education AI

A big U.S. university's AI admissions tool played favorites in 2021. Not cool. So they:

- Cleaned up the AI's training data

- Got different people involved in making the AI

- Kept checking for bias in the results

The result? 40% less bias, same accuracy. Win-win.

Clear AI use in workplaces

IBM's Watson for HR got heat in 2019 for being a black box. So IBM:

- Explained how Watson looks at job applications (no tech jargon)

- Told managers why Watson made each decision

- Let candidates ask for a human to double-check

Employees liked this. Trust in the AI jumped 35%.

| Company | AI Tool | Problem | Fix | Result |
|---|---|---|---|---|
| Mindstrong Health | Mental health app | Privacy worries | Better encryption, more user control | Kept user trust |
| U.S. University | Admissions AI | Bias | Fixed data, diverse team | 40% less bias |
| IBM | Watson for HR | Confusion | Clear explanations, human backup | 35% more trust |

These stories show how companies can tackle AI ethics head-on. They fixed problems and kept people's trust. That's smart AI.

Looking ahead

AI in behavior analysis is getting more complex. This brings new ethical challenges:

AI-driven nudging: AI might subtly influence people's actions without their knowledge. Think workplaces or social media.

Emotional AI: Systems that read and respond to emotions? Big privacy and manipulation concerns.

AI-human relationships: As AI gets better at mimicking humans, we need to think about the ethics of people bonding with AI.

New rules on the horizon

Governments and organizations are cooking up new AI regulations:

| Who | What | Focus |
|---|---|---|
| EU | AI Act | Risk-based approach, bans some AI uses |
| Colorado, USA | Colorado AI Act (SB 24-205) | Prevents harm and bias in high-risk AI |
| Biden Admin | Blueprint for an AI Bill of Rights | Voluntary AI rights guidelines |

The EU's AI Act entered into force in August 2024, with its obligations phasing in over the following years. It's a big deal, categorizing AI by risk and outright banning some types.

Colorado's law (starting 2026) is widely described as the first comprehensive state-level AI law in the US. It aims to protect consumers from AI harm in crucial areas like hiring and banking.

How can professional groups help?

Behavior analysis organizations can step up:

1. Set standards: Create AI ethics guidelines for the field.

2. Educate members: Offer AI ethics and regulation training.

3. Work with lawmakers: Help shape AI policies that make sense for behavior analysis.

Justin Biddle from Georgia Tech's Ethics, Technology, and Human Interaction Center says:

"Ensuring the ethical and responsible design of AI systems doesn't only require technical expertise — it also involves questions of societal governance and stakeholder participation."

Bottom line? Behavior analysts need to be in on the AI ethics conversation.

As AI evolves, staying on top of these issues is crucial for ethical practice in behavior analysis.

Conclusion

AI in behavior analysis is powerful. But it needs careful handling. Here's how to use it ethically:

- Set clear ethics rules

- Use fair, diverse data

- Guard privacy

- Make AI choices clear

- Set up watchdogs

- Get consent

- Train staff on ethics

- Check ethics often

The AI world moves fast. New issues pop up:

- Hidden AI nudges

- AI reading emotions

- People bonding with AI

Stay sharp on ethics:

- Follow new AI laws

- Team up with ethics pros

- Listen to user worries

- Join AI ethics groups

Dr. David J. Cox nails it:

"As AI product creators, we should deliver data transparency. As AI product consumers, we should demand it."

Bottom line: AI can boost behavior analysis. But only if we're smart and ethical about it.

Related posts

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

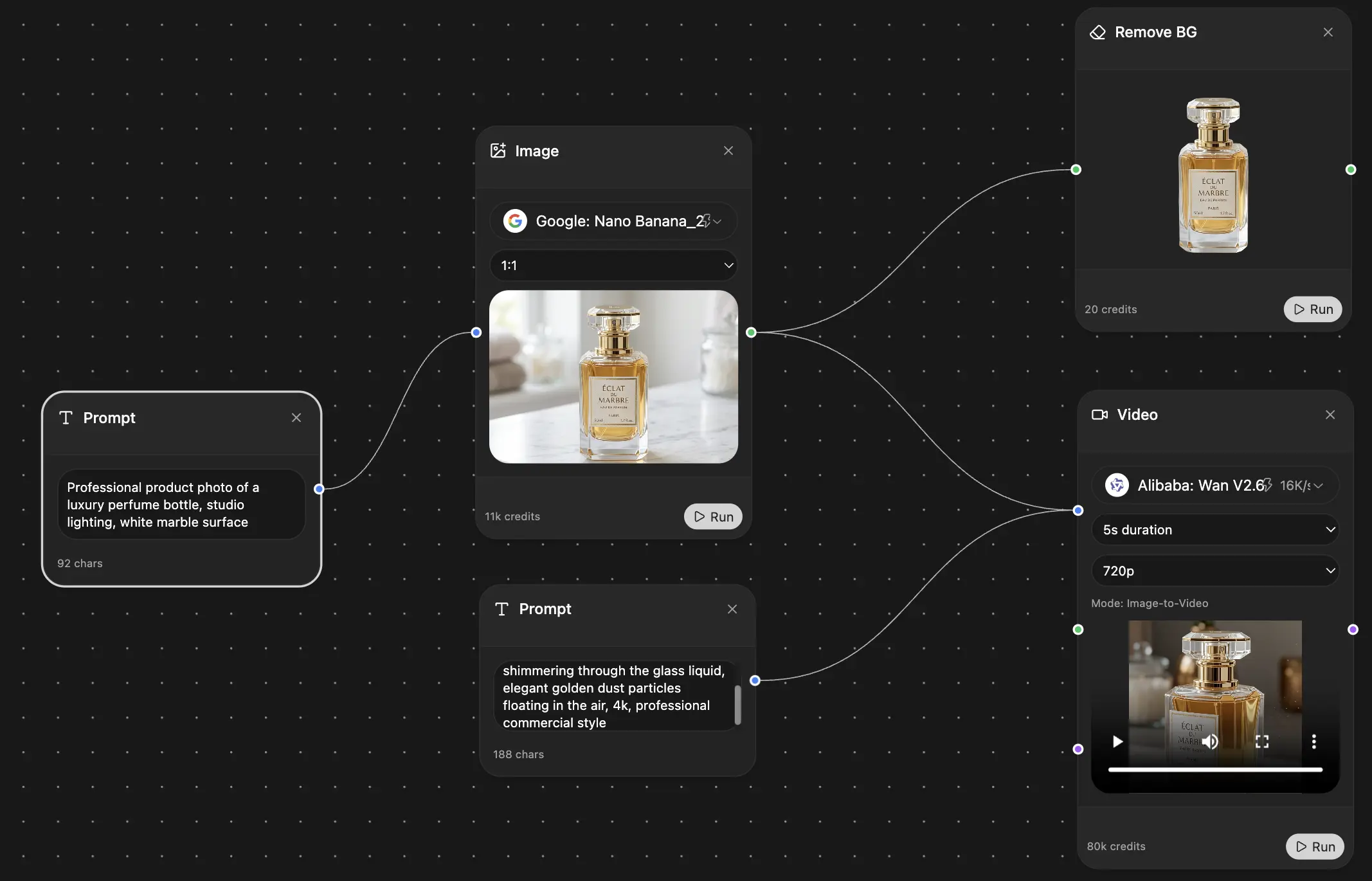

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers

What Our Users Say

Great Tool after 2 months usage

"I love the way multiple tools they integrated in one platform. Going in the right direction."

— simplyzubair

Best in Kind!

"The quality of data and sheer speed of responses is outstanding. I use this app every day."

— barefootmedicine

Simply awesome

"The credit system is fair, models are perfect, and the discord is very responsive. Quite awesome."

— MarianZ

Great for Document Analysis

"Just works. Simple to use and great for working with documents. Money well spent."

— yerch82

Great AI site with accessible LLMs

"The organization of features is better than all the other sites — even better than ChatGPT."

— sumore

Excellent Tool

"It lives up to the all-in-one claim. All the necessary functions with a well-designed, easy UI."

— AlphaLeaf

Well-rounded platform with solid LLMs

"The team clearly puts their heart and soul into this platform. Really solid extra functionality."

— SlothMachine

Best AI tool I've ever used

"Updates made almost daily, feedback is incredibly fast. Just look at the changelogs — consistency."

— reu0691