Mastering The GPT-4o Context Window: Your Practical (and Slightly Sarcastic) Guide

Tired of your AI forgetting things? Learn to master the GPT-4o context window with real-world tips and avoid common pitfalls. Maximize your AI's memory today.

The headline number for GPT-4o is a 128,000-token context window. Think of this as the AI's short-term memory for any single conversation you have with it. This massive capacity lets it process and hold onto an amount of information roughly equal to a 300-page book, giving it a much deeper grasp of long documents, complex code, or sprawling chat histories. Basically, it can remember more about your project than you can after a long weekend.

What The GPT-4o Context Window Actually Means

"Context window" might sound like some overly technical term cooked up by engineers, but it’s actually a simple idea: it's the AI's working memory. It's the total amount of information—everything you feed it and everything it says back—that the model can "see" at any given moment.

A small context window is like talking to a goldfish. You can't have a very deep conversation. But with a huge 128,000-token context window, like GPT-4o has, it’s like talking to someone who remembers the entire book you’ve been discussing from cover to cover. This is a massive leap from older models that would often forget the beginning of a conversation by the time you got to the middle. Annoying, right?

So, What the Heck is a Token?

Before we go any further, let's quickly clear up what tokens are. An AI doesn't see words the way we do. Instead, it breaks text down into common pieces of words, called tokens, to process and understand language. It's how the machine "reads."

As a general rule of thumb, 100 tokens is about 75 words. This means the 128k context window can hold approximately 96,000 words.
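If you like to see the math, here's a minimal Python sketch of that rule of thumb. It just counts whitespace-separated words and divides by 0.75, so treat the result as a ballpark figure. For exact counts you'd use a real tokenizer, such as OpenAI's open-source tiktoken library (GPT-4o uses its o200k_base encoding).

```python
# Rough token estimator based on the "100 tokens ≈ 75 words" rule of thumb.
# This is an approximation only; real counts depend on the model's tokenizer.

CONTEXT_WINDOW = 128_000  # GPT-4o context window, in tokens
WORDS_PER_TOKEN = 0.75    # rule of thumb: 100 tokens ≈ 75 words


def estimate_tokens(text: str) -> int:
    """Estimate a token count from the number of whitespace-separated words."""
    return round(len(text.split()) / WORDS_PER_TOKEN)


def words_that_fit(context_tokens: int = CONTEXT_WINDOW) -> int:
    """Approximate how many words fit into a given token budget."""
    return int(context_tokens * WORDS_PER_TOKEN)


print(words_that_fit())                  # 96000
print(estimate_tokens("one two three"))  # 4
```

Code, non-English text, and unusual formatting usually tokenize less efficiently than plain English prose, so leave yourself some headroom.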

This is a complete game-changer for anyone working with large volumes of information. It elevates AI from a simple question-and-answer bot into a real collaborator that can understand deep, complex context.

If you want to get a better handle on how AIs make sense of human language, our dedicated guide on the topic breaks down the core concepts without making your brain hurt.

What 128,000 Tokens Looks Like In Real Life

A quick comparison to help you visualize the massive scale of the GPT-4o context window. And no, we didn't measure this in bananas.

This ability to "remember" so much information at once opens the door to tasks that just weren't feasible before.

- Analyzing large documents: You can upload a lengthy legal contract, a dense scientific paper, or a detailed financial report and ask specific questions. The AI can pull answers from anywhere in the document.

- Debugging complex code: A developer can drop in a large slice of a codebase (a small project may fit entirely), and the AI can spot bugs or suggest optimizations while understanding how all the different files and functions relate to each other.

- Summarizing long conversations: Feed it hours of meeting transcripts and get a summary that’s not just a collection of keywords, but a coherent overview of the discussion.

This expanded memory is what makes tools like Zemith's Document Assistant so darn effective. When you combine a massive context window with a smart interface, you can have a natural, back-and-forth conversation with your documents. Ask follow-up questions and get answers that pull from the entire text, not just a small piece of it. It’s about creating a genuine dialogue with your data, not just shouting commands at it.

The Hidden Downsides Of A Giant AI Memory (Yes, There Are Downsides)

So, GPT-4o's 128,000 token context window sounds like a dream come true, right? The fantasy is to dump a whole novel into the prompt and ask about some obscure detail in the middle. But as anyone who has actually tried this knows, there’s a catch. A big memory comes with its own unique and frustrating problems.

Think of it less like a perfect computer database and more like human memory—it has its limits and strange quirks. One of the biggest issues is a phenomenon researchers have dubbed "Lost in the Middle."

It’s pretty much what it sounds like. When you feed an AI a massive amount of text, it tends to pay close attention to the beginning and the end, but the details buried deep in the middle get hazy. This explains why an AI might miss a critical bug in a huge file of code or gloss over a key statistic on page 50 of a 100-page report. It's not dumb; it's just overwhelmed. Sound familiar?

The U-Shaped Performance Curve of Doom

This isn't just a fluke; it's a predictable pattern. When put to the test, large language models show a very clear U-shaped performance curve for information retrieval. This means that anything you put at the very start or very end of your prompt is front and center. Everything else? It might as well be in the nosebleed seats.

This diagram gives you a sense of just how much you can technically stuff into GPT-4o's context, but it doesn't guarantee the AI will pay equal attention to all of it.

While the AI can hold that entire volume of information in its short-term memory, its ability to pinpoint a specific fact from the middle can drop off a cliff.

In one stress test of a 128k context window, the results were shocking. When filled with just 64,000 tokens—only half its maximum capacity—the model could only find 1 out of every 10 facts embedded in the text. The study found a consistent blind spot for information located anywhere between the 7% and 50% mark of the document. Yikes.
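Want to check this blind spot yourself? The usual approach is a "needle in a haystack" test: plant one known fact at different depths of a long filler document and ask the model to retrieve it. The sketch below only builds the prompts. The filler sentence, the needle, and the depths are made-up placeholders, not data from the study above, and sending the prompts to a model is left to whatever API client you use.

```python
# Build "needle in a haystack" prompts: one known fact (the needle) is planted
# at a chosen depth inside repetitive filler text. Sending each prompt to a
# model and checking the answer shows where retrieval starts to fail.
# FILLER, NEEDLE, and DEPTHS are placeholders, not data from any study.

FILLER = "The quick brown fox jumps over the lazy dog."
NEEDLE = "The secret launch code is PELICAN-42."


def build_haystack(num_filler: int, depth: float, needle: str = NEEDLE) -> str:
    """Insert `needle` after roughly `depth` (0.0 to 1.0) of the filler."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    sentences = [FILLER] * num_filler
    sentences.insert(round(depth * num_filler), needle)
    return " ".join(sentences)


def build_prompt(haystack: str) -> str:
    return (
        "Answer using only the document below.\n\n"
        f"{haystack}\n\n"
        "What is the secret launch code?"
    )


# Probe the start, the reported 7%-50% blind spot, and the end of the text.
DEPTHS = (0.0, 0.07, 0.25, 0.5, 1.0)
prompts = {d: build_prompt(build_haystack(1_000, d)) for d in DEPTHS}
# Next step (not shown): send each prompt to the model and check whether the
# reply contains "PELICAN-42".
```

A thousand filler sentences is only around 12,000 tokens by the rule of thumb above; raise `num_filler` to push toward the context sizes you actually care about.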

The Real-World Costs You Can't Ignore

Beyond the "lost in the middle" problem, there are two other very practical trade-offs that come with trying to max out the context window:

- Latency (aka The Waiting Game): Sending 100,000+ tokens to an AI isn't instant. The model has to crunch through all that data before it can even begin to generate a response. If you need an answer fast, giving it a massive document is like asking someone to sprint right after they’ve run a marathon. It’s going to be slow.

- Cost (aka Your Wallet): Models charge you for the tokens you send (input) and the tokens you get back (output). Constantly using the full context window is the most expensive way to work with an AI. It's the equivalent of renting a massive moving truck just to transport a single armchair—sure, it gets the job done, but it's a huge waste of money.
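To put numbers on the moving-truck problem, here's a small cost calculator. The default per-million-token prices are assumptions in the ballpark of GPT-4o's published API rates; prices change, so plug in whatever your provider currently charges.

```python
# Estimate the cost of one request from its token counts.
# Prices are per million tokens; the defaults are assumptions, not a quote.


def request_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_m: float = 2.50,
    output_price_per_m: float = 10.00,
) -> float:
    """Return the cost in dollars of a single request."""
    return (
        input_tokens * input_price_per_m + output_tokens * output_price_per_m
    ) / 1_000_000


full = request_cost(120_000, 1_000)  # nearly the whole context window
lean = request_cost(8_000, 1_000)    # only the relevant excerpts
print(f"full: ${full:.3f}  lean: ${lean:.3f}")
# The lean request costs roughly a tenth as much, on every single call.
```

Because input tokens dominate a near-full prompt, trimming what you send usually saves far more than trimming what you ask the model to write.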

The big takeaway? More context isn't always better. A larger context window is a powerful tool, but using it blindly without understanding its limits leads to slow, expensive, and surprisingly inaccurate results.

This is why just having access to a huge context window isn't the whole story. You need smart strategies to manage it. It's a bit like studying: simply reading a book won't help much if you don't have a technique for retaining what you read. For AI, this means using tools like Zemith that intelligently manage what information gets sent to the model, ensuring it sees the right details at the right time.

How to Tame the 128k Context Window Beast

Alright, so you've seen the pitfalls. You now know that just dumping a 300-page document into the GPT-4o context window can be a recipe for disaster, leading to everything from painfully slow responses to that frustrating "Lost in the Middle" problem. So, how do you actually get work done without wanting to throw your laptop out the window?

This is your field guide. Let's walk through a few battle-tested strategies to turn that massive context window from an unpredictable monster into your most reliable workhorse. These aren't just theories; they're practical moves you can start using right away.

Master the Instruction Sandwich

Remember that U-shaped curve we talked about, where the AI pays most attention to the beginning and end of a prompt? You can totally use this to your advantage with a simple but surprisingly powerful technique: the "instruction sandwich."

It's exactly what it sounds like. You place your main instruction at the top of the prompt, then you repeat it right at the very end.

- Top Bun (Beginning): Start with your primary command. "You are an expert legal analyst. Your job is to analyze the following contract draft and identify every clause related to intellectual property rights."

- The Filling (Middle): This is where you paste your long document, lines of code, or transcript. It’s the bulk of your context.

- Bottom Bun (End): Repeat the core instruction to bring it home. "Now, after reviewing the entire contract I provided, list all clauses that pertain to intellectual property rights."

This method works like a constant reminder, keeping the AI locked onto the goal and dramatically cutting down the chances of it getting sidetracked. It’s the prompt engineering equivalent of putting sticky notes all over the house.
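If you build prompts in code, the sandwich is easy to automate. Here's a minimal Python sketch; the function name and the delimiter lines around the document are illustrative choices, not a required format.

```python
from typing import Optional

# Assemble an "instruction sandwich": the task appears before AND after the
# long document, the two positions the model tends to attend to most.
# The delimiter lines are an arbitrary choice, not a required format.


def sandwich_prompt(instruction: str, document: str, reminder: Optional[str] = None) -> str:
    """Wrap `document` between an opening instruction and a closing reminder.

    If no reminder is given, the opening instruction is simply repeated.
    """
    closing = reminder if reminder is not None else instruction
    return "\n\n".join([
        instruction,               # top bun
        "--- DOCUMENT START ---",
        document,                  # the filling
        "--- DOCUMENT END ---",
        closing,                   # bottom bun
    ])


contract_text = "..."  # placeholder: paste the full contract here

prompt = sandwich_prompt(
    "You are an expert legal analyst. Identify every clause in the contract "
    "below that relates to intellectual property rights.",
    contract_text,
    "Now, after reviewing the entire contract above, list all clauses that "
    "pertain to intellectual property rights.",
)
```

Clear delimiters also make it obvious to the model where your instructions stop and the pasted material begins.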

Don't Ditch Document Chunking

Even with a massive 128k context window, breaking down huge documents is still a brilliant move. Why? Because it gives you precision and control. Instead of asking the AI to find a needle in a giant haystack, you’re handing it a much smaller, more manageable handful of hay.

This is especially true when you're wrestling with extremely long or dense material. If you're working with a 500-page technical manual, trying to find one specific troubleshooting step can be a tall order, even for GPT-4o.

A much smarter approach is to break the manual into logical chapters or sections. This is where a tool like Zemith's Document Assistant becomes a lifesaver. You can upload multiple documents (or chunks of one) into a Project, letting the AI access the information without you stuffing it all into one gigantic prompt. The result is almost always faster and more accurate answers. And if you work with PDFs a lot, our dedicated PDF guide can make this process even easier.
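If you're chunking documents yourself rather than through a tool, here's a minimal sketch of one common approach. It splits on blank lines (paragraphs), packs paragraphs into chunks under an approximate token budget, and repeats the last paragraph at the start of the next chunk so no thought gets cut in half. The budget, the overlap, and the word-based token estimate are all assumptions to tune; this is not how Zemith or any particular product chunks documents.

```python
# Split a long document into chunks under a rough token budget, breaking on
# paragraph boundaries and carrying a little overlap into the next chunk.
# Tokens are estimated with the "100 tokens ≈ 75 words" heuristic.


def approx_tokens(text: str) -> int:
    return round(len(text.split()) / 0.75)


def chunk_document(text: str, max_tokens: int = 2_000,
                   overlap_paragraphs: int = 1) -> list[str]:
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current: list[str] = []
    for para in paragraphs:
        candidate = current + [para]
        if current and approx_tokens("\n\n".join(candidate)) > max_tokens:
            chunks.append("\n\n".join(current))
            # Seed the next chunk with the tail of this one for continuity.
            # (A single oversized paragraph still becomes its own chunk.)
            if overlap_paragraphs:
                current = current[-overlap_paragraphs:] + [para]
            else:
                current = [para]
        else:
            current = candidate
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Splitting on natural boundaries like paragraphs or headings tends to give the model more coherent pieces than cutting at a fixed character count.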

Create a "Cheat Sheet" Summary

Here's another pro-level trick: give the AI a "cheat sheet" right at the start of your prompt. Before you paste in that massive wall of text, write a short, bulleted summary of the key contents.

Example: Imagine you're about to paste a 10-hour meeting transcript. You could start your prompt with a summary like this:

"Below is the transcript of a 10-hour project kickoff meeting. Key topics included:

- Hours 1-2: Project goals and introductions. (The boring part)

- Hours 3-5: Deep dive into the technical architecture. (The geeky part)

- Hours 6-8: Brainstorming the marketing and GTM strategy. (The fun part)

- Hours 9-10: Finalizing next steps and assigning action items. (The 'uh oh, now we have to do work' part)"

This upfront summary acts like a table of contents for the AI. It primes the model with the document's overall structure and main themes, making it far easier for it to navigate the content and find exactly what you're looking for. To make this even more effective, you can use tools that produce structured notes and then feed those notes into the context window for even sharper analysis.
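Here's the same cheat-sheet idea as a small Python helper. The function name and prompt wording are illustrative; the section labels and summaries are the ones you write yourself, and the question goes last, which also gives you a built-in bottom bun.

```python
# Build a prompt that opens with a hand-written "cheat sheet" of the
# document's structure, followed by the document itself and then the question.
# The function name and prompt wording are illustrative choices.


def cheat_sheet_prompt(description: str, sections: list[tuple[str, str]],
                       document: str, question: str) -> str:
    outline = [f"{description} Key topics included:"]
    outline += [f"- {label}: {summary}" for label, summary in sections]
    return "\n".join(outline) + f"\n\n{document}\n\n{question}"


transcript = "..."  # placeholder: the full meeting transcript goes here

prompt = cheat_sheet_prompt(
    "Below is the transcript of a 10-hour project kickoff meeting.",
    [
        ("Hours 1-2", "Project goals and introductions."),
        ("Hours 3-5", "Deep dive into the technical architecture."),
        ("Hours 6-8", "Brainstorming the marketing and GTM strategy."),
        ("Hours 9-10", "Finalizing next steps and assigning action items."),
    ],
    transcript,
    "List every action item assigned in hours 9-10, with its owner.",
)
```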

All this theory about context windows is great, but how do you actually make GPT-4o's massive memory work for you without it becoming a huge headache? It’s one thing to have the raw power; it's another to use it effectively in your day-to-day work.

This is where you stop juggling a dozen different AI tools and browser tabs. We're going to connect the dots and show you how to apply these strategies in a single, focused workspace like Zemith. It’s all about turning that abstract power into something you can actually use to get stuff done.

Go Beyond the Chatbox with an Integrated Workflow

Let's be honest—just having access to GPT-4o isn't a magic bullet. The real breakthroughs happen when you weave its power directly into your tasks. Imagine uploading a 300-page annual report and just… talking to it. You could ask complex questions that require it to pull information from page 12, page 87, and page 250, all at once.

That’s exactly what Zemith's Document Assistant was built for. You can finally stop the tedious copy-paste dance and interact with your information in a way that feels natural.

Here’s what that looks like. You can see how all your documents are neatly organized in one spot, ready for you to dive in and start asking questions. No more "where did I save that file again?" moments.

When you keep all your source material in one place inside Zemith, you’re essentially creating the perfect playground for GPT-4o. It can now connect ideas across your entire knowledge base, shifting the conversation from generic Q&A to a genuinely informed discussion with your own data.

Building a Long-Term AI Memory

A standard chat with GPT-4o is like having a conversation with someone who has short-term memory loss. The GPT-4o context window holds onto information for that single session, but once it’s over, everything is forgotten. It's a huge pain for ongoing projects. Who wants to re-explain the project goals every single time they start a new chat? No one.

This is where features like Zemith’s Library and Projects are a game-changer. They act as a persistent, long-term memory for the AI.

- The Library: Think of this as your personal knowledge hub. You can toss all your important documents, research papers, and notes in here.

- Projects: You can group related documents and chats into a single project space. The AI gets access to this shared knowledge, maintaining context across every single interaction you have within that project.

By organizing your work this way, you give the AI a "memory" that can span weeks or even months. It remembers the project's nuances, the key people involved, and the decisions you made last Tuesday, making every new chat smarter than the last.

This approach neatly sidesteps the limitations of a single chat session. It's like giving your AI assistant a dedicated filing cabinet for each of your projects, ensuring it always has the right files ready to go.
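Under the hood, the general pattern behind this kind of persistent memory is simple: save what matters, then feed it back in at the start of each new chat. The sketch below is a hypothetical, stripped-down version of that pattern, not Zemith's implementation. The `ProjectMemory` class, the JSON file, and the note format are all made up for illustration; the message list just mirrors the role/content shape chat APIs commonly accept.

```python
import json
from pathlib import Path

# Hypothetical sketch of a persistent "project memory": notes are saved to a
# JSON file and injected at the start of every new chat, so each session
# begins with the same background. A generic pattern, not Zemith's internals.


class ProjectMemory:
    def __init__(self, path: Path):
        self.path = path
        # Reload any notes saved by earlier sessions.
        self.notes: list[str] = json.loads(path.read_text()) if path.exists() else []

    def remember(self, note: str) -> None:
        """Add a note and write the full list back to disk."""
        self.notes.append(note)
        self.path.write_text(json.dumps(self.notes, indent=2))

    def start_chat(self, user_message: str) -> list[dict]:
        """Build an opening message list with saved notes as a system message."""
        messages = []
        if self.notes:
            background = "\n".join(f"- {n}" for n in self.notes)
            messages.append({"role": "system", "content": "Project background:\n" + background})
        messages.append({"role": "user", "content": user_message})
        return messages
```

Real systems add retrieval on top, so only the notes relevant to the current question get injected instead of the whole list.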

Working with GPT-4o’s Input and Output Limits

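Zemith gives you access to multiple models so you can pick the right tool for the job, but it helps to know their quirks. GPT-4o has that massive 128,000-token context window, four times the 32k maximum of the original GPT-4. But here's the catch: its output is capped far lower. The original GPT-4o release could generate only about 4,096 tokens per response, and later snapshots raised that to 16,384, which is still a small fraction of what it can read.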

Understanding this input-to-output gap is crucial for designing prompts that actually work. You can't just dump a novel in and ask for a detailed summary that's half as long. You have to be strategic. For a deeper dive, it's worth looking at how other engineers are tackling these real-world limits.
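A quick budget check makes the gap concrete. This sketch assumes the context window is shared by input and output tokens and uses a 4,096-token output cap by default; both numbers are assumptions you should adjust for the model and snapshot you're actually calling.

```python
import math

# Check a request against GPT-4o-style limits and estimate how many responses
# a long output would take. Both limits are assumptions to adjust per model:
# the context window is treated as shared by input and output tokens, and
# the output cap depends on the snapshot (4,096 at launch, 16,384 later).

CONTEXT_WINDOW = 128_000
MAX_OUTPUT = 4_096


def fits(input_tokens: int, output_tokens: int,
         context_window: int = CONTEXT_WINDOW,
         max_output: int = MAX_OUTPUT) -> bool:
    """True if the requested output respects the cap and everything fits the window."""
    return output_tokens <= max_output and input_tokens + output_tokens <= context_window


def passes_needed(target_output_tokens: int, max_output: int = MAX_OUTPUT) -> int:
    """Minimum number of separate responses needed to produce the target length."""
    return math.ceil(target_output_tokens / max_output)


# A "half as long" summary of a 100,000-token novel is about 50,000 tokens.
print(passes_needed(50_000))          # 13 responses at a 4,096-token cap
print(passes_needed(50_000, 16_384))  # 4 responses at a 16,384-token cap
```

That's why long summaries are usually built in stages: summarize each chunk, then summarize the summaries.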

By combining Zemith’s organizational tools with the raw power of the GPT-4o context window, you're not just using an AI; you're building an intelligent system that's fine-tuned to your specific work. And if you're inspired to create something even more specialized, our related guide on building your own custom solutions is worth a look.

The Future Is A Million Tokens And Beyond (Hold Onto Your Hats)

Just when you’ve finally wrapped your head around the 128k beast that is GPT-4o, the AI world is already moving on. The future of context is expanding at a dizzying speed, and it's set to change everything… again. So, let’s look at what’s coming next, because that big GPT-4o context window is really just a warm-up act.

It sounds like a sci-fi movie, but AI labs are already shipping models with absolutely enormous context windows. We're talking one million tokens. No, that's not a typo. OpenAI's GPT-4.1 models, for example, support up to 1 million tokens of context, roughly an 8x leap from GPT-4o. It's well worth digging into what this means for developers.

What A Million-Token World Looks Like

So, what does it actually feel like when an AI can hold that much information in its head at once? It’s the difference between asking someone to read a chapter versus asking them to read an entire library. Tasks that seemed impossible a year ago are suddenly on the verge of becoming reality.

Complete Codebase Analysis: Imagine feeding your company's entire codebase—every single file—into one massive prompt. The AI could then perform a full system audit, hunt down security vulnerabilities across all microservices, or even suggest major architectural changes because it understands every single dependency.

Enterprise-Wide Customer Insights: Think about analyzing every support ticket, chat log, and feedback email your company has ever received, all in one shot. The model could spot systemic issues, trace bugs back to their origin, and tell you what your customers really want, based on years of their own words.

Deep Scientific Discovery: A researcher could give an AI a whole field of study—hundreds of papers, clinical trial results, and historical data—and ask it to find the hidden connections and hypotheses that no human team could ever spot.

This isn't just a bigger version of what we have now; it's a fundamental shift in what we can ask an AI to do. It moves the AI from a task-doer to a true system-level thinker.

Staying Ahead Without The Headache

The pace is relentless. One day, 128k tokens feels like the pinnacle; the next, a million is already on the horizon. Trying to keep up with this constant evolution can feel like a full-time job in itself. Who has the time to rebuild their entire workflow every time a new model is released?

This is exactly where a smart platform like Zemith becomes so valuable. It’s built from the ground up to be model-agnostic. Instead of tying you to one specific AI, Zemith’s multi-model access means that when these next-gen models with massive context windows are ready for prime time, they can be plugged right into the platform.

This approach lets you get the best tools as they emerge without having to overhaul your process. As AI models continue to grow, understanding how AI-driven search surfaces content will also be key to making sure your content gets seen. Ultimately, the goal is to have a platform that handles all the backend complexity for you, so you can just focus on getting your work done.

Your Action Plan For Winning With Large Context

So, we've unpacked the good, the bad, and the expensive when it comes to GPT-4o's massive context window. Now it’s time to get practical. This isn't just a summary—it's your game plan for making that huge memory work for you, not against you.

Your Go-To Checklist

Think of this as your pre-flight check before you give the AI a heavy-duty task. A few seconds spent on these steps can save you a ton of frustration, time, and money down the line.

- Sandwich Your Instructions: This is a classic for a reason. Put your most important command at the very start of your prompt, and then repeat it right at the end. It's the best way to keep the AI from getting lost in the middle of a long document.

- Create a "Cheat Sheet" Summary: Don't just dump a 50-page report on the model. Give it a helping hand by writing a quick, bulleted summary of the key sections or arguments at the top. This acts like a table of contents, helping the AI navigate the information far more effectively.

- Chunk When in Doubt: Yes, the 128,000 token window is huge, but it's not foolproof. Breaking down enormous documents into smaller, more focused pieces is still a fantastic strategy for improving accuracy and getting faster responses.

The goal is to turn that massive context window from an intimidating feature into your greatest productivity asset. Don't just throw data at it; guide it. A little strategy goes a long way.

One Final Power Tip For Your Workflow

Here’s probably the most important shift you can make: stop treating your AI chats like one-off conversations. To really get the most out of large context, you need to build a persistent, organized space where the AI can find information across all your tasks.

This is exactly why we built features like the Zemith Library and Projects. Instead of uploading the same files over and over or reminding the AI about project details, you just create a central hub. When you group your research, reports, and notes into a Project, GPT-4o gains a long-term memory that makes every interaction smarter.

If you're trying to centralize knowledge for your whole team, our guide on the subject is a great place to start.

Answering Your GPT-4o Context Window Questions

Once you start working with large context windows, a few practical questions always seem to pop up. Let's clear up some of the most common ones I hear.

Does Using The Full 128k Context Window Cost More?

You bet it does. API providers, including OpenAI, charge based on the number of tokens you process—both what you send in your prompt (input) and what the model generates (output).

Think of it like paying for shipping. Sending a massive 128k token prompt is like shipping a huge, heavy box. It costs more, plain and simple, even if a lot of what's inside isn't strictly necessary. It’s almost always smarter and more budget-friendly to be selective about what you send the model.

A great way to manage this is to use a tool that lets you easily switch between different models. I use Zemith for this, picking something cheaper for quick summaries and saving the powerhouse GPT-4o for the really complex jobs that need its massive context.

Can I Just Use The Full Context Window For Everything?

You could, but it would be a huge mistake. For most everyday prompts, it's complete overkill. If you're just asking a simple question or drafting a quick email, stuffing the prompt with pages of background material will slow down your response time and drive up costs for no real benefit.

Save that massive 128k context window for the heavy lifting, where having all that background information is an absolute must:

- Analyzing a dense annual financial report from top to bottom.

- Summarizing a book or a transcript from a multi-hour meeting.

- Debugging a large codebase with lots of interconnected files.

For pretty much everything else, a smaller, more focused context is your friend.

With A 128k Window, Is RAG Still Necessary?

Yes, 100%. In fact, it's arguably more critical than ever. RAG (Retrieval-Augmented Generation) is the technique of intelligently finding and feeding the AI only the most relevant snippets of information for a specific task.

Remember the "Lost in the Middle" problem? An AI can easily lose track of key details in a mountain of text. RAG acts like a super-smart research assistant. Instead of handing the AI a 500-page manual and saying "good luck," RAG finds the exact page and paragraph needed to answer the question. This results in faster, cheaper, and far more accurate responses than just hoping the model finds what it needs. Platforms like Zemith build this retrieval logic right into their features, making sure the AI gets the perfect context every time.
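To make the idea concrete, here's a toy version of the retrieval step. Real RAG systems usually rank chunks with vector embeddings; this sketch swaps in simple word overlap so it runs with no dependencies. The function names, the tiny stopword list, and the sample manual text are all illustrative, and none of it reflects how Zemith implements retrieval.

```python
import re
from collections import Counter

# Toy retrieval step for RAG: score each chunk by how often it uses the
# question's content words, then keep only the best-scoring chunks.
# Production systems usually rank with vector embeddings; plain word overlap
# keeps this sketch dependency-free. The stopword list is deliberately tiny.

STOPWORDS = {"a", "an", "and", "do", "how", "i", "in", "is", "of", "the", "to", "what"}


def content_words(text: str) -> list[str]:
    return [w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS]


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return up to k chunks that share the most content words with the question."""
    query = set(content_words(question))

    def score(chunk: str) -> int:
        counts = Counter(content_words(chunk))
        return sum(counts[w] for w in query)

    ranked = sorted(chunks, key=score, reverse=True)
    return [c for c in ranked[:k] if score(c) > 0]


def rag_prompt(question: str, chunks: list[str], k: int = 2) -> str:
    excerpts = "\n\n".join(retrieve(question, chunks, k))
    return f"Answer using only these excerpts.\n\n{excerpts}\n\nQuestion: {question}"


manual = [
    "Chapter 1: Unboxing the router and checking the cables.",
    "Chapter 7: If the status light blinks red, reset the router by holding "
    "the reset button for 10 seconds.",
    "Chapter 9: Warranty terms and contact details.",
]
print(retrieve("How do I reset the router when the light blinks red?", manual, k=1))
```

Instead of the whole 500-page manual, the model only sees the excerpt that matters, which keeps the prompt short, cheap, and far away from the "middle" where details get lost.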

Ready to stop wrestling with context windows and actually get work done? Zemith brings the power of GPT-4o and other top models into a workflow designed for real-world use, with smart document chat and project-based memory. See how it works for yourself.

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

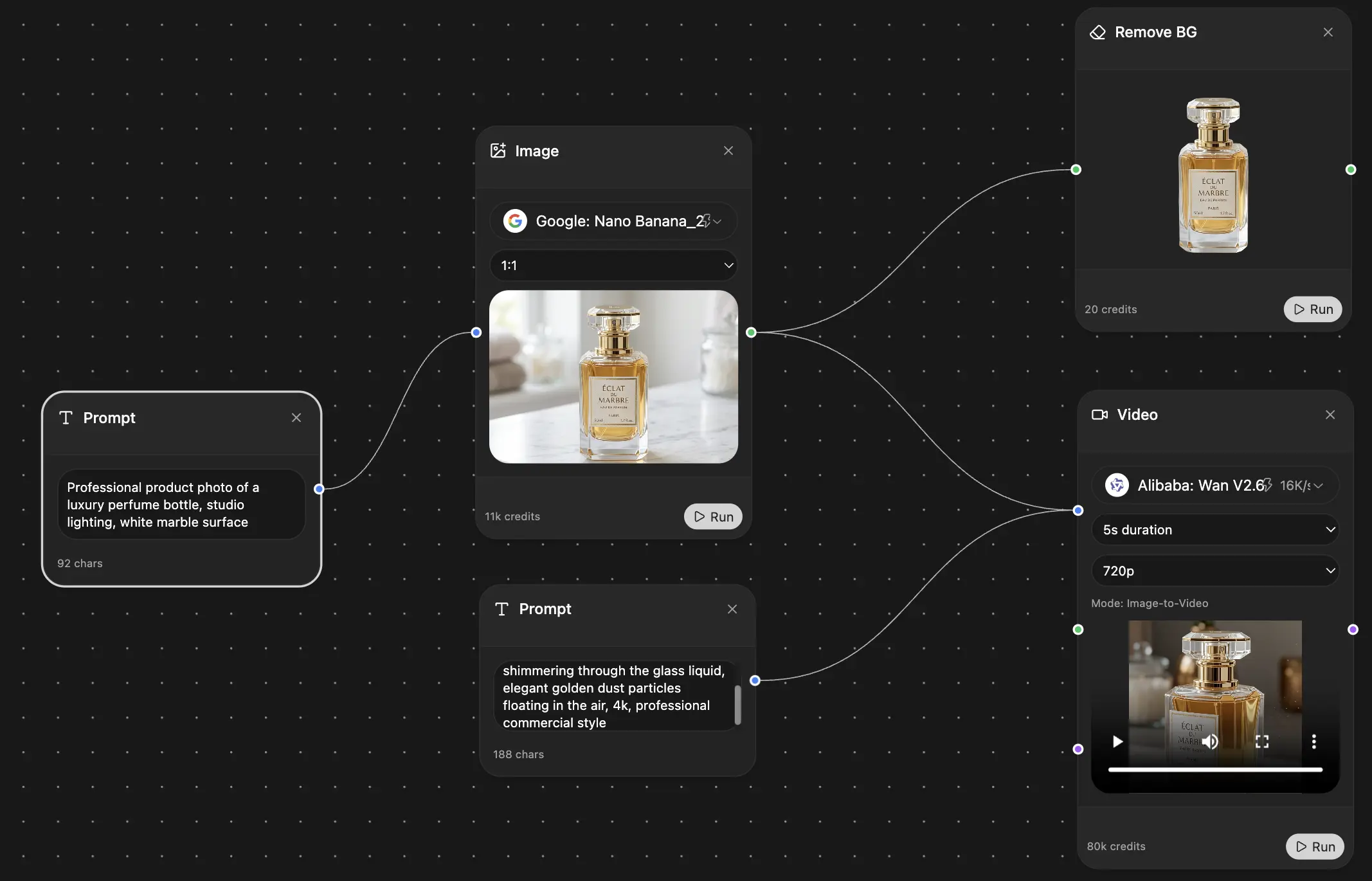

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers

What Our Users Say

Great Tool after 2 months usage

"I love the way multiple tools they integrated in one platform. Going in the right direction."

— simplyzubair

Best in Kind!

"The quality of data and sheer speed of responses is outstanding. I use this app every day."

— barefootmedicine

Simply awesome

"The credit system is fair, models are perfect, and the discord is very responsive. Quite awesome."

— MarianZ

Great for Document Analysis

"Just works. Simple to use and great for working with documents. Money well spent."

— yerch82

Great AI site with accessible LLMs

"The organization of features is better than all the other sites — even better than ChatGPT."

— sumore

Excellent Tool

"It lives up to the all-in-one claim. All the necessary functions with a well-designed, easy UI."

— AlphaLeaf

Well-rounded platform with solid LLMs

"The team clearly puts their heart and soul into this platform. Really solid extra functionality."

— SlothMachine

Best AI tool I've ever used

"Updates made almost daily, feedback is incredibly fast. Just look at the changelogs — consistency."

— reu0691