How to Do Market Research Online (Even With No Budget)

Learn how to do market research online with our step-by-step guide. Discover AI-powered methods with Zemith, practical tips, and tools for any budget in 2026.

You’ve probably seen this happen.

A founder gets excited about a product, builds the landing page, writes the tagline, maybe even orders inventory, then launches to an audience that responds with the digital equivalent of polite silence. No clicks. No signups. No “where has this been all my life?” comments. Just crickets and a growing urge to blame the algorithm.

Most of the time, the problem is not effort. It’s guessing.

Learning how to do market research online fixes that. Not in a stuffy, enterprise-only, twenty-slide-deck way. In a practical way. You can test demand, study competitors, spot customer language, and pressure-test your offer before you sink months into the wrong idea.

That matters even more now because online research is no longer reserved for big brands with giant budgets and a department full of analysts. The tools are lighter, faster, and much easier to use than they used to be.

Stop Guessing and Start Knowing Your Market

A bakery owner once asked why her new product line wasn’t selling. She had beautiful packaging, premium ingredients, and strong confidence that local buyers wanted “healthy convenience snacks.” Her confidence was sincere. Her market, unfortunately, had other ideas.

When she finally looked at online comments, local search behavior, and direct customer feedback, the issue became obvious. People were not looking for “healthy convenience snacks.” They were looking for quick breakfast options, high-protein office snacks, and lunchbox-friendly items for kids. Same category. Different intent. Different language. Different buying trigger.

That gap is where businesses waste money.

The old excuse was cost. That excuse is weaker now. Email surveys can cost between $3,000 and $5,000, while offline surveys can exceed $100,000, according to Andava. For a startup, that difference changes what is possible.

The bigger mindset shift is this. Research is not a luxury line item you add later. It is protection against building the wrong thing too well.

What online research gives you fast

When done well, online market research helps you answer questions like these:

- Who wants this? Not who you hope wants it.

- What problem are they trying to solve? In their words, not your brand words.

- What are they comparing you against? Direct competitors, substitutes, or doing nothing.

- What makes them hesitate? Price, trust, timing, confusion, or lack of urgency.

If you are still figuring out who your audience is, start there before you obsess over tactics. Bad audience assumptions ruin good research.

The practical shift

Small teams do not need a giant report. They need enough signal to make the next smart move.

That usually means a lean cycle:

- Form a hypothesis

- Collect signals online

- Check if the signals agree

- Adjust your offer, messaging, or audience

- Run the next round

Research works best when it feels like part of execution, not homework.

If you want a good mental model for that style of work, the core idea is simple: decisions improve when you force them to compete with evidence.

Chart Your Course with Clear Research Objectives

Most bad research starts with a mushy goal.

“Understand the market” sounds responsible, but it is too vague to guide anything. You need a sharper question. Otherwise you collect interesting trivia, not useful evidence.

A useful objective is tied to a specific decision you need to make.

That gets you from “do market research” to something actionable like this:

- Validate demand: Are people already trying to solve this problem?

- Refine positioning: Which message makes the offer feel relevant?

- Find a better segment: Is there a smaller audience with stronger urgency?

- Understand churn: Why did people leave after trying the product?

- Test expansion: Will the same offer travel to a new market or niche?

Write research questions that can survive contact with reality

Try writing questions that a real dataset could answer.

Weak question:

- Do people like our idea?

Better questions:

- What problem do buyers think this product solves?

- What alternatives are they using today?

- What would stop them from switching?

- Which words do they use when describing the problem?

- Which subgroup reacts differently from the rest?

If your question invites compliments, it is probably too soft. Markets are not there to preserve your feelings. Rude, but useful.

Start with the audience, not the feature

A lot of founders begin with “I built X.” Better research starts with “for whom, in what situation, and why now?”

Build a quick persona using five fields:

- Role or identity: Founder, freelancer, parent, operations lead

- Pain point: What keeps interrupting progress

- Current workaround: Spreadsheet, agency, manual process, competitor tool

- Buying trigger: What makes them start searching

- Selection criteria: Price, speed, trust, ease, integrations, proof

Then add one more thing most templates skip. Context.

The same person can behave like two different buyers depending on context. A founder buying for a side project behaves differently than that same founder buying for a funded team.

Go narrower than feels comfortable

Broad segments sound bigger. Hyper-niche segments are usually easier to serve and easier to understand.

Identifying underserved hyper-niche segments is a key growth move, and AI-enhanced tools can analyze social forum data and behavioral patterns to uncover those gaps, as noted by Luth Research. Many guides stay too broad and miss that opportunity.

A few examples of useful narrowing:

- Not “small businesses,” but service businesses with few repeatable sales systems

- Not “fitness customers,” but busy parents trying to fit workouts between school runs and work calls

- Not “B2B teams,” but operations managers cleaning up messy handoffs between sales and onboarding

If everyone is a possible customer, your research will return polite nonsense.

A solid methodology helps here, because it turns fuzzy questions into an actual research plan.

Once your objective is clear, your method gets easier to choose. You stop collecting random screenshots and start gathering evidence with a purpose.


Choose Your Online Research Weapons Wisely

Not every research method answers the same question.

That’s where people get tangled. They run a survey when they should have studied search behavior. Or they stalk competitors for hours when the core issue is message clarity. Different tool, different job.

A simple way to think about how to do market research online is to use four methods together. Each one gives you a different angle on the same market.

Surveys for direct answers

Surveys are useful when you need to ask a focused question and compare responses across a group.

Use them for:

- feature prioritization

- purchase objections

- willingness to switch

- satisfaction patterns

- message testing

Surveys are weak when the audience is too broad or the questions are vague. They are also weak when you ask people to predict behavior they have never faced. “Would you buy this?” is notorious for producing fantasy football answers. People become wildly optimistic when no wallet is involved.

Good survey questions sound like this:

- What were you trying to solve when you started looking?

- What nearly stopped you from choosing this option?

- Which alternative did you consider most seriously?

- What mattered most in your decision: speed, price, or confidence?

Keyword and trend research for market intent

Search behavior tells you what people are actively trying to solve. That makes it one of the fastest ways to spot demand, confusion, urgency, and language patterns.

You are not just looking for high-volume keywords. You are looking for:

- recurring problem phrases

- comparison searches

- “alternative to” patterns

- beginner questions

- geographic or niche-specific language


What works here is clustering queries by intent:

- Problem-aware searches

- Solution-aware searches

- Comparison searches

- Ready-to-buy searches

That gives you a clearer picture than one big spreadsheet full of disconnected terms.
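If you export a keyword list from your research tool, a first-pass sort into those four buckets can be scripted. Here is a minimal Python sketch, assuming plain-text queries and a hand-written list of modifier words per bucket. The `INTENT_RULES` lists, the example queries, and the function names are hypothetical; real modifiers should come from your own niche.

```python
import re

# Hypothetical modifier lists, checked in order: the first bucket that matches wins.
# Comparison and ready-to-buy go first because those queries often also contain
# generic words like "software" or "best".
INTENT_RULES = [
    ("comparison", ("vs", "versus", "alternative", "alternatives", "compare")),
    ("ready-to-buy", ("pricing", "price", "buy", "discount", "free trial")),
    ("solution-aware", ("best", "tool", "software", "app", "service")),
    ("problem-aware", ("how to", "why", "fix", "problem", "stop")),
]


def classify_query(query: str) -> str:
    """Return the first intent bucket whose modifier appears as a whole word or phrase."""
    # Lowercase, turn punctuation into spaces, collapse whitespace, and pad with
    # spaces so that " vs " style matching only hits whole words.
    text = " " + re.sub(r"[^\w\s]", " ", query.lower()) + " "
    text = re.sub(r"\s+", " ", text)
    for intent, modifiers in INTENT_RULES:
        if any(f" {m} " in text for m in modifiers):
            return intent
    return "unclassified"


def cluster_queries(queries: list[str]) -> dict[str, list[str]]:
    """Group queries by intent bucket, keeping their original order."""
    clusters: dict[str, list[str]] = {}
    for query in queries:
        clusters.setdefault(classify_query(query), []).append(query)
    return clusters


if __name__ == "__main__":
    sample = [
        "how to stop chasing late invoices",
        "best invoicing software for freelancers",
        "freshbooks vs quickbooks",
        "invoicing software pricing",
    ]
    for intent, group in cluster_queries(sample).items():
        print(intent, group)
```

Treat the output as a draft. Queries that land in `unclassified` are worth reading by hand, because keyword rules only catch the phrasing you already expected.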

Social listening for emotional truth

Search tells you what people seek. Social listening tells you how they feel about it.

That can include:

- complaints about existing tools

- recurring frustrations

- language people use when they describe bad experiences

- hidden objections buyers may never write in a survey

- shifts in attention around trends, creators, or communities

Over 65% of internet users discover new brands via social media, and 99% of shoppers research online before a purchase. If buyers discover and evaluate options online, then social listening and search analysis stop being “nice to have.”

They become part of basic market awareness.

Competitor analysis for strategic contrast

Competitor research is not copying your rival’s homepage and changing a few adjectives. It is studying how the market is currently being framed.

Look at:

- who they target

- what they promise

- what they avoid saying

- how they package features

- what their customers praise

- what their customers resent

The most useful question is not “What are they doing?”

It’s “What are they leaving open?”

That open space could be:

- a neglected customer segment

- a price point mismatch

- weak onboarding

- a missing use case

- stale messaging that no longer matches how buyers talk

Pick the method by the decision

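Here is a common shortcut:

- Validating demand: start with keyword and trend research

- Testing messages or prioritizing features: run a focused survey

- Finding objections and frustrations: use social listening

- Spotting positioning gaps: study competitors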

One practical option for handling several of these tasks in one workflow is Zemith, which includes deep research, document analysis, a notepad, and organized project spaces in the same workspace, so you are not forced into a patchwork stack of separate tools.

The method should match the decision. Otherwise you collect data that feels busy but changes nothing.

Gather High-Quality Data Without Losing Your Mind

Collecting data online sounds easy until you’re knee-deep in junk responses, vague comments, duplicate notes, and a spreadsheet that looks like it lost a fight.

The fix is not “collect more.” The fix is to collect cleaner.

Write questions normal people can answer

A surprising number of surveys fail because they sound like they were written by a committee trapped in a conference room.

Bad question:

- How satisfied are you with the overall value proposition of our integrated solution?

Better question:

- What made this feel worth paying for, or not worth it?

Use plain language. Ask one thing at a time. Skip jargon. Skip leading phrasing that nudges people toward your favorite answer.

Here are some reliable question templates you can steal:

- Problem discovery: What were you trying to fix when you started looking for a solution?

- Current behavior: How are you handling this today?

- Decision trigger: What happened that made you start searching now?

- Objection mining: What gave you pause before choosing?

- Alternative mapping: What other options did you seriously consider?

- Value test: What would need to be true for this to feel like an easy yes?

Stop surveying the people most likely to flatter you

This is the trap that wrecks otherwise decent research.

Poor sampling is one of the most critical mistakes in market research. Relying on convenience audiences like existing customers or social media followers introduces systematic bias. In plain English, if you only ask people who like you, you get a suspiciously cheerful dataset.

That does not mean existing customers are useless. It means they answer a different question.

Ask existing customers when you want to understand:

- why current buyers chose you

- what they value after purchase

- what might improve retention

Do not rely on them alone when you want to understand:

- total market demand

- category objections

- competitor comparisons

- why non-buyers ignore you

Aim for representation, not convenience

A better sample includes people from the market you want, not just the audience you already have.

Ways to improve that:

- Use multiple recruitment paths: Email list, communities, partnerships, panels, outreach, review sites

- Screen carefully: Add a few qualifying questions before the main survey

- Balance segments: Make sure one customer type does not dominate the results

- Check device experience: If the survey is painful on mobile, people drop off or rush through it
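For the “balance segments” check, a few lines of Python can flag when one group swamps the sample. This sketch assumes each response already carries a segment label from your screening questions; the function names are illustrative, and the 50% cutoff is an arbitrary placeholder, not a standard.

```python
from collections import Counter


def segment_shares(segments: list[str]) -> dict[str, float]:
    """Return each segment's share of all responses (0.0 to 1.0)."""
    counts = Counter(segments)
    total = sum(counts.values())
    return {segment: n / total for segment, n in counts.items()}


def dominant_segments(segments: list[str], max_share: float = 0.5) -> list[str]:
    """List segments whose share exceeds max_share (an assumed threshold)."""
    return sorted(s for s, share in segment_shares(segments).items() if share > max_share)


if __name__ == "__main__":
    # Hypothetical sample: one segment label per completed response.
    sample = ["existing customer"] * 7 + ["prospect"] * 2 + ["churned"]
    print(segment_shares(sample))     # {'existing customer': 0.7, 'prospect': 0.2, 'churned': 0.1}
    print(dominant_segments(sample))  # ['existing customer']
```

If existing customers come out on top like this, that is the convenience-audience problem described above showing up in your own numbers.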

If you are collecting text from forums, reviews, or exported customer comments, keep your process clean and ethical. This is where teams often create avoidable messes.

The goal is not to collect the most answers. It is to collect answers from the right people in a format you can trust.

Clean before you analyze

Do a quick quality pass before you draw conclusions.

Look for:

- duplicate responses

- rushed or nonsense answers

- contradictory answers

- missing context on open-ended replies

- obvious category mix-ups

A short cleaning checklist helps:

- Remove junk responses

- Tag respondents by segment

- Separate customers from non-customers

- Group open-ended comments by topic

- Mark uncertain data instead of forcing a conclusion

Messy data can still be useful. Unchecked messy data turns into fake confidence. And fake confidence is expensive.
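The cleaning checklist above maps neatly onto a small script. This is a minimal sketch using only the Python standard library, assuming each response has a respondent ID, a completion time, one open-ended answer, a customer flag, and a segment tag. The field names and both thresholds (`MIN_SECONDS`, `MIN_ANSWER_WORDS`) are hypothetical and should be tuned to your survey's length; word count is a crude stand-in for spotting nonsense.

```python
from dataclasses import dataclass, field

MIN_SECONDS = 60       # hypothetical: completions faster than this are treated as rushed junk
MIN_ANSWER_WORDS = 3   # hypothetical: shorter open-ended answers are flagged, not dropped


@dataclass
class Response:
    respondent_id: str
    seconds_taken: int
    answer: str
    is_customer: bool
    segment: str = "untagged"
    flags: list[str] = field(default_factory=list)


def clean(responses: list[Response]) -> tuple[list[Response], list[Response]]:
    """Drop duplicates and rushed responses, flag thin answers, split customers from non-customers.

    Note: flags are appended to the Response objects in place.
    """
    seen_ids: set[str] = set()
    customers: list[Response] = []
    non_customers: list[Response] = []
    for r in responses:
        if r.respondent_id in seen_ids:      # duplicate: only the first submission per ID is considered
            continue
        seen_ids.add(r.respondent_id)
        if r.seconds_taken < MIN_SECONDS:    # rushed: likely junk
            continue
        if len(r.answer.split()) < MIN_ANSWER_WORDS:
            r.flags.append("uncertain: thin answer")  # mark it instead of forcing a conclusion
        (customers if r.is_customer else non_customers).append(r)
    return customers, non_customers


if __name__ == "__main__":
    raw = [
        Response("r1", 240, "Setup took too long for my team", True, "agency"),
        Response("r1", 240, "Setup took too long for my team", True, "agency"),  # duplicate
        Response("r2", 15, "asdf", False, "freelancer"),                          # rushed
        Response("r3", 300, "Too pricey", False, "freelancer"),                   # thin answer
    ]
    customers, non_customers = clean(raw)
    print([r.respondent_id for r in customers])                 # ['r1']
    print([(r.respondent_id, r.flags) for r in non_customers])  # [('r3', ['uncertain: thin answer'])]
```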

Find the Gold Hidden in Your Data

A spreadsheet rarely tells the story at first glance.

You might see a top-line percentage and feel tempted to call it a day. Don’t. That is where a lot of mediocre research stops, and it is exactly why teams miss the interesting part.

A major pitfall is stopping at top-line results. Deeper analysis uses cross-tabulation to segment the data by demographics or behaviors.

Top-line results answer, “What happened overall?”

Good analysis asks, “For whom did it happen, under what conditions, and what changed between groups?”

Segment first, summarize second

Averages smooth over important differences.

If one group loves your pricing and another group hates it, the average can look “fine.” Fine is a dangerous word in research. Fine usually means two different realities mashed together.
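Here is that “fine” average in miniature. The sketch below, in plain Python, assumes a 1 to 5 pricing-satisfaction score per respondent plus a segment label; the two segments and their scores are made up purely for illustration, and the function names are hypothetical. It also includes a basic cross-tabulation that counts categorical answers within each segment.

```python
from collections import Counter, defaultdict
from statistics import mean


def mean_by_segment(rows: list[tuple[str, int]]) -> tuple[float, dict[str, float]]:
    """rows are (segment, score) pairs. Returns the overall mean and the mean per segment."""
    by_segment: dict[str, list[int]] = defaultdict(list)
    for segment, score in rows:
        by_segment[segment].append(score)
    overall = mean(score for _, score in rows)
    return overall, {segment: mean(scores) for segment, scores in by_segment.items()}


def crosstab(rows: list[tuple[str, str]]) -> dict[str, dict[str, int]]:
    """Count each answer within each segment: a minimal cross-tabulation."""
    table: dict[str, Counter] = defaultdict(Counter)
    for segment, answer in rows:
        table[segment][answer] += 1
    return {segment: dict(counts) for segment, counts in table.items()}


if __name__ == "__main__":
    # Hypothetical pricing scores: one segment loves it, the other hates it.
    scores = [("freelancer", 5), ("freelancer", 5), ("freelancer", 4), ("freelancer", 5),
              ("agency", 1), ("agency", 2), ("agency", 1), ("agency", 2)]
    overall, per_segment = mean_by_segment(scores)
    print(overall)      # 3.125
    print(per_segment)  # {'freelancer': 4.75, 'agency': 1.5}
```

The overall 3.125 looks unremarkable; the per-segment numbers show two opposite reactions. If you already work in pandas, `pandas.crosstab` and `DataFrame.groupby` do the same job on larger files.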

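Useful cuts include demographics, region, buyer experience (first-time versus repeat), purchase intent, and satisfaction level.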

Questions worth asking:

- Do newer buyers care about speed more than experienced buyers?

- Do people in one region ask for different features?

- Do high-intent respondents use different language than casual browsers?

- Do dissatisfied users complain about the same thing, or several different things?

Treat comments like clues, not decoration

Open-ended responses are where buyers explain themselves without squeezing into a multiple-choice box.

Read them for recurring themes:

- confusion

- trust

- price pressure

- feature gaps

- onboarding friction

- timing issues

- comparison language

Then group comments by theme and by segment.

For example, if trial users complain about setup while long-term users complain about reporting, those are not the same product problem. One is acquisition friction. The other is retention friction.
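A rough first pass at grouping by theme and by segment can be done with keyword matching before you read everything closely. This sketch assumes comments arrive as (segment, text) pairs. The theme names echo this section, but the keyword lists and function names are hypothetical, and naive substring matching will miss sarcasm, typos, and anything phrased unexpectedly.

```python
from collections import Counter, defaultdict

# Hypothetical keyword codebook. Matching is naive substring search on lowercased text.
THEMES: dict[str, tuple[str, ...]] = {
    "onboarding friction": ("setup", "onboarding", "install", "getting started"),
    "reporting": ("report", "dashboard", "export"),
    "price pressure": ("price", "expensive", "cost"),
    "trust": ("trust", "security", "reviews"),
}


def tag_themes(comment: str) -> list[str]:
    """Return every theme whose keywords appear in the comment, or ["other"]."""
    text = comment.lower()
    matched = [theme for theme, words in THEMES.items() if any(w in text for w in words)]
    return matched or ["other"]


def themes_by_segment(comments: list[tuple[str, str]]) -> dict[str, list[tuple[str, int]]]:
    """Count themes per segment and rank them by frequency, most common first."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for segment, text in comments:
        counts[segment].update(tag_themes(text))
    return {segment: c.most_common() for segment, c in counts.items()}


if __name__ == "__main__":
    comments = [
        ("trial", "Setup was confusing"),
        ("trial", "Onboarding emails were too long"),
        ("trial", "Great product"),
        ("long-term", "I need better reports"),
        ("long-term", "The export to CSV breaks"),
    ]
    for segment, ranked in themes_by_segment(comments).items():
        print(segment, ranked)
```

In this made-up sample, trial users cluster around setup while long-term users cluster around reporting, which is the acquisition versus retention split described above.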

Turn patterns into a story

You are not trying to impress anyone with complexity. You are trying to answer a business question clearly.

A useful analysis narrative sounds like this:

- What we learned: Buyers do want the category

- Who feels it most strongly: A narrower subgroup with urgent need

- What blocks conversion: Unclear setup and weak trust signals

- What that means: Fix the onboarding message before adding more traffic

That structure makes your findings usable.

Data becomes valuable when it changes a decision, not when it fills a dashboard.


Prompts that help you analyze faster

If you are working with exported survey comments, support transcripts, review text, or interview notes, useful prompts include:

- Identify the most common complaints from low-satisfaction respondents

- Compare feature requests between first-time users and repeat buyers

- Group open-ended responses into themes and rank them by frequency

- Pull out exact customer phrases that describe the main pain point

- Summarize differences between respondents in the US and Europe

The magic is not in sounding technical. The magic is in asking your data better questions.
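For the exact-customer-phrases prompt, it helps to have a quick, non-AI baseline to check an assistant's summary against. This is a minimal sketch that counts two-word phrases across comments, counting each phrase at most once per comment so one long rant cannot dominate. The stopword list, sample reviews, and function name are hypothetical placeholders.

```python
import re
from collections import Counter

# Tiny hypothetical stopword list; phrases that start or end with one are skipped.
STOPWORDS = {"a", "an", "and", "the", "to", "of", "it", "is", "i", "my", "we",
             "for", "in", "on", "was", "this"}


def top_phrases(texts: list[str], n: int = 2, limit: int = 5) -> list[tuple[str, int]]:
    """Return the most common n-word phrases, each counted at most once per text."""
    counts: Counter = Counter()
    for text in texts:
        words = re.findall(r"[a-z']+", text.lower())
        grams = zip(*(words[i:] for i in range(n)))  # consecutive n-word windows
        # dict.fromkeys removes repeats within one text while keeping a stable order.
        phrases = dict.fromkeys(
            " ".join(g) for g in grams if g[0] not in STOPWORDS and g[-1] not in STOPWORDS
        )
        for phrase in phrases:
            counts[phrase] += 1
    return counts.most_common(limit)


if __name__ == "__main__":
    reviews = [
        "Chasing late invoices every month",
        "I hate chasing late invoices",
        "Late invoices kill my cash flow",
    ]
    print(top_phrases(reviews, limit=2))  # [('late invoices', 3), ('chasing late', 2)]
```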

Create Your Actionable Research Playbook

Research sitting in a folder is just expensive procrastination with charts.

A good market research process ends with decisions, owners, and next steps. Otherwise everyone nods through the findings, says “super insightful,” and promptly returns to doing whatever they were already doing.

Build a report people will read

You do not need a bloated report. You need a useful one.

A strong structure is simple:

- Executive summary: What matters most, in a few paragraphs.

- Key findings: The patterns that appeared consistently.

- Implications: What those findings mean for product, marketing, sales, or positioning.

- Recommended actions: The changes worth making now.

- Open questions: What still needs another round of research.

This format respects attention. It also forces you to move from observation to action.

Translate findings into decisions

Every insight should connect to one of these:

- Audience: Who should we target first?

- Offer: What should we change, remove, or emphasize?

- Message: Which language reflects buyer intent best?

- Channel: Where should we show up?

- Experience: What friction blocks trust or conversion?

If a finding does not help one of those areas, it may be interesting but not urgent.

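A simple action table helps: one row per finding, showing the decision area it affects, the action you will take, and who owns it.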
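If your action table lives in a script or notebook rather than a doc, a small structure can enforce the rule that every row maps to one of the five decision areas listed above. This is a hypothetical sketch: the `Action` fields, the `render` helper, and the plain-text layout are assumptions, not a standard format.

```python
from dataclasses import dataclass

# The five decision areas listed above.
AREAS = ("Audience", "Offer", "Message", "Channel", "Experience")


@dataclass
class Action:
    finding: str
    area: str
    action: str
    owner: str

    def __post_init__(self) -> None:
        # Findings that do not map to a decision area are "interesting but not urgent".
        if self.area not in AREAS:
            raise ValueError(f"{self.area!r} is not one of {AREAS}")


def render(actions: list[Action]) -> str:
    """Render actions as a pipe-separated text table with aligned columns."""
    rows = [("Finding", "Area", "Action", "Owner")]
    rows += [(a.finding, a.area, a.action, a.owner) for a in actions]
    widths = [max(len(row[i]) for row in rows) for i in range(len(rows[0]))]
    return "\n".join(
        " | ".join(cell.ljust(w) for cell, w in zip(row, widths)).rstrip() for row in rows
    )


if __name__ == "__main__":
    print(render([
        Action("Trial users stall at setup", "Experience", "Rewrite onboarding checklist", "Product"),
        Action("Buyers say 'late invoices', not 'cash flow'", "Message", "Update homepage headline", "Marketing"),
    ]))
```

Raising an error on an unknown area is a deliberate choice: it forces a conversation about whether the finding is actionable before it lands in the report.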

Keep the workflow in one place

This is where integrated AI tooling proves useful.

Existing guides often miss how integrated AI platforms support deep research synthesis. AI adoption in research surged 40% in the last year, yet many users still struggle with fragmented workflows.

That workflow problem is real. Research gets messy when your notes live in one app, transcripts in another, summaries in a third, and the final report in whatever doc tab you can still find.

One practical fix is to create a single research hub with:

- raw files

- cleaned notes

- competitor snapshots

- analysis outputs

- summary drafts

- final recommendations

If your team likes audio, a short spoken summary can help busy stakeholders absorb the findings without opening the report at all. A five-minute recap is often more useful than another unread document named “final_v7_reallyfinal.”

Frequently Asked Online Research Questions

How much should I budget for online market research?

It depends on scope.

You can do a surprising amount with lean methods, especially when you combine search analysis, competitor review, community research, and a small targeted survey. If you do run email surveys, the Andava comparison cited earlier puts them at $3,000 to $5,000, far below offline surveys that can exceed $100,000.

If you are on a tight budget, start with:

- search intent research

- review mining

- social listening

- a small, focused survey

That gets you signal without pretending you are running a global consumer panel from your kitchen table.

How do I do research if I do not have an audience yet?

Borrow proximity to the market.

Use:

- communities where your buyers already talk

- review sites for competing tools or products

- public comments on social platforms

- partnerships with creators or niche newsletters

- targeted outreach to likely users

The mistake is waiting to “build an audience first.” Research is one of the things that helps you build the right audience.

How do I know if my results are reliable?

Look for three things:

- Better sampling: You asked the right types of people, not just the easiest people to reach.

- Clear questions: No leading wording, no stacked questions, no jargon salad.

- Pattern consistency: The same themes show up across more than one method.

When search behavior, survey responses, and customer comments all point in the same direction, confidence improves. When they disagree, do not force a neat conclusion. That tension usually means you found something worth investigating.
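The pattern-consistency check can be made explicit. A minimal sketch, assuming you have already reduced each method's output to a set of short theme labels; the labels, the function name, and the two-method threshold are hypothetical.

```python
from collections import Counter


def corroborated_themes(
    findings: dict[str, set[str]], min_methods: int = 2
) -> tuple[list[str], list[str]]:
    """Split themes into those seen in at least min_methods methods and weaker-evidence ones."""
    # set(themes) guards against a method listing the same theme twice.
    counts = Counter(theme for themes in findings.values() for theme in set(themes))
    confirmed = sorted(t for t, n in counts.items() if n >= min_methods)
    needs_checking = sorted(t for t, n in counts.items() if n < min_methods)
    return confirmed, needs_checking


if __name__ == "__main__":
    findings = {
        "search": {"setup time", "pricing"},
        "survey": {"setup time", "trust"},
        "comments": {"setup time", "trust", "integrations"},
    }
    confirmed, needs_checking = corroborated_themes(findings)
    print(confirmed)       # ['setup time', 'trust']
    print(needs_checking)  # ['integrations', 'pricing']
```

Themes that show up in only one method are not necessarily wrong. They are the ones to investigate further rather than headline in the report.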

If you want one place to organize research notes, analyze documents, compare competitors, and turn messy findings into something your team can act on, take a look at Zemith. It is built for people who want fewer tabs, fewer tool hops, and a cleaner way to do serious research online.

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

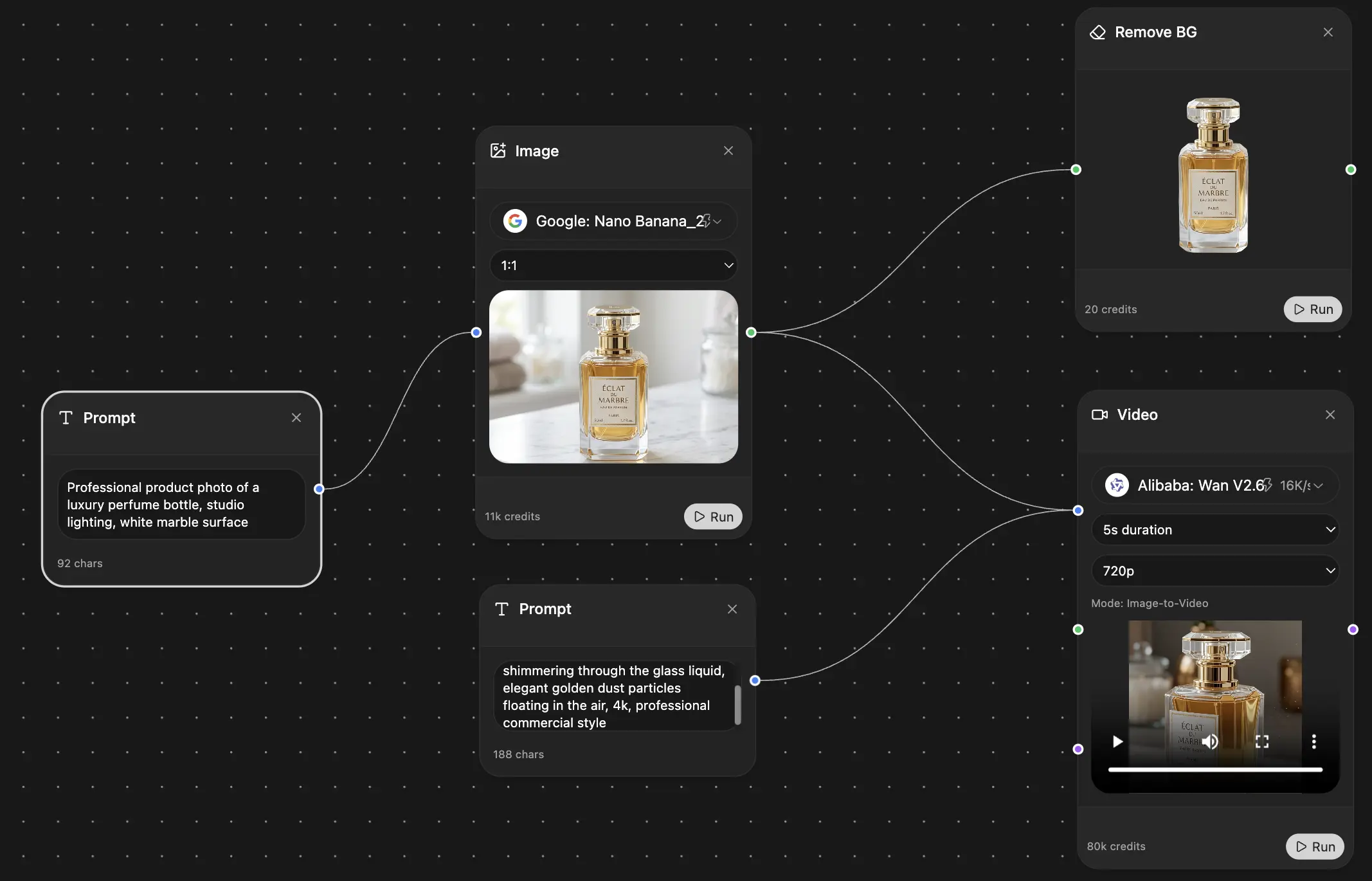

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers

What Our Users Say

Great Tool after 2 months usage

"I love the way multiple tools they integrated in one platform. Going in the right direction."

— simplyzubair

Best in Kind!

"The quality of data and sheer speed of responses is outstanding. I use this app every day."

— barefootmedicine

Simply awesome

"The credit system is fair, models are perfect, and the discord is very responsive. Quite awesome."

— MarianZ

Great for Document Analysis

"Just works. Simple to use and great for working with documents. Money well spent."

— yerch82

Great AI site with accessible LLMs

"The organization of features is better than all the other sites — even better than ChatGPT."

— sumore

Excellent Tool

"It lives up to the all-in-one claim. All the necessary functions with a well-designed, easy UI."

— AlphaLeaf

Well-rounded platform with solid LLMs

"The team clearly puts their heart and soul into this platform. Really solid extra functionality."

— SlothMachine

Best AI tool I've ever used

"Updates made almost daily, feedback is incredibly fast. Just look at the changelogs — consistency."

— reu0691