AI Brain Fry Is Real: Why More AI Tools Are Making You Less Productive

BCG research on 1,488 workers found that using 4+ AI tools drops productivity. Here's what 2026's data says about AI tool overload and how many you actually need.

TL;DR

What you need to know: Adding more AI tools past a certain point actively hurts your output. A BCG study of 1,488 workers found productivity peaks with 1-3 AI tools, then declines at 4 or more.

Key findings:

- BCG (March 2026): using 4+ AI tools triggers "AI brain fry" — mental fatigue from managing more tools than you can effectively oversee

- High-oversight AI use causes 14% more mental effort, 12% greater fatigue, 19% more information overload

- Goldman Sachs found "no meaningful relationship" between AI adoption and economy-wide productivity

- AI delivers ~30% productivity gains only in narrow, well-defined tasks: customer support and software development

- Software developers predicted AI would save them 24% of their time — a controlled study found it actually made them 19% slower

- The fix is consolidation, not expansion: fewer tools used deeply outperform many tools used shallowly

You probably know this feeling. You started with ChatGPT. Then added Claude because someone said it was better for writing. Then Perplexity for research. Then an AI notetaker for meetings. Then a dedicated coding assistant. Now you have six AI subscriptions and somehow feel busier than before.

BCG has a name for this: AI brain fry.

In March 2026, BCG published research in Harvard Business Review covering 1,488 full-time U.S. workers. The finding that got the most attention wasn't that AI doesn't work. It was that too much AI makes you worse at your job.

What Is AI Brain Fry?

BCG defines it as "mental fatigue from excessive use or oversight of AI tools beyond one's cognitive capacity."

Participants described it with phrases like: a "buzzing" feeling, mental fog, difficulty focusing, slower decision-making. One senior engineering manager put it plainly: "I was working harder to manage the tools than to actually solve the problem."

That sentence sums up the trap. You add AI tools to offload cognitive work. But each tool creates its own overhead: prompting it, evaluating its output, catching its mistakes, switching contexts. At some point the overhead costs more than the tool saves.

The BCG data shows where that tipping point is. Productivity increased when workers went from 1 to 2 tools, continued rising at 3, then dropped at 4 or more.

Marketing workers were the most affected — 26% reported AI brain fry symptoms. Engineering, finance, HR, and IT all showed significant rates. Legal was lowest at 6%.

The Numbers Behind the Overload

When AI tasks required high levels of human oversight, BCG measured specific cognitive costs:

- 14% more mental effort than non-AI tasks

- 12% greater mental fatigue

- 19% greater information overload

The effort isn't coming from the work itself. It's coming from supervising the AI. You still have to read every output, check for hallucinations, decide what to keep, edit what's wrong. That's a new job that didn't exist before, and it compounds when you're doing it across multiple tools simultaneously.

This is consistent with what we know about cognitive load theory. Your working memory has a finite capacity. Every tool you add requires a context-switch cost and an evaluation burden. When those costs stack up, your output quality drops even as your tool count rises.
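The stacking effect described above can be made concrete with a toy model. The sketch below is an illustration, not BCG's methodology: every number in it (minutes saved, the diminishing-returns factor, the per-tool supervision cost, the per-pair switching cost) is an invented assumption, chosen only to show how a peak can emerge when savings grow slowly and overhead grows quickly.

```python
# Toy model of tool overhead. All parameters are hypothetical, not measured values.

def net_minutes_saved(n_tools: int,
                      first_tool_saving: float = 60.0,  # assumed minutes/day saved by the first tool
                      diminishing: float = 0.7,         # assumed: each extra tool saves 70% of the previous one
                      supervision_cost: float = 10.0,   # assumed minutes/day spent checking each tool's output
                      switch_cost: float = 8.0) -> float:  # assumed minutes/day per pair of tools in use
    """Return daily minutes saved after subtracting supervision and context switching."""
    savings = sum(first_tool_saving * diminishing ** k for k in range(n_tools))
    supervision = supervision_cost * n_tools
    # Switching overhead grows with the number of tool pairs: n * (n - 1) / 2.
    switching = switch_cost * n_tools * (n_tools - 1) / 2
    return savings - supervision - switching


def best_tool_count(max_tools: int = 6) -> int:
    """Return the tool count in 1..max_tools with the highest net saving."""
    return max(range(1, max_tools + 1), key=net_minutes_saved)


if __name__ == "__main__":
    for n in range(1, 7):
        print(f"{n} tools: {net_minutes_saved(n):6.1f} net minutes saved per day")
    print("Best count under these assumptions:", best_tool_count())
```

With these invented parameters, the net saving rises through three tools, falls at four, and turns negative at six. Different assumptions move the peak; the point is only that diminishing savings and faster-growing overhead eventually cross.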

Goldman Sachs: The Bigger Picture

The BCG finding doesn't stand alone. In early March 2026, Goldman Sachs published its own uncomfortable conclusion: "We still do not find a meaningful relationship between productivity and AI adoption at the economy-wide level."

That's a remarkable statement given how much has been invested in AI tooling across enterprises over the past three years.

Goldman did find productivity gains, but only in two specific contexts: customer support and software development tasks. In those narrow, well-defined use cases, the median productivity gain was around 30%. The key phrase is "well-defined": tasks with clear success criteria, measurable outputs, and limited oversight requirements, not open-ended knowledge work where you're constantly judging quality.

For context on how few companies are actually measuring any of this: only 10% of S&P 500 management teams quantified AI's impact on specific use cases. Only 1% quantified its impact on earnings. Meanwhile, 70% discussed AI on quarterly calls.

Most companies are talking about AI productivity without measuring it.

The Developer Experiment That Broke the Narrative

The METR study from 2025 is the most concrete data point on this. METR researchers recruited 16 experienced open-source developers to complete 246 tasks, each randomly assigned to allow or disallow AI tools. When AI was allowed, the developers mostly used Cursor Pro with Claude 3.5/3.7 Sonnet, one of the best AI coding setups available at the time.

Before the study, the developers predicted AI would make them 24% faster.

The actual result: they were 19% slower.

What makes this finding particularly striking is the post-study survey. Even after completing the tasks and experiencing the slowdown firsthand, the developers still believed AI had made them faster. They predicted a 24% speedup going in, and after being measurably slower, they still estimated they'd been 20% faster.

This is a perception gap, not just a productivity gap. When you feel productive using AI (outputs arrive quickly, you're generating more content, the effort feels like forward motion), it's easy to mistake that sensation for actual efficiency gains.

Sometimes the feeling of productivity and actual productivity are pointing in opposite directions.
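To put rough numbers on that gap, the sketch below converts the three percentages into minutes for a hypothetical task. It assumes a 100-minute baseline (an invented figure) and reads "X% faster" as X% less time and "19% slower" as 19% more time. That reading is an assumption about how the percentages are defined, not something the figures above spell out.

```python
# Worked arithmetic for the perception gap. The 100-minute baseline is hypothetical.

def minutes_with_change(baseline_minutes: int, percent_change: int) -> float:
    """Apply a percent change in completion time (negative = less time, positive = more time)."""
    return baseline_minutes * (100 + percent_change) / 100


BASELINE = 100  # assumed minutes a task takes without AI

predicted = minutes_with_change(BASELINE, -24)  # forecast before the study: 24% faster
actual = minutes_with_change(BASELINE, +19)     # measured result: 19% slower
perceived = minutes_with_change(BASELINE, -20)  # belief after the study: 20% faster

perception_gap = actual - perceived  # minutes between what happened and what it felt like

if __name__ == "__main__":
    print(f"Predicted: {predicted:.0f} min, actual: {actual:.0f} min, perceived: {perceived:.0f} min")
    print(f"Perception gap: {perception_gap:.0f} min on a {BASELINE}-minute task")
```

Under those assumptions, a task that felt like 80 minutes actually took 119, a 39-minute difference the developers did not notice.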

Why More Tools Feel Like Progress

There's a psychological reason the AI tool stack keeps growing even as the returns diminish.

Each new tool solves a real problem in isolation. The AI notetaker genuinely does take better notes than you would. The dedicated research tool does surface sources faster than manual searching. The coding assistant does autocomplete boilerplate faster than typing it by hand.

The problem isn't any individual tool. It's the system they create together.

When you have six tools, you also have six interfaces to learn, six different prompt styles to master, six outputs to evaluate simultaneously, and six subscription fees to justify. The cognitive overhead becomes ambient. You're not just working — you're managing your AI stack.

This is also why the free vs. paid AI tools question is more complicated than it looks. It's not just about cost. Every additional paid tool is another set of decisions: when to use it, whether to trust its output, how to integrate its results with what your other tools are producing.

What Actually Works

The BCG research and Goldman data point to the same underlying principle: narrow, deep use of AI beats broad, shallow use.

A few patterns that show up in the data:

Specific task assignment. Workers who assigned discrete, well-bounded tasks to AI (summarize this, draft a response, find citations for this claim) showed better outcomes than those who tried to use AI as a general thought partner throughout their workflow. The more you define the task, the less oversight it requires.

One tool per workflow. Rather than bouncing between three AI tools for a single project, choosing one and using it deeply reduces the context-switching overhead. You learn its strengths and failure modes. You develop judgment about when to trust it.

Human review at boundaries, not throughout. Reviewing AI output at decision points rather than continuously throughout a task reduces the cognitive monitoring burden. Let it run, then evaluate. Don't read over its shoulder.

This connects directly to what makes automating daily tasks with AI actually work: automation that requires constant human intervention isn't really automation. It's just outsourcing to a tool that's slightly faster than you but needs babysitting.

The Consolidation Argument

If BCG is right that the productivity curve peaks at 1-3 tools, most knowledge workers are already past the optimal point.

The practical implication isn't "stop using AI." It's to be deliberate about which 2-3 tools earn a permanent place in your stack and to ruthlessly cut the rest.

The criteria worth applying:

- Does this tool reduce supervision requirements, or does it create them?

- Can I define a specific set of tasks this handles better than anything else?

- Does the output quality justify the evaluation overhead?

- Am I using this because it's genuinely helpful, or because I don't want to miss out?

That last question matters. FOMO is a real driver of AI tool adoption, and it doesn't correlate with productivity.
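One way to apply the four questions is to run them as a quick audit of your current stack. The sketch below is a hypothetical helper, not a method from the BCG research: the `ToolAudit` fields, the equal weighting, the minimum score of 3, and the cap of 3 tools are all assumptions, and the tool names are placeholders.

```python
# Hypothetical audit of an AI tool stack using the four questions above.
from dataclasses import dataclass


@dataclass
class ToolAudit:
    name: str
    reduces_supervision: bool       # does it lower, rather than add, oversight work?
    has_defined_tasks: bool         # is there a specific set of tasks it handles best?
    quality_justifies_review: bool  # is the output worth the time spent checking it?
    kept_for_fomo: bool             # is fear of missing out the main reason it is still here?

    def score(self) -> int:
        """Count the criteria passed (0 to 4). Equal weighting is an assumption."""
        return sum([
            self.reduces_supervision,
            self.has_defined_tasks,
            self.quality_justifies_review,
            not self.kept_for_fomo,
        ])


def tools_to_keep(audits: list[ToolAudit], max_tools: int = 3, min_score: int = 3) -> list[str]:
    """Keep at most max_tools tools, highest score first, dropping any below min_score."""
    passing = [a for a in audits if a.score() >= min_score]
    ranked = sorted(passing, key=lambda a: a.score(), reverse=True)
    return [a.name for a in ranked[:max_tools]]


if __name__ == "__main__":
    stack = [  # placeholder tools and answers, for illustration only
        ToolAudit("chat assistant", True, True, True, False),
        ToolAudit("meeting notetaker", True, True, False, False),
        ToolAudit("second chat assistant", False, False, True, True),
        ToolAudit("research tool", False, True, True, False),
    ]
    print(tools_to_keep(stack))
```

The answers are still judgment calls; the code only forces you to give each tool an explicit yes or no on every question before deciding what stays.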

For solopreneurs and independent workers, this is especially relevant. You don't have a team to absorb the overhead of managing a complex AI stack. Every tool you add is one more thing only you can evaluate and maintain.

FAQ

Is AI brain fry permanent?

No. BCG describes it as situational fatigue, not chronic damage. Workers who reduced their AI tool count or shifted to lower-oversight AI tasks recovered. The cognitive load model suggests the effect is reversible when you reduce the demands on working memory.

Which professions are most at risk?

Marketing had the highest rate (26% of workers reported symptoms), followed by people operations, operations, engineering, finance, and IT. Work that involves constant judgment, quality evaluation, and decision-making creates more oversight demands than structured, repetitive tasks.

Does this mean AI tools don't work?

Not exactly. Goldman Sachs found real 30% productivity gains in customer support and software development. BCG found gains at 1-3 tools. The issue is scale and oversight — AI works well when tasks are narrow and outputs are easy to evaluate. It struggles when tasks are open-ended and quality requires expert judgment.

How many AI tools should I be using?

BCG's data suggests 1-3 is the productive range for most workers. Past that, you're likely spending more cognitive energy managing tools than you're saving. The right number depends on how bounded your use cases are and how much oversight each tool requires.

Is the METR developer study conclusive?

The researchers themselves were careful here. The study covered early-2025 AI capabilities on a specific type of open-source development task. Results may differ for other task types or with newer models. It's one strong data point, not a universal law.

Conclusion

The most counterintuitive finding from early 2026: the people getting the most out of AI aren't the ones using the most AI tools. They're the ones who figured out which 2-3 tasks AI does reliably well and built their workflow around those, rather than trying to AI-ify everything.

AI brain fry is real, it's measurable, and it's being created by the same instinct that drives most software adoption: when something's useful, add more of it. That logic works until it doesn't.

The data now gives you the threshold. Most of us have already crossed it.

If you want an AI platform that handles research, writing, and analysis in one place instead of requiring you to juggle multiple subscriptions, Zemith is built around that consolidation model.

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

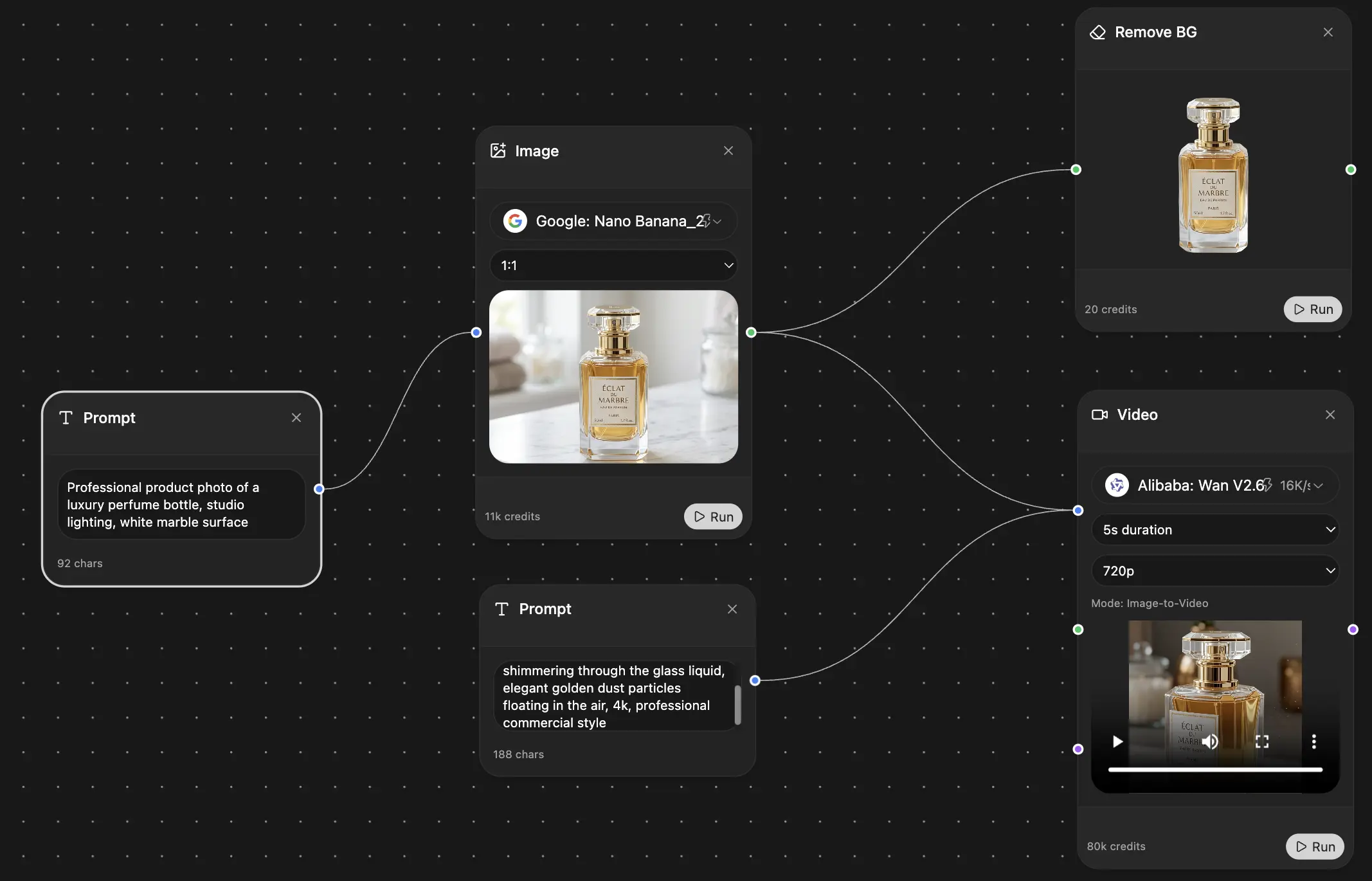

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers