AI Prompt Engineering Tips for Beginners: Get Better Results Starting Today

Learn 5 practical prompt engineering techniques that work with ChatGPT, Claude, and Gemini. No experience needed — just better output from AI you already use.


TL;DR

What you need to know: The difference between a mediocre AI response and a great one is almost always the prompt. Specific, context-rich prompts consistently outperform vague ones — and the techniques take about an hour to learn.

Key findings:

- Role + goal + output format in a single prompt is the highest-impact technique for beginners

- Chain-of-thought prompting ("think step by step") significantly improves AI reasoning on complex tasks

- Few-shot examples (giving the AI 1-3 sample outputs first) are the fastest way to get consistent, style-matched results

- Explicit formatting instructions (word count, bullet points, tables) dramatically reduce editing time

- All five techniques work with ChatGPT, Claude, Gemini, and any other major LLM

You're already using AI. But results are inconsistent. Sometimes it writes exactly what you needed. Other times it's generic, too long, or completely misses the point.

The AI isn't broken. The prompt is.

Prompt engineering sounds technical. It's really just learning to communicate what you want clearly. Five techniques cover most everyday cases, and you can apply all of them today.

What Makes a Bad Prompt

Most people write AI prompts the way they type a Google search: short, vague, no context.

"Write an email about our product launch."

The AI doesn't know your product, your audience, your tone, or what you want the email to accomplish. Without that context, it takes its best guess, and that guess is usually the average, safest version of the request.

The AI can only work with what you give it. Give it more, get more back.

Technique 1: The Core Formula (Role + Goal + Format)

This single change will improve most of your prompts immediately.

Instead of only telling the AI what to do, tell it three things:

- Who it is (role)

- What you want (goal + context)

- How you want it (output format)

Before:

Write an email about our product launch.

After:

You are a product marketer at a B2B SaaS company. Write a 3-paragraph email to existing customers announcing a new analytics feature. Tone: professional but not stiff. End with a CTA to book a 15-minute demo call.

The second prompt gives the AI a lens. The output will be dramatically more useful and need far less editing.
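If you reuse the core formula often, it can help to keep the three parts separate and assemble them in code. The following is a minimal Python sketch, not a standard API: the function name `build_prompt` and the "Output format:" label are assumptions made for illustration.

```python
def build_prompt(role: str, goal: str, output_format: str) -> str:
    """Combine role, goal, and output format into one prompt string."""
    parts = [
        f"You are {role}.",                          # who the AI is
        goal.strip(),                                # what you want, with context
        f"Output format: {output_format.strip()}",   # how you want it
    ]
    return "\n".join(parts)


# Values taken from the "After" prompt above.
prompt = build_prompt(
    role="a product marketer at a B2B SaaS company",
    goal=(
        "Write an email to existing customers announcing a new analytics "
        "feature. Tone: professional but not stiff."
    ),
    output_format="3 paragraphs, ending with a CTA to book a 15-minute demo call.",
)
print(prompt)
```

Keeping the parts as separate arguments makes it easy to swap only the role or only the format while leaving the rest of the prompt unchanged.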

Technique 2: Role Prompting

Assigning the AI a specific role shapes its vocabulary, assumptions, and level of detail.

"Act as a senior software engineer reviewing this code for security vulnerabilities" gets a very different response than "review this code."

High-performing role templates:

- "You are a [profession] with [X] years of experience in [specialty]. Your audience is [target audience]."

- "Act as a [specific expert] who specializes in [niche area]."

- "You are a [role] writing for [specific audience]. Use language appropriate for [background level]."

The role doesn't need to be a real job title. "Act as a highly critical editor who cuts unnecessary words without mercy" works perfectly for getting tight, edited copy.

A few roles worth keeping handy:

| Task | Effective Role |

|---|---|

| Email drafting | "Product marketer writing to [audience]" |

| Code review | "Senior engineer focused on security/performance" |

| Simplifying concepts | "Technical writer explaining to non-technical readers" |

| Strategy work | "Business consultant with [industry] expertise" |

| Writing feedback | "Harsh but constructive editor who values clarity" |
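The role templates in this section are fill-in-the-blank strings, so they map naturally onto Python's `str.format`. The sketch below is one possible way to store them. The dictionary keys, the helper name `with_role`, and the sample values (8 years, developer documentation) are hypothetical and only there to make the example runnable.

```python
# First template from the list above, with named placeholders.
ROLE_TEMPLATE = (
    "You are a {profession} with {years} years of experience in {specialty}. "
    "Your audience is {audience}."
)

# A few roles from the table above, keyed by task (key names are illustrative).
ROLES_BY_TASK = {
    "code_review": "senior engineer focused on security/performance",
    "simplifying_concepts": "technical writer explaining to non-technical readers",
    "writing_feedback": "harsh but constructive editor who values clarity",
}


def with_role(task: str, request: str) -> str:
    """Prefix a request with the stored role for the given task."""
    return f"Act as a {ROLES_BY_TASK[task]}. {request}"


# Sample values below are made up for illustration.
role_line = ROLE_TEMPLATE.format(
    profession="technical writer",
    years=8,
    specialty="developer documentation",
    audience="non-technical managers",
)
print(role_line)
print(with_role("code_review", "Review this code."))
```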

Technique 3: Chain-of-Thought Prompting

For complex tasks, tell the AI to think through the problem before answering. This is called chain-of-thought prompting, and it significantly improves output quality on reasoning-heavy tasks.

The simplest version: add "Think step by step before giving your final answer" to any prompt.

Good use cases:

- Complex analysis or strategic questions

- Troubleshooting code or technical problems

- Math and logic tasks

- Decision-making scenarios with trade-offs

Example:

I'm choosing between two CRMs for a 5-person team. We need email integration, a mobile app, and a pipeline view. Our budget is $50/month. Think through the trade-offs step by step before making a recommendation.

This forces the AI to show its reasoning. The output becomes more useful and the logic is easier to check.

If you're using reasoning models (OpenAI's o1, o3, or Claude's extended thinking mode), they do this automatically. For standard chat models, the nudge matters.
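If you send prompts from a script, the nudge can be added conditionally. This is a small sketch under stated assumptions: the `reasoning_model` flag is a hypothetical parameter that you set yourself, not something a model or API reports.

```python
COT_NUDGE = "Think step by step before giving your final answer."


def add_chain_of_thought(prompt: str, reasoning_model: bool = False) -> str:
    """Append the step-by-step nudge unless it is unnecessary or already present."""
    if reasoning_model or COT_NUDGE in prompt:
        return prompt
    return f"{prompt.rstrip()} {COT_NUDGE}"


question = "Which of these two CRMs fits a 5-person team on $50/month?"
print(add_chain_of_thought(question))
print(add_chain_of_thought(question, reasoning_model=True))
```

Checking whether the nudge is already present keeps the helper safe to call more than once on the same prompt.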

Technique 4: Few-Shot Examples

This is the fastest way to get consistent, style-matched output: show the AI exactly what you want before asking it to do it.

Give the AI 1-3 examples of the format, style, or structure you're after. Then make your request.

Template:

Here are examples of the tone I want:

Example 1: [input] → [output]

Example 2: [input] → [output]

Now do the same for: [your actual request]

Real example:

Here are examples of the writing style I want:

Input: Product announcement for a scheduling tool

Output: "Scheduling just got easier. Set your availability once, share a link, done. No more back-and-forth emails."

Input: Product announcement for a file storage tool

Output: "Your files, wherever you are. Upload from any device, access from all of them. Simple."

Now write one for: a new AI chat assistant feature in our project management tool.

The AI picks up on length, rhythm, and tone from your examples. Two examples is usually enough.
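The few-shot template has a regular shape (an intro line, input/output pairs, then the request), so it can be generated from a list of examples. The sketch below is one way to do that. The function name `build_few_shot_prompt` is an assumption, and the layout simply mirrors the real example above.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    intro: str, examples: List[Tuple[str, str]], request: str
) -> str:
    """Lay out input/output example pairs, then the actual request."""
    lines = [intro, ""]
    for example_input, example_output in examples:
        lines.append(f"Input: {example_input}")
        lines.append(f'Output: "{example_output}"')
        lines.append("")  # blank line between examples
    lines.append(f"Now write one for: {request}")
    return "\n".join(lines)


few_shot = build_few_shot_prompt(
    intro="Here are examples of the writing style I want:",
    examples=[
        (
            "Product announcement for a scheduling tool",
            "Scheduling just got easier. Set your availability once, "
            "share a link, done. No more back-and-forth emails.",
        ),
        (
            "Product announcement for a file storage tool",
            "Your files, wherever you are. Upload from any device, "
            "access from all of them. Simple.",
        ),
    ],
    request="a new AI chat assistant feature in our project management tool.",
)
print(few_shot)
```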

Technique 5: Output Format Instructions

Most beginners forget to specify how they want the output structured. The AI defaults to whatever feels natural, which may not match what you need.

Tell it exactly what you want:

| Instead of... | Try... |

|---|---|

| "Write a summary" | "Summarize in 3 bullet points, each under 15 words" |

| "Analyze this data" | "Format as a table: Metric, Value, What It Means" |

| "Give me pros and cons" | "List 3 pros and 3 cons. One sentence each." |

| "Explain this concept" | "Explain for a smart non-technical reader. Under 100 words." |

| "Draft a report" | "Use markdown headers. Start each section with the key takeaway." |

Formatting instructions don't just make output prettier. They force the AI to prioritize differently. "3 bullet points, each under 15 words" forces concision. "Start with the key takeaway" forces bottom-line-up-front structure.
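A side benefit of concrete format instructions is that you can check a response against them mechanically. The following is a rough sketch for the "3 bullet points, each under 15 words" instruction. The function name, the set of bullet markers, and the whitespace-based word count are all simplifying assumptions.

```python
from typing import List

BULLET_MARKERS = ("-", "*", "•")


def check_bullet_summary(
    text: str, expected_bullets: int = 3, max_words: int = 15
) -> List[str]:
    """Return a list of problems; an empty list means the format was followed."""
    bullets = [
        line.strip()
        for line in text.splitlines()
        if line.strip().startswith(BULLET_MARKERS)
    ]
    problems = []
    if len(bullets) != expected_bullets:
        problems.append(f"expected {expected_bullets} bullets, found {len(bullets)}")
    for number, bullet in enumerate(bullets, start=1):
        # Drop the bullet marker, then count whitespace-separated words.
        word_count = len(bullet.lstrip("-*• ").split())
        if word_count >= max_words:  # "under 15 words" means at most 14
            problems.append(
                f"bullet {number} has {word_count} words, limit is under {max_words}"
            )
    return problems


# Sample response text, made up for illustration.
good_response = "\n".join(
    [
        "- Revenue grew 12% year over year",
        "- Churn fell after the onboarding redesign",
        "- Hiring remains the main bottleneck",
    ]
)
print(check_bullet_summary(good_response))
print(check_bullet_summary("- Only one point"))
```

If the check reports problems, the problem list itself can serve as the follow-up message to the AI.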

Putting It Together: Before vs. After

Here's the full technique stack applied to a real scenario.

Scenario: You want a LinkedIn post about a career lesson you learned.

Basic prompt:

Write a LinkedIn post about a lesson I learned about time management.

Result: Generic. Could have been written by anyone, about anything.

Engineered prompt:

You are a senior product manager with 10 years of experience. Write a LinkedIn post about learning to say no to meetings that don't require your decision-making. Tone: honest and direct, not preachy. No hashtags. Under 150 words. Start with a short punchy opening line that hooks the reader. End with one practical tip.

Result: Specific, well-structured, sounds like it came from someone with real experience.

The difference isn't complexity. It's specificity. More concrete constraints give the AI less room to default to generic.

How to Iterate When Results Are Still Off

Good prompts are rarely written on the first try. Treat it like a draft.

If the first output is 80% there, continue the conversation:

- "Good. Now make it 30% shorter."

- "Remove the formal language — make it sound more like a colleague talking."

- "The third point is weak. Rewrite just that section with a concrete example."

- "Change the tone from persuasive to informational."

You don't need to rewrite the entire prompt every time. Build on what's working.

This is especially useful for writing and content tasks. For a deeper look at AI tools built specifically for writing workflows, see the best AI for writing in 2026.
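If you work through an API rather than a chat window, iterating usually means resending the conversation with one more message appended. The sketch below assumes the common convention of a list of messages with `role` and `content` fields. Exact schemas vary by provider, the helper name `add_turn` is hypothetical, and the assistant text is a placeholder rather than real model output.

```python
from typing import Dict, List

Message = Dict[str, str]


def add_turn(messages: List[Message], role: str, content: str) -> List[Message]:
    """Return a new message list with one more turn appended."""
    return messages + [{"role": role, "content": content}]


conversation: List[Message] = []
conversation = add_turn(
    conversation, "user", "Write a LinkedIn post about learning to say no to meetings."
)
# Placeholder standing in for whatever the model returned.
conversation = add_turn(conversation, "assistant", "<first draft returned by the model>")
# Build on what is working instead of rewriting the whole prompt.
conversation = add_turn(conversation, "user", "Good. Now make it 30% shorter.")

for message in conversation:
    print(f"{message['role']}: {message['content']}")
```

Because the earlier turns stay in the list, the short follow-up request carries all the context of the original prompt.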

Common Mistakes

Too vague. "Help me with marketing" vs "Write three subject line options for a re-engagement email to customers who haven't purchased in 90 days." The second prompt leaves almost no room for a bad answer.

Too many tasks at once. Don't cram five requests into one prompt. Break complex tasks into steps: ask for an outline first, then expand each section.

No context about your audience. The AI doesn't know if you're writing for a 12-year-old or a PhD. State it explicitly.

Accepting the first answer. Iterate. Most good outputs take 2-3 exchanges, not one perfectly worded prompt.

Expecting it to read your mind. If you have constraints (word count, must-avoid topics, preferred format), state them upfront. Don't discover that you needed to add them after reading a response you can't use.

Once you have solid prompts for common tasks, you can use them as building blocks for automating repetitive AI workflows with tools like Zapier or Make.

Does This Work on All AI Models?

Yes. The core techniques work across ChatGPT, Claude, Gemini, and any other major LLM.

A few minor differences worth knowing:

ChatGPT (GPT-4o): Responds well to structured prompts with clear sections. Handles multi-constraint prompts cleanly. Fast for most tasks.

Claude (Anthropic): Particularly good at following detailed, nuanced instructions. Handles long context and subtle tone requirements better than most models. If you're specific about voice and style, Claude usually delivers.

Gemini (Google): Strong on tasks that benefit from real-time information access. Same prompting techniques apply; output style differs slightly.

The techniques on this page work with all of them. Pick the model you already use and apply them there.

If you're still deciding which AI to work with, the ChatGPT vs Claude comparison covers the practical differences for common use cases.

FAQ

What's the difference between prompt engineering and just "using AI"?

Prompt engineering is the deliberate practice of designing prompts to get specific, high-quality output. Most people "use AI" by typing casual requests and accepting whatever comes back. Prompt engineering means being intentional about what you ask and how you frame it. The gap between the two is often a large difference in output quality.

How long should a prompt be?

Long enough to include role, goal, context, and format instructions — short enough to stay clear. For most tasks, 50-150 words is the sweet spot. Complex technical tasks can go longer. Simple formatting or summarization requests can be a single sentence. If your prompt is longer than a paragraph and still vague, that's a sign you need to be more specific rather than longer.

Do I need to memorize these techniques?

No. Most people internalize 2-3 techniques after a week of deliberate practice. Start with the core formula (role + goal + format) since it improves every prompt immediately. Add the others when you encounter a task where they'd help.

What if I follow all these tips but still get bad results?

Try a different model — some tasks genuinely suit some models better. Also check whether your role and context are actually accurate and internally consistent. If you tell the AI to "write like an expert" but your examples are casual, it gets conflicting signals. And iterate: treat the first response as a starting point, not a finished product.

Is this worth learning when AI tools keep improving?

Yes. Better models still respond better to well-structured prompts. The gap between a good prompt and a vague one hasn't closed as models have improved — if anything, more capable models have more room to do better things when given clear direction. The basics you learn today will apply to every model that comes after.

Conclusion

Most people get a fraction of what AI can deliver because they haven't changed how they ask. Add a role, specify the output format, give the AI a concrete goal — results improve immediately.

The five techniques are:

- Role + goal + format in one prompt

- Assign a specific role that fits the task

- Ask it to think step by step for complex reasoning

- Give examples when you want consistent style or structure

- State exactly how you want the output formatted

Start with just the first one. The core formula alone will make a noticeable difference on any task you use AI for regularly.

For anyone who wants to apply these techniques across multiple AI models without switching between tabs, Zemith gives you access to ChatGPT, Claude, and others in one place.

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

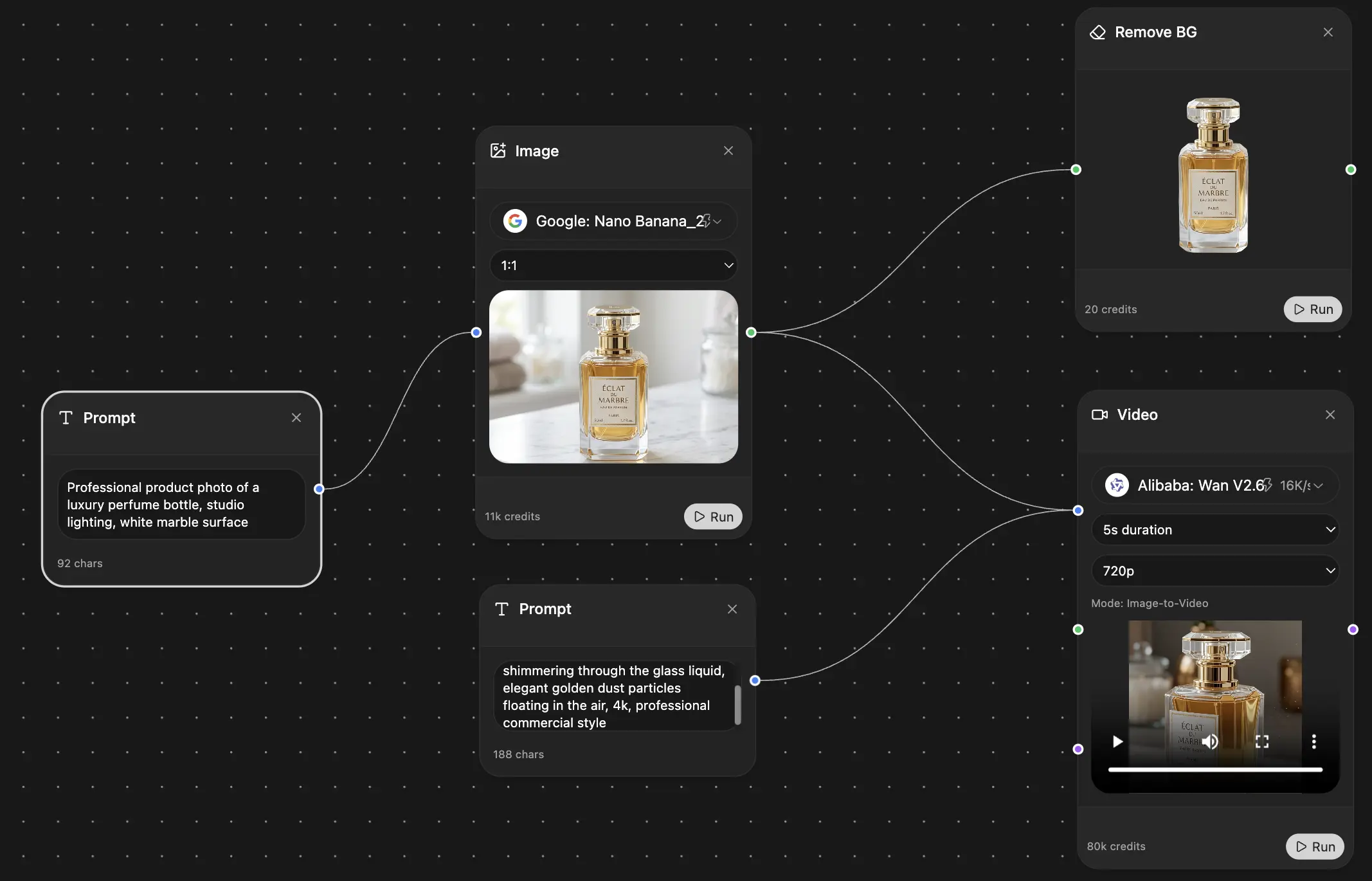

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers