Black Forest Labs FLUX.2: The New Standard for AI Image Generation?

Black Forest Labs just released FLUX.2, and it's a massive leap forward. From 4MP resolution to multi-reference support, here's why this matters for creators and developers.

I've been building Zemith for a while now, and if there's one thing I've learned about the AI space, it's that "new" doesn't always mean "better." We see models launch every week with hype that rarely survives the first few days of real-world testing.

But then there's Black Forest Labs. When they released the original FLUX, it genuinely shifted the landscape. It wasn't just hype; it was a tool that creators actually wanted to use.

Now, they've dropped FLUX.2, and after digging into the specs and seeing what it can do, I have to say: this feels like another leap, not just a step.

Here's my take on what makes FLUX.2 different and why it matters if you're building or creating with AI.

What is FLUX.2?

FLUX.2 is Black Forest Labs' second-generation image generation system. It's built on a latent flow matching architecture that couples a Mistral-3 24B-parameter vision-language model with a rectified flow transformer.

If that sounds like technical jargon, here's the translation: the vision-language model reads your prompt with real-world context, and the flow transformer turns that reading into an image. So it's not just matching keywords to pixels; it has a deeper grasp of spatial relationships, material properties, and real-world logic.

But specs are one thing. Features are what we actually use. Here's what stands out to me.

The Features That Actually Matter

1. Multi-Reference Support (The Game Changer)

This is the big one. FLUX.2 allows you to combine up to 10 reference images into a single output.

For anyone working in branding, character design, or consistent storytelling, this is huge. You're not just hoping the model remembers what your character looks like; you're giving it the blueprints. It enables a level of consistency across assets that was previously a nightmare to achieve without complex fine-tuning.
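If you're scripting this, it helps to enforce the reference cap before you send anything. Here's a minimal sketch of that check. The function name `select_references` and the idea of passing file paths are my own assumptions for illustration, not part of any FLUX.2 API; the only number taken from above is the limit of 10.

```python
MAX_REFERENCES = 10  # the cap quoted in this post; confirm it with your provider


def select_references(paths, limit=MAX_REFERENCES):
    """Return reference paths de-duplicated in first-seen order.

    Hypothetical helper: raises ValueError when the list is empty or
    when more than `limit` unique references remain.
    """
    unique = list(dict.fromkeys(paths))  # dicts preserve insertion order
    if not unique:
        raise ValueError("at least one reference image is required")
    if len(unique) > limit:
        raise ValueError(f"{len(unique)} references given, limit is {limit}")
    return unique


if __name__ == "__main__":
    refs = select_references(["hero_front.png", "hero_side.png", "hero_front.png"])
    print(refs)
```

Failing early like this is cheaper than discovering the limit through a rejected request halfway through a batch.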

2. 4MP Resolution & Photorealism

We're talking about native generation at resolutions up to 4 megapixels. That's print-quality territory.

But it's not just about pixel count. The "AI look"—that weird, plastic sheen that plagues so many models—is significantly reduced here. The textures are sharper, the lighting is more stable, and the details feel grounded. For product photography or high-end visualization, this is a serious upgrade.
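To put "4 megapixels" into numbers, here's a rough sketch of the arithmetic. It assumes a megapixel is one million pixels and rounds dimensions down to a multiple of 16, which is a common constraint for diffusion-style models but is my assumption here, not a documented FLUX.2 requirement. The 300 DPI figure is the usual rule of thumb for print.

```python
import math


def dimensions_for_megapixels(megapixels, aspect_w, aspect_h, multiple=16):
    """Return (width, height) in pixels that fit a pixel budget at an aspect ratio.

    Dimensions are rounded down to a multiple of `multiple` (an assumed
    constraint), so the result never exceeds the budget.
    """
    total = megapixels * 1_000_000
    height = math.sqrt(total * aspect_h / aspect_w)
    width = height * aspect_w / aspect_h
    return int(width // multiple) * multiple, int(height // multiple) * multiple


def print_size_inches(width_px, height_px, dpi=300):
    """Return the (width, height) in inches when printed at `dpi`."""
    return round(width_px / dpi, 2), round(height_px / dpi, 2)


if __name__ == "__main__":
    for ratio in [(1, 1), (16, 9)]:
        w, h = dimensions_for_megapixels(4, *ratio)
        print(ratio, (w, h), print_size_inches(w, h))
    # (1, 1) (2000, 2000) (6.67, 6.67)
    # (16, 9) (2656, 1488) (8.85, 4.96)
```

So a square 4MP image is about 2000 by 2000 pixels, or roughly 6.7 inches per side at 300 DPI. That covers product shots and small print work; a poster would still need upscaling.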

3. Typography That Works

We've all struggled with AI text. You ask for a sign that says "Coffee" and get "Cofefe" written in alien hieroglyphs.

FLUX.2 has made a major push here. It can reliably render complex typography, infographics, and UI mockups with legible, fine text. For designers mocking up concepts, this saves hours of Photoshop work.

4. Precision Control

They've introduced advanced control primitives like hex color steering and direct pose control. This moves us closer to "directing" the AI rather than just prompting it. You can tell it exactly what color you want or exactly how someone should be standing.
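Hex color steering is easiest to use reliably if you validate the codes before they go into a prompt. The sketch below does that and appends the colors to a prompt string. The phrasing "in color #RRGGBB" is just one plausible way to write it; I'm not claiming it's the exact syntax FLUX.2 expects, and both function names are made up for this example.

```python
import re

# Accepts 3- or 6-digit hex codes, with or without a leading "#".
HEX_RE = re.compile(r"^#?([0-9a-fA-F]{6}|[0-9a-fA-F]{3})$")


def normalize_hex(color):
    """Return the color as an uppercase '#RRGGBB' string, or raise ValueError."""
    match = HEX_RE.match(color.strip())
    if not match:
        raise ValueError(f"not a hex color: {color!r}")
    digits = match.group(1)
    if len(digits) == 3:
        digits = "".join(ch * 2 for ch in digits)  # expand 'abc' to 'aabbcc'
    return "#" + digits.upper()


def prompt_with_colors(base_prompt, colors):
    """Append 'name in color #RRGGBB' clauses to a prompt (hypothetical phrasing)."""
    parts = [f"{name} in color {normalize_hex(value)}" for name, value in colors.items()]
    return base_prompt + ". " + ", ".join(parts) + "."


if __name__ == "__main__":
    print(prompt_with_colors("A ceramic mug on a desk", {"mug": "#1a73e8"}))
    # A ceramic mug on a desk. mug in color #1A73E8.
```

Pulling brand colors from a single validated source means a typo shows up as an error in your script, not as a slightly wrong shade in fifty generated assets.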

The Variants

Black Forest Labs understands that one size doesn't fit all. They've released a family of models:

- FLUX.2 [pro]: The heavy hitter. Top-tier quality for production workflows.

- FLUX.2 [flex]: For developers who need to balance latency and accuracy.

- FLUX.2 [dev]: An open-weight checkpoint for the research community.

- FLUX.2 [klein]: A forthcoming optimized version for smaller setups.

This tiered approach is smart. It acknowledges that a hobbyist, a researcher, and a production studio have completely different needs.

Why This Matters for Builders

As a founder, I look at tools like FLUX.2 and see opportunity. The barrier to creating professional-grade visual assets is dropping rapidly.

The ability to use JSON prompting and structured instructions means we can build more reliable, programmatic workflows on top of these models. It stops being a slot machine and starts being a rendering engine.
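To make that concrete, here's a small sketch of what a programmatic, structured prompt could look like. The field names (`scene`, `subjects`, `style`, `color_palette`) are placeholders I chose for illustration; the model's documentation is the authority on which keys it actually understands.

```python
import json


def build_structured_prompt(scene, subjects, style, palette=None):
    """Serialize prompt components to a JSON string.

    All keys are hypothetical examples, not a documented FLUX.2 schema.
    """
    prompt = {"scene": scene, "subjects": subjects, "style": style}
    if palette:
        prompt["color_palette"] = palette
    # sort_keys keeps the output stable, which helps with caching and diffs.
    return json.dumps(prompt, indent=2, sort_keys=True)


if __name__ == "__main__":
    print(
        build_structured_prompt(
            scene="Minimal studio product shot on a white background",
            subjects=[{"description": "matte black water bottle", "position": "center"}],
            style="soft diffused lighting, 85mm lens",
            palette=["#111111", "#F5F5F5"],
        )
    )
```

The point of the structure is that each field can be filled from a database row or a template, so the same pipeline can produce a whole catalog with only the subject changing.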

Try FLUX.2 on Zemith

We believe in giving you access to the best tools the moment they're ready. That's why I'm excited to share that FLUX.2 is available on Zemith right now.

You don't need to set up complex local environments or manage API keys. We've integrated it directly into our platform. You can test its multi-reference capabilities, push the resolution limits, and see if it fits your workflow.

Whether you're generating assets for your next campaign or just exploring the bleeding edge of generative AI, FLUX.2 is worth your time.

Ready to see what FLUX.2 can do? Try it now on Zemith.

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

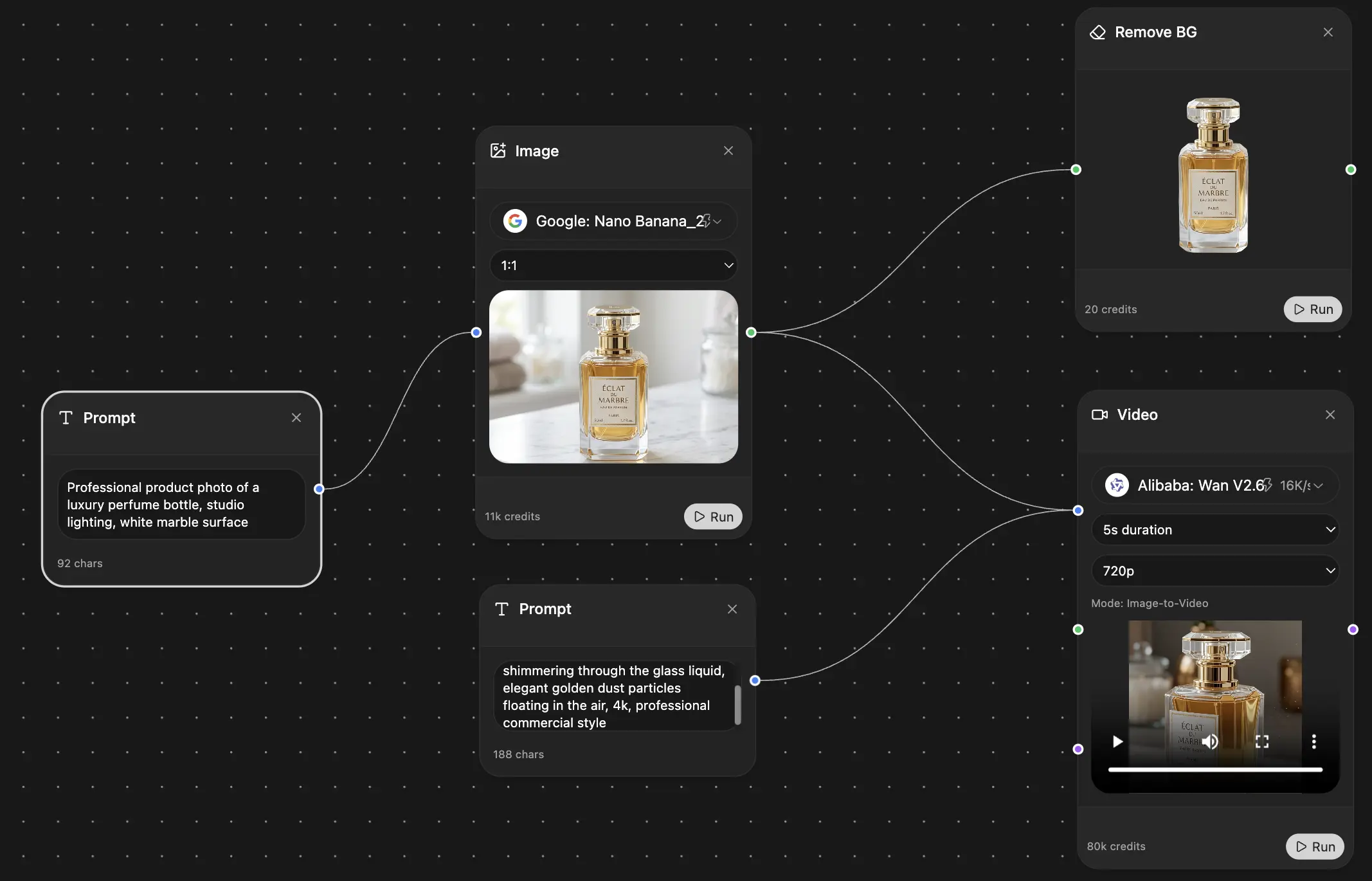

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers