Why AI Makes Some Workers 25% More Productive (And Others Slower)

A Harvard/BCG study found AI users finished tasks 25% faster. But the same study showed that on tasks outside AI's strengths, AI users were 19 percentage points less likely to get the right answer. Here's what separates the two groups.

TL;DR

What you need to know: A Harvard and BCG study of 758 consultants found AI users completed 12.2% more tasks, worked 25.1% faster, and produced higher-quality outputs. But the same study found that for certain tasks, AI users were 19 percentage points less likely to reach the correct answer than those working without AI. The researchers called this the "jagged technological frontier." The workers getting the biggest gains have learned where that frontier is. Most people haven't.

Key findings:

- AI gives a real productivity edge for tasks inside its strengths: writing, summarizing, brainstorming, coding

- For tasks outside those strengths, AI can actively hurt your accuracy

- The gap between calibrated AI users and everyone else is growing in 2026

- Building AI into a repeatable workflow matters more than which tool you use

- Keeping your judgment in the loop is what separates power users from overusers

Harvard Business School and Boston Consulting Group ran a widely cited AI workplace study. They gave 758 consultants 18 realistic work tasks. Some used AI. Some didn't.

The results split sharply.

The AI-using group finished 12.2% more tasks, worked 25.1% faster, and produced measurably better outputs. For a knowledge worker putting in 40 hours a week, that 25% speed gain means finishing Friday's work by Thursday afternoon.
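The Thursday-afternoon figure is easy to check. This is a rough sketch, not a number from the study: it assumes a week of five 8-hour days and reads "25.1% faster" as "the same work takes 25.1% less time."

```python
# Assumptions (not from the study): five 8-hour days, and
# "25.1% faster" read as "the same work takes 25.1% less time".
HOURS_PER_WEEK = 40
HOURS_PER_DAY = 8
TIME_SAVED = 0.251

hours_needed = HOURS_PER_WEEK * (1 - TIME_SAVED)
full_days, hours_into_next_day = divmod(hours_needed, HOURS_PER_DAY)

days = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday"]
finish_day = days[int(full_days)]

print(f"{hours_needed:.1f} hours of work, done about "
      f"{hours_into_next_day:.1f} hours into {finish_day}")
```

Under those assumptions, a 40-hour workload shrinks to about 30 hours, which lands roughly six hours into Thursday.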

But here's what most people skip over.

For tasks that fell outside AI's strengths, the same consultants using AI were 19 percentage points less likely to produce the correct answer than those working without it. In some cases, AI actively hurt performance.

The researchers called this the "jagged technological frontier." The workers getting the biggest gains know where that frontier is. Most people don't.

The Jagged Technological Frontier, Explained

Picture a map of every task a knowledge worker does. Writing emails. Researching markets. Analyzing data. Building presentations. Debugging code. Making judgment calls on ambiguous problems.

Now draw a jagged, irregular border through that map. Inside the border: tasks where AI excels. Outside: tasks where AI struggles, confuses, or actively misleads.

The frontier is jagged because it's counterintuitive. AI is excellent at drafting a persuasive email but can confidently produce a wrong financial calculation. It can summarize a 50-page report in 30 seconds but miss the single paragraph that changes everything. It writes solid boilerplate code but gets logic wrong when the problem is genuinely novel.

The workers thriving with AI learn this frontier through experience. They stop treating AI like a universal answer machine. They start treating it like a specialist with a specific range.

Three Types of AI Users

Two-plus years of watching how professionals use AI suggests that most people fall into three groups.

Skeptics barely use AI. They tried it once, got a mediocre result, and went back to what they know. They're leaving 25% efficiency gains on the table. The gap between them and their AI-fluent peers compounds over time.

Overusers apply AI to everything. Every email, every decision, every piece of research. They're the ones the BCG study warned about. They're faster on easy tasks but worse on the ones that actually matter. They've outsourced their judgment without realizing it.

Calibrated users are selective. They've mapped their own jagged frontier through trial and error. They know which parts of their workflow AI accelerates. They trust AI for the first draft but not the final decision.

Most people sit somewhere between skeptic and overuser. Very few are fully calibrated yet. That's exactly what makes it an advantage.

What Calibrated AI Users Actually Do Differently

They've mapped their frontier

Calibrated users have a mental shortlist of what AI reliably helps with. For most knowledge workers, that list includes:

- First drafts of anything: emails, proposals, reports, code

- Summarizing long documents, meeting notes, research papers

- Brainstorming options when you're stuck and need to see the space quickly

- Explaining unfamiliar concepts without jargon

- Repetitive formatting and data transformation tasks

And the list excludes:

- Novel problems that require original judgment

- Anything where being wrong has real consequences and won't be caught in review

- Tasks depending on context AI doesn't have: company strategy, recent events, niche domain knowledge

- Creative work where "good enough" isn't good enough

Knowing this list changes how you use AI. You stop throwing hard judgment calls at it and expecting a reliable answer.

They write prompts with context, not commands

The difference between a vague prompt and a useful one is almost always context. Most people write "summarize this" or "write me an email about X." Power users add the audience, the goal, the tone, and the constraints.

This isn't complicated. It's briefing AI like a capable colleague who needs background information rather than a search engine that will figure it out. A good briefing covers who this is for, what you're trying to accomplish, what format you need, and what to avoid.
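One way to make the briefing habit stick is to turn those four elements into a fill-in template. The sketch below is only an illustration: `build_brief` and its field names are hypothetical, and the example values are made up.

```python
def build_brief(task, audience, goal, output_format, avoid):
    """Assemble a context-rich prompt from the task plus four briefing elements."""
    lines = [
        f"Task: {task}",
        f"Audience: {audience}",        # who this is for
        f"Goal: {goal}",                # what you're trying to accomplish
        f"Format: {output_format}",     # what format you need
        f"Avoid: {avoid}",              # what to leave out
    ]
    return "\n".join(lines)


# Made-up example values, for illustration only.
prompt = build_brief(
    task="Write an email about the delayed product launch",
    audience="Existing customers who pre-ordered",
    goal="Explain the delay and keep them from cancelling",
    output_format="Under 150 words, plain text, one clear next step",
    avoid="Technical detail and promises about exact dates",
)
print(prompt)
```

Compare the result with "write me an email about the launch." The task is the same, but the model now has the background a colleague would ask for.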

The prompt engineering guide for beginners covers the specific techniques that make the biggest difference, including role-based prompting and few-shot examples.

They've built repeatable workflows

Casual AI users go to their AI tool when they're stuck. Calibrated users have built AI into their regular process. They have a set of proven prompts for their most common tasks. They reuse what works. They don't start from scratch each time.

A freelance content strategist might have a template for client briefs, a research framework for articles, and a revision checklist. Each involves AI at a specific step. She doesn't just use AI occasionally. She has a system.

If you want to build that kind of setup, the guide to automating daily tasks with AI is a practical starting point for identifying which tasks to automate first.

They keep their judgment in the loop

The 19-point accuracy drop for out-of-frontier tasks happened because consultants trusted AI outputs they should have verified. Calibrated users treat AI as the first draft, not the finished product. They check claims that matter. They edit rather than copy-paste.

This sounds obvious, but it's where overusers go wrong. The more familiar AI feels, the easier it is to stop reading carefully. The BCG study found this affected consultants regardless of seniority. Experienced professionals fell into the same trap as junior ones when they stopped questioning the output.

The Productivity Gap Is Growing

In 2023, most knowledge workers were still figuring out what AI could do. By 2026, two groups have pulled apart.

One group has built consistent AI habits. They have 10 to 20 hours of mental work each week that AI now handles at first-draft quality. They respond faster, produce more, and take on scope that used to require a team.

The other group hasn't changed much. Maybe they use AI occasionally. Maybe they tried a few tools and didn't see the value. They're doing the same work at roughly the same pace.

This gap shows up in what people can charge and what they can take on. Solopreneurs with strong AI workflows are competing for contracts that used to require agencies. The market for the best AI tools for solopreneurs has grown because the ROI is real, but only for people who've actually learned to use these tools well.

You don't have to be a heavy AI user. But being a calibrated one is increasingly a baseline expectation in competitive fields.

How to Find Your Own Jagged Frontier

You don't need a framework or a productivity consultant. You need five days of honest observation.

Pick one work week. At the end of each day, note two things:

- A task where AI gave you a noticeably better result, faster, than you'd have gotten alone

- A task where AI slowed you down, gave you wrong information, or required so much correction it wasn't worth it

After five days, you'll have 10 data points. That's your starting map. The wins show you where to use AI more. The losses tell you where to stop.
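If you keep those notes in a structured form, turning them into a map takes a few lines. The sketch below uses a made-up week of entries. The task names, the "win"/"loss" labels, and the `frontier_map` helper are hypothetical, and ten data points are a starting signal rather than a verdict.

```python
from collections import Counter

# Hypothetical week of notes: one win and one loss per day.
log = [
    ("email drafts", "win"),      ("financial calculation", "loss"),   # Mon
    ("meeting summary", "win"),   ("client strategy call", "loss"),    # Tue
    ("email drafts", "win"),      ("financial calculation", "loss"),   # Wed
    ("code boilerplate", "win"),  ("meeting summary", "loss"),         # Thu
    ("brainstorming", "win"),     ("client strategy call", "loss"),    # Fri
]


def frontier_map(entries):
    """Group task types by whether wins or losses dominate."""
    wins = Counter(task for task, outcome in entries if outcome == "win")
    losses = Counter(task for task, outcome in entries if outcome == "loss")
    tasks = set(wins) | set(losses)
    return {
        "use more": sorted(t for t in tasks if wins[t] > losses[t]),
        "stop": sorted(t for t in tasks if losses[t] > wins[t]),
        "mixed": sorted(t for t in tasks if wins[t] == losses[t]),
    }


for label, tasks in frontier_map(log).items():
    print(f"{label}: {', '.join(tasks) or 'none'}")
```

Tasks that land in "mixed" are the ones worth watching in the next round, since they sit right on the frontier.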

Repeat this every couple of months. The tools improve. Your skills improve. The frontier shifts.

How to Get Into the Calibrated Group

A few practical moves that make a real difference:

Start with your highest-volume task. Whatever you do most often is where calibrating AI use pays off fastest. If that's email, start there. If it's code review, start there.

Keep a prompt library. When a prompt gives you a great result, save it. Most calibrated users have a document with their 10 to 20 most-used prompts. That's the whole playbook for consistent results.

Set a verification habit. For any AI output going to someone else, build in one review step. You don't need to verify everything. But having a standard check before sending prevents the trust creep that leads to accuracy drops.

Use AI for exploration, not decisions. AI is excellent at generating options and mapping a problem space. It's less reliable at making the final call. Use it to see what you might be missing, then decide yourself.
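The verification habit can be partly mechanical. As a rough sketch, the snippet below pulls out every sentence containing a digit so that numbers, dates, and percentages get a second look before an AI draft goes out. Digits are a crude proxy chosen for illustration: the `claims_to_check` helper is hypothetical, and it will miss claims written in words, so it supports a human read-through rather than replacing it.

```python
import re


def claims_to_check(text):
    """Return the sentences that contain digits, as candidates for manual review."""
    # Split after sentence-ending punctuation followed by whitespace,
    # so decimals like "3.5" stay inside their sentence.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if re.search(r"\d", s)]


# Made-up AI draft, for illustration only.
draft = (
    "Thanks for the call today. Revenue grew 14% last quarter. "
    "We can deliver by March 3. Let me know if that works."
)

for sentence in claims_to_check(draft):
    print("CHECK:", sentence)
```

Here the two sentences with figures are flagged and the pleasantries pass through, which keeps the review step short enough to do every time.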

FAQ

Does it matter which AI tool I use?

Less than most people think. The BCG study used GPT-4, but the habits apply to any tool: knowing your frontier, giving context in prompts, keeping your judgment in the loop. That said, some tools are genuinely better at specific tasks. A purpose-built coding assistant handles code review differently than a general chat tool.

What if I've never really used AI for work?

Start with the task you spend the most time on. If it's writing, try using AI for first drafts. If it's research, try summarization. Pick one use case. Get good at it. Then expand. Trying to use AI for everything at once is how people become overusers.

Can senior professionals still fall into the accuracy trap?

Yes. The BCG study found the accuracy drop happened across seniority levels. The deciding factor wasn't experience. It was whether someone recognized when a task was outside AI's strengths. Senior workers who assumed AI was reliable across the board performed worse than junior workers who stayed skeptical.

How long does it take to become a calibrated AI user?

Most people start seeing the pattern within 2 to 4 weeks of consistent use. You don't need months. You need enough repetition across different task types to notice which tasks AI reliably helps with and which ones it doesn't.

The Bottom Line

The Harvard/BCG findings still hold in 2026: AI makes calibrated users significantly more productive and can make overconfident users less accurate. The difference isn't which tool you have. It's knowing where AI's actual strengths are for your specific work.

The frontier is different for everyone. A developer's frontier looks different from a writer's or a strategist's. But everyone has one, and mapping it is more valuable than adding another tool to your stack.

If you want one place to test AI across different tasks and find what actually works for your workflow, Zemith gives you access to multiple leading AI models with chat, coding assistance, and AI agents in one place. It's easier to find your frontier when you're not switching between platforms.

Explore Zemith Features

Every top AI. One subscription.

ChatGPT, Claude, Gemini, DeepSeek, Grok & 25+ more

Always on, real-time AI.

Voice + screen share · instant answers

What's the best way to learn a new language?

Immersion and spaced repetition work best. Try consuming media in your target language daily.

Voice + screen share · AI answers in real time

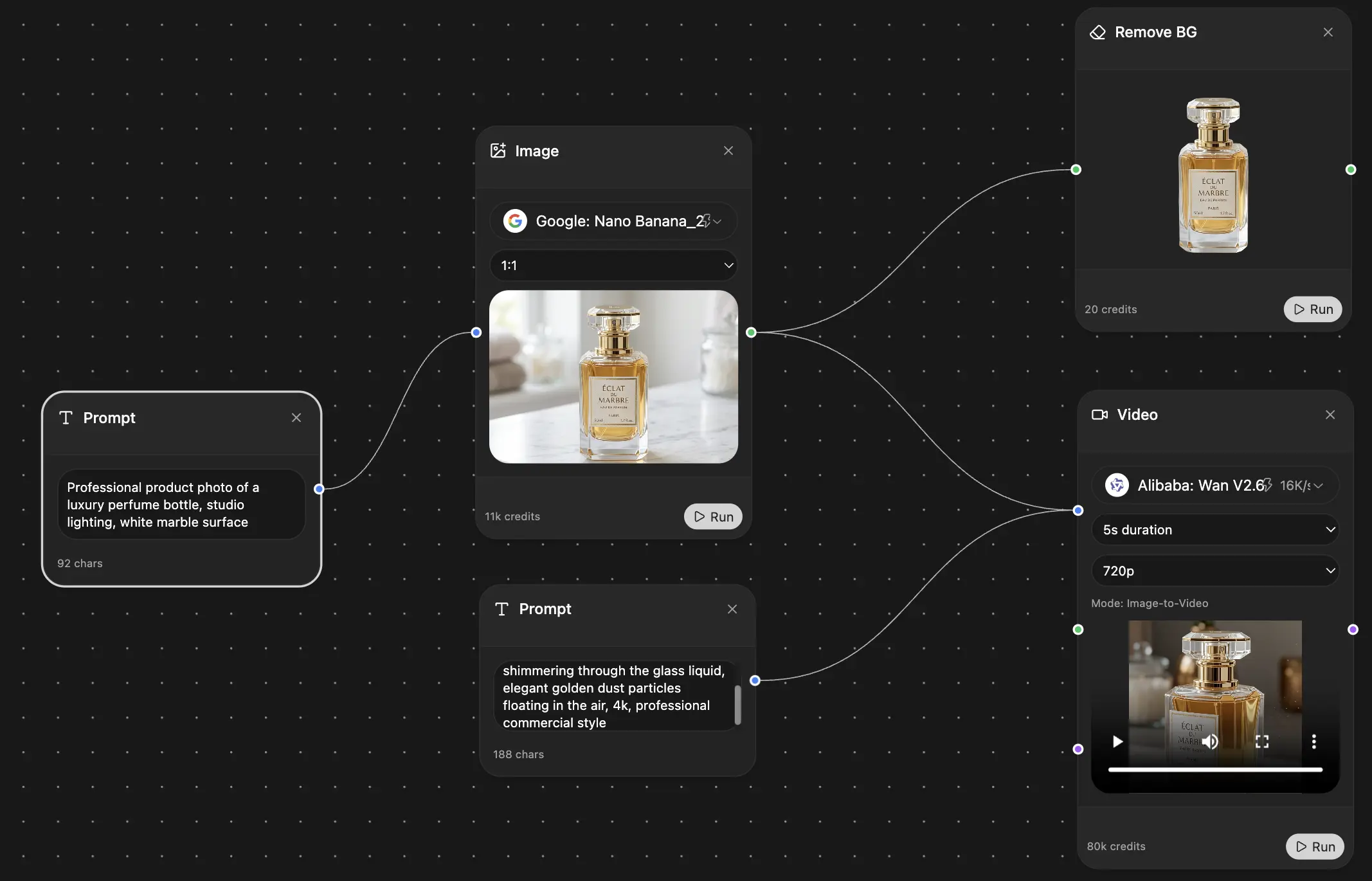

Image Generation

Flux, Nano Banana, Ideogram, Recraft + more

Write at the speed of thought.

AI autocomplete, rewrite & expand on command

Any document. Any format.

PDF, URL, or YouTube → chat, quiz, podcast & more

Video Creation

Veo, Kling, Grok Imagine and more

Text to Speech

Natural AI voices, 30+ languages

Code Generation

Write, debug & explain code

Chat with Documents

Upload PDFs, analyze content

Your AI, in your pocket.

Full access on iOS & Android · synced everywhere

Your infinite AI canvas.

Chat, image, video & motion tools — side by side

Save hours of work and research

Transparent, High-Value Pricing

Trusted by teams at

Free

No credit card required

- 100 credits daily

- 3 AI models to try

- Basic AI chat

Plus

- 1,000,000 credits/month

- 25+ AI models — GPT, Claude, Gemini, Grok & more

- Agent Mode with web search, computer tools and more

- Creative Studio: image generation and video generation

- Project Library: chat with document, website and youtube, podcast generation, flashcards, reports and more

- Workflow Studio and FocusOS

Professional

- Everything in Plus, and:

- 2,100,000 credits/month

- Pro-exclusive models (Claude Opus, Grok 4, Sonar Pro)

- Motion Tools & Max Mode

- First access to latest features

- Access to additional offers